
Videos are meant to inform, engage, and entertain. But all that needs to happen in a way that's appropriate for the audience. For example, if an accident happens during a live Formula One race, the broadcast team will moderate the footage to protect viewers and the people involved.

Video content moderation is a bit like digital gardening. It prunes the bad stuff so the good community can grow and flourish.

How does this whole moderation thing actually happen? And how do you know when it’s gone too far? Keep reading to find out what the latest video moderation methods are, and how they work.

What is video moderation?

Video content moderation is the process of reviewing and managing videos to ensure they stick to certain standards or guidelines. The goal is to filter out inappropriate content, such as violence, explicit material, hate speech, or even entertaining content that violates a platform's terms of service. By doing so, content moderation:

- Protects viewers, a brand's image, or a platform's reputation.

- Ensures a safe, enjoyable user experience and legal compliance.

The more user-generated content a site publishes and hosts, the more complex and challenging moderation becomes. At the same time, video streaming platforms can easily moderate too aggressively and make users feel like their freedom of speech is limited.

For example, YouTube works with hundreds of human moderators who watch videos to ensure they’re safe for children. It has developed a resource center for parents on navigating privacy settings for their children's accounts, and it also relies on AI tools to scan videos for adult or violent content.

Facebook's stance on moderating hate speech is another example, though a more controversial one. The company has taken steps to reinforce its hate speech rules, and users can also report content they consider inappropriate. Still, the platform was recently accused of double standards and biased moderation of political and war-related content.

Effective content moderation is a balancing act. If you don’t moderate enough, people will feel uncomfortable using the site. But overdo it, and you risk annoying them or, worse, censoring their voices.

The way to keep a platform safe for its intended audience is a "light touch" approach to moderation. Whether for social media platforms or employee training hubs, striking this balance is essential.

How video moderation works

Just as there are many ways to make and share videos, there are many ways to moderate content. Every platform that publishes video content considers the following questions when designing its moderation program:

- Who will do the moderation? Humans, AI, or both?

- When will moderation occur? Before or after publishing?

- Why do we moderate? For global or local compliance? Or to create a specific community?

- What consequence will we implement? What do we do with flagged content?

Let’s dive a little deeper into each of these questions.

1. Who's the content moderator?

Content moderation can be:

- Fully automated using AI, algorithms, and machine learning

- Managed entirely by humans

- A combination of technology and human efforts

Human moderators range from employees to community members and even content creators who self-moderate. For instance, advertising platforms like Facebook and Amazon Advertising have humans review ads for legal compliance before publication.

Regardless of who’s moderating, the goal is less about judging quality than about ensuring that content is appropriate and lawful.

2. When does the moderation happen?

Moderation can be proactive (before publishing) or reactive (after publishing, in response to complaints).

Reactive moderation, or post-moderation, is the most straightforward method. Some platforms have humans review recently published content, and users can also report inappropriate content. Because of the vast volume of content on social media platforms, many companies openly encourage everyday users to take part in moderation.

Proactive moderation, or pre-moderation, is more complex and can go two ways. Either the content is reviewed before it becomes publicly available (typical on advertising platforms), or it’s constrained before it’s even created by limiting content creators’ administrative rights.

Limiting creators is common in businesses where employees produce training videos and inappropriate content needs to be flagged and reviewed. Every video is reviewed before it goes live, and the people making them know what’s allowed and what’s prohibited.

3. What’s the scope and severity of our moderation?

Ideally, content moderation should be global, applying uniform standards across all regions and demographics. However, sometimes it's localized and tailored to specific cultural, legal, or regional requirements.

An example of localized moderation involves offensive content related to Nazi symbolism and Holocaust denial or trivialization. In many countries, such content may be protected under free speech laws, whereas in Germany it’s prohibited under the Criminal Code, so any video platform operating there must quickly identify and remove it.
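One way to picture the global-versus-local split is a shared baseline of rules plus per-region additions. The sketch below is a minimal illustration under assumed names: the category labels, the `GLOBAL_RULES` and `REGIONAL_RULES` tables, and both helper functions are hypothetical, and the regional entry only mirrors the Germany example above rather than any platform's actual policy.

```python
# Hypothetical rule tables: the category labels are illustrative only.
GLOBAL_RULES = frozenset({"explicit_violence", "hate_speech"})

# Extra categories blocked only in specific regions (keyed by country code).
REGIONAL_RULES = {
    "DE": frozenset({"nazi_symbolism", "holocaust_denial"}),
}


def blocked_categories(region: str) -> frozenset:
    """Return the global baseline plus any additions for the given region."""
    return GLOBAL_RULES | REGIONAL_RULES.get(region, frozenset())


def is_viewable(video_categories: set, region: str) -> bool:
    """A video is viewable if none of its categories are blocked in the region."""
    return blocked_categories(region).isdisjoint(video_categories)
```

Keeping the regional rules as additions to a global baseline means a region can tighten the standard without anyone having to restate the shared rules.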

4. What are the consequences?

Consequences almost always depend on:

- Whether this is a first-time or repeat infringement

- The severity of the infringement

In most cases, content will be removed and the user will receive a warning and guidance on how to improve in the future. In addition, their next few posts might also suffer from restrictions like limited reach or visibility. In the case of a severe or repeat offense, the platform might ban the user, and even report them to a relevant authority if the content calls for it.
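The escalation logic described above can be sketched as a small decision function. This is an illustration under stated assumptions, not any platform's actual policy: the `Severity` levels, the `Outcome` fields, the `repeat_threshold` parameter, and the `illegal` flag are all hypothetical names, and the text's "might" is turned into a fixed rule purely to keep the example short.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    MINOR = 1
    SEVERE = 2


@dataclass(frozen=True)
class Outcome:
    remove_content: bool
    warn_user: bool
    limit_reach: bool
    ban_user: bool
    report_to_authority: bool


def decide_consequence(severity: Severity, prior_offenses: int,
                       illegal: bool = False,
                       repeat_threshold: int = 1) -> Outcome:
    """Map severity and offense history to a set of consequences.

    Assumption: any severe or repeat offense escalates to a ban, and a
    report to an authority happens only when the content is also illegal.
    """
    is_repeat = prior_offenses >= repeat_threshold
    escalate = severity is Severity.SEVERE or is_repeat
    return Outcome(
        remove_content=True,        # flagged content is always taken down
        warn_user=not escalate,     # first minor offense: warning and guidance
        limit_reach=not escalate,   # plus temporary visibility restrictions
        ban_user=escalate,
        report_to_authority=escalate and illegal,
    )
```

For instance, `decide_consequence(Severity.MINOR, prior_offenses=0)` yields a removal with a warning and limited reach, while a severe offense skips straight to a ban.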

How we moderate video content at Synthesia

As an AI video generator, we integrate moderation into our video creation platform. We focus on a two-level pre-moderation process, ensuring our users create responsible, respectful, and sensitive AI videos.

1st Level Moderation: Integrated human and automated moderation

Our moderation team uses a blend of automated detection tools and manual oversight to review content and prevent users from generating videos that don't align with our content moderation guidelines.

We have clear policies on what’s a no-go.

Prohibited content covers topics we don't allow at all, whereas restricted content covers sensitive topics that can’t be associated with our stock avatars, out of respect for the individuality and opinions of the people who represent those avatars.

Users can still include restricted content in their videos by creating their own custom avatars.

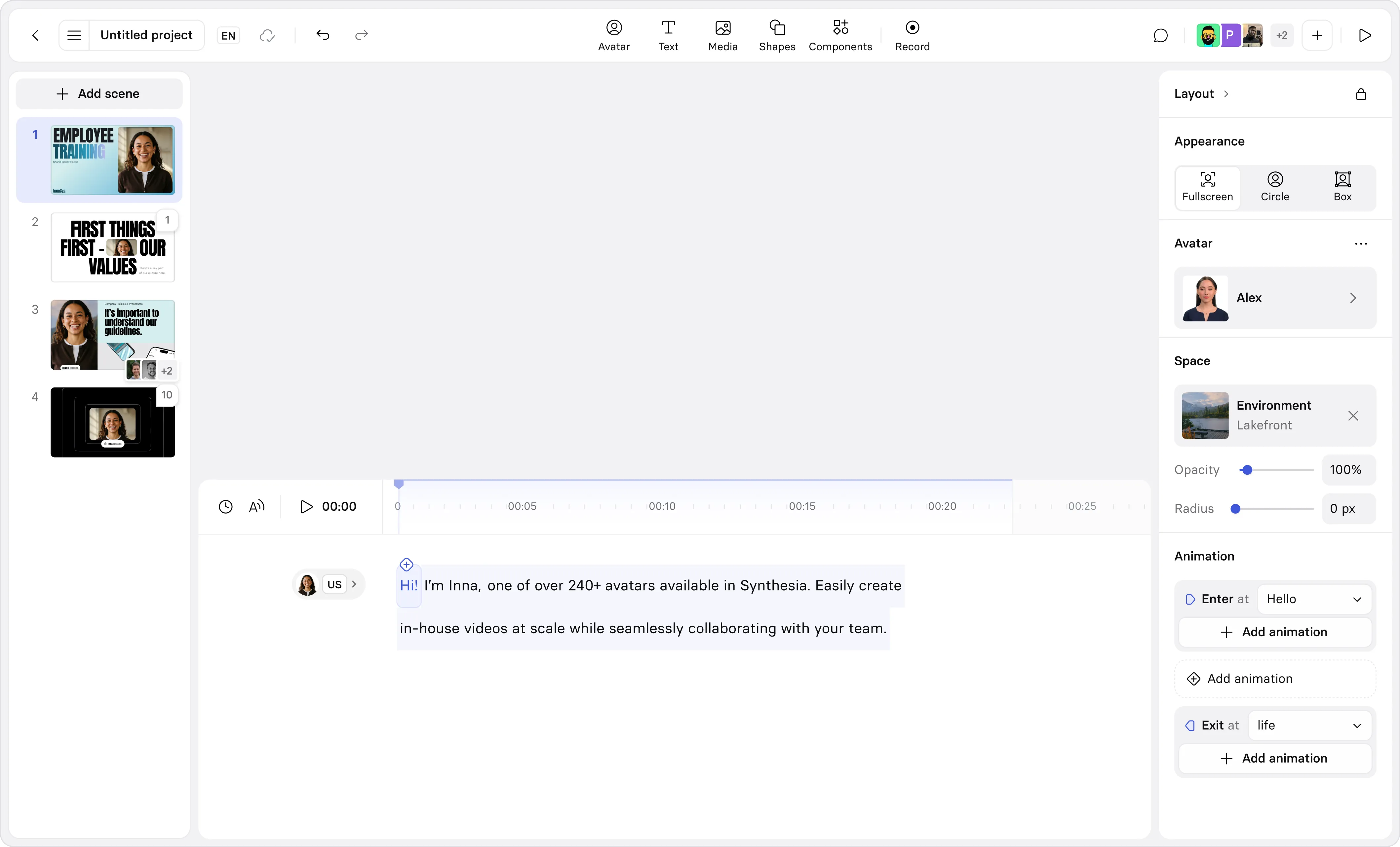
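The distinction between prohibited and restricted content can be expressed as a two-step check. The following is a rough sketch under assumptions, not a description of how Synthesia implements it: `AvatarType`, `check_script`, and the idea of tagging a script with a set of topic labels are all hypothetical, and the topic sets are passed in as parameters because the real policy lists are not reproduced here.

```python
from enum import Enum


class AvatarType(Enum):
    STOCK = "stock"
    CUSTOM = "custom"


def check_script(topics: set, avatar: AvatarType,
                 prohibited: set, restricted: set):
    """Return (allowed, reason) for a script tagged with a set of topics."""
    # Prohibited topics are rejected regardless of the avatar used.
    if topics & prohibited:
        return False, "prohibited topic"
    # Restricted topics are rejected only when paired with a stock avatar.
    if avatar is AvatarType.STOCK and topics & restricted:
        return False, "restricted topic with stock avatar"
    return True, "ok"
```

With placeholder labels, a script tagged `{"topic_b"}` where `"topic_b"` is restricted would fail with a stock avatar and pass with a custom one.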

2nd Level Moderation: Workspace and Collaboration features

The Workspaces and Collaboration features in Synthesia are meant to make video creation similar to writing content in a Google document.

In your workspace, you can limit access and control who gets to do what, allowing some people to make changes and others only to view or comment.

Team members can work on a video simultaneously, offering different perspectives and feedback. This collective oversight adds an extra moderation layer, making the final product more polished and appropriate.

The step-by-step moderation workflow

When you create a video with Synthesia, here's how our moderation kicks in:

- You craft your video with the desired content and hit ‘Generate.’

- As your video processes, it goes through our internal moderation systems.

- If something is flagged, the content goes to our Content Moderation Team for a final decision.

- If the content is cleared, we release it, and you're good to go. If not, you’ll get a notification explaining why and how to fix it.

- Breaking the rules or getting flagged repeatedly might lead to your account getting suspended.
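The steps above follow a common pattern: an automated pass first, then human review only for flagged items. Here is a minimal sketch of that pattern, assuming hypothetical names throughout. `keyword_check` is a toy stand-in for real detection tooling, `human_review` is any callable that returns `True` when a reviewer clears the content, and the `limit` in `should_suspend` is an arbitrary illustrative value. None of this reflects Synthesia's internal systems.

```python
from typing import Callable, List, Tuple


def keyword_check(script: str) -> List[str]:
    """Toy automated check: flag scripts containing placeholder terms."""
    watchlist = ("forbidden-term",)  # placeholder, not a real policy list
    lowered = script.lower()
    return [term for term in watchlist if term in lowered]


def moderate(script: str,
             auto_check: Callable[[str], List[str]],
             human_review: Callable[[str, List[str]], bool]) -> Tuple[str, List[str]]:
    """Run the automated pass, escalating to a human only when flagged."""
    flags = auto_check(script)
    if not flags:
        return "released", []      # nothing flagged: release immediately
    if human_review(script, flags):
        return "released", []      # reviewer cleared the flagged content
    return "rejected", flags       # reasons are returned so the user can fix them


def should_suspend(rejection_count: int, limit: int = 3) -> bool:
    """Hypothetical rule: suspend an account after `limit` rejections."""
    return rejection_count >= limit
```

Returning the flag reasons alongside the status is what makes the "why and how to fix it" notification possible.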

Our policies cover areas like harmful behavior, disagreeable content, safety, and integrity. To us, moderation isn’t just about keeping things in line but also about fostering a creative, respectful, and safe environment for everyone.

Video moderation resources and support

Education is vital in content moderation, as it helps users understand why we have rules and how to follow them. In other words, it reduces friction points and gets people on board with moderation.

To learn more about our content moderation policies, read this guide or explore our short, interactive Why We Moderate course.

For extra content moderation resources, check out:

- Internet Watch Foundation (IWF): Provides key resources and reports on combating online child sexual abuse.

- Electronic Frontier Foundation (EFF): Offers insights into digital privacy, free speech, and censorship.

- Berkman Klein Center: Publishes research on internet governance, focusing on content moderation and digital policy ethics.

- Family Online Safety Institute (FOSI): Delivers online safety resources for parents and educators, promoting the importance of content moderation in the family and on youth-oriented platforms.

- Tech Against Terrorism: Offers guidance and workshops for tech companies on moderating content to prevent the spread of terrorism-related material online.

Join the future of responsible video creation with AI

Synthesia helps you shake up your video production and experiment with collaborative video creation.

Our platform, guarded by built-in video content moderation tools, is ideal for companies with extensive teams where multiple contributors must edit, comment, and enhance videos simultaneously.

Join us to streamline your content creation. You’ll get your team to collaborate better and produce impactful, responsible AI videos that hit the mark with your audience.

Head over to our free AI video generator to test out Synthesia for yourself.

Kyle Odefey is a London-based filmmaker and Video Editor at Synthesia. His content has reached millions across TikTok, LinkedIn, and YouTube, even inspiring an SNL sketch, and has been featured by CNBC, BBC, Forbes, and MIT Technology Review.