Beyond Compliance: Training Videos That Make Better Decisions Repeatable


Several years ago, I led a working session with senior engineering managers on inclusive hiring. Their pipeline had become primarily referral-driven (a common pattern in tech), but the business impact was showing up in a place they couldn’t ignore: innovation was declining.

They were battling groupthink — most of the leadership group had similar educational backgrounds and career trajectories. A handful had even come up through the same large tech organization under the same manager. When we reviewed interview artifacts, we found a consistent pattern. Feedback wasn’t anchored to role outcomes or a rubric. It was anchored to an internal “expected” approach:

“They didn’t solve the problem the way I expected.”

This problem wasn’t going to be solved with a lecture on affinity bias, though we did introduce the term. It required shared alignment on the source of the issue, followed by structured interventions, reinforced with feedback.

The team overhauled interviewing. They introduced mandatory training for everyone involved in interviewing so the group aligned on behavioral competencies. They made evaluation criteria explicit, and managers championed the reviews: reading notes before the debrief and giving feedback to keep the bar consistent.

That’s what good training does. It changes behavior in the moments that matter, and you can measure the difference. Training designed to build more diverse and equitable workforces should meet the same bar, whether or not it also supports compliance.

Compliance sets the baseline. But if the goal is better, fairer decisions at scale, training has to show people what to do.

Video, paired with practice and feedback, is what makes behaviors visible and repeatable. So let’s talk about how to create effective diversity and inclusion training programs.

If compliance is the baseline, what should training improve?

Most people associate “DEI training” with compliance. It’s mandated. It’s annual. It’s the thing you click through to check a box.

And to be fair, compliance training matters. It sets the baseline for what’s expected, what’s prohibited, and what to do when something goes wrong. Your company probably has compliance training already (or you’re here because you need it).

But here’s the problem. Too often, it’s built to be completed, not applied. People zone out and hunt for the fastest path to “next.”

It doesn’t have to be that way.

You can make compliance training actionable and engaging. You can design it so people know exactly what “good” looks like, and what to do in the moments that actually shape culture.

If your goal is a culture where people stay and do their best work, policies aren’t enough.

You need to show how standards are upheld in everyday behavior: how managers give feedback, what happens when someone is interrupted, how harmful behavior is addressed, and what a good response looks like when someone raises a concern.

That isn’t lip service. That’s how standards hold up at scale.

What makes inclusive training videos effective?

Pick one moment that shapes outcomes, define the behavior you want instead, and make it easy to practice. We’ll use one consistent example throughout: an interview debrief where feedback drifts into “fit” and gut feel instead of evidence tied to role expectations.

- Start with one moment that matters

Instruction: Pick a real workplace moment where decisions get made and standards often drift.

Example: An interview debrief where feedback turns into “good fit” or “I liked them.”

- Define the behavior change in one sentence

Instruction: Write an outcome you can observe.

Example: “Interviewers can redirect ‘fit’ language back to the rubric and ask for evidence before a decision is made.”

- Make the standard explicit

Instruction: Agree on a simple standard that can be repeated word-for-word.

Example: “We evaluate against the rubric. We cite evidence. We don’t use ‘fit’ as a substitute for criteria.”

- Show contrast: drift vs. good behavior

Instruction: Show the common failure briefly, then spend most of the video modeling the best response.

Example: An interviewer says, “They didn’t feel like a good culture fit.” Redirect: “Which rubric criteria are you scoring, and what evidence supports that?”

- Build practice into the decision point

Instruction: Put the practice prompt exactly where the mistake happens.

Example: Pause after “good culture fit” and ask learners to choose (or say) the best redirect line. Then explain why it works.

- Add feedback and reinforcement

Instruction: Create a lightweight feedback loop that shows up in the workflow.

Example: Use a short debrief checklist and a manager habit (like spot-checking notes for rubric criteria and cited evidence) to keep the standard consistent.

💡Tip: Structure your script scene by scene: moment → drift → model → practice → feedback → reinforcement.

How do I tailor training by role and region?

Inclusive training works best when it’s built around the decisions people actually make in their roles. A first-time interviewer needs behavioral interview questions, a few redirect lines, and a clear assessment rubric. A hiring manager needs to run a consistent debrief, coach interviewers, and protect the standard under time pressure. A talent partner needs to spot patterns across panels and reinforce calibration.

Here are a few best practices that keep training tailored without creating a separate program for every team:

- Anchor each role to one repeatable job-to-be-done

For interviewers, that might be “score against the rubric and cite evidence.” For hiring managers, it’s “run a debrief that produces a defensible decision.”

- Give people usable language, not platitudes

Role-based training should include the exact words people can use in the moment. “Let’s anchor to the rubric: what evidence supports that rating?” is more actionable than “avoid bias.”

- Add the reinforcement move only that role can do

Hiring managers can spot-check notes, reset subjective language in debriefs, and run calibration. Talent partners can audit patterns and nudge consistency across panels.

- Localize the scenario, not the standard

Adapt language, examples, and cultural context by region, but keep the behavioral expectation consistent. The goal is to reduce message drift while making the scenario feel real to the audience.

- Deliver it at the moment of need

Trigger role-based modules when someone becomes an interviewer, starts managing, or opens a hiring loop. That’s how training becomes performance support instead of an annual event.

💡Tip: If you want these standards to hold up across teams and regions, the training has to be accessible: captioned, and available in the languages your teams actually work in.

Now let’s turn this into a first draft using a template. Pick one workplace scenario and write a video-ready script you can test, refine, and scale.

Use a template to build your first draft

One of the most effective ways to implement training is to include real practice and a real feedback loop. People change when they can rehearse a response, get coached on what “good” looks like, and apply the standard in the next real moment.

The easiest way to build that kind of training is to start with a single scenario and script it using an editable template, like the one below. Templates — whether shared across the company or customized with your branding — keep the structure consistent while still letting you tailor the content by role and region. You can reuse the same scenario pattern, swap the talk track for an interviewer versus a hiring manager, and localize language and examples without rebuilding the module from scratch.

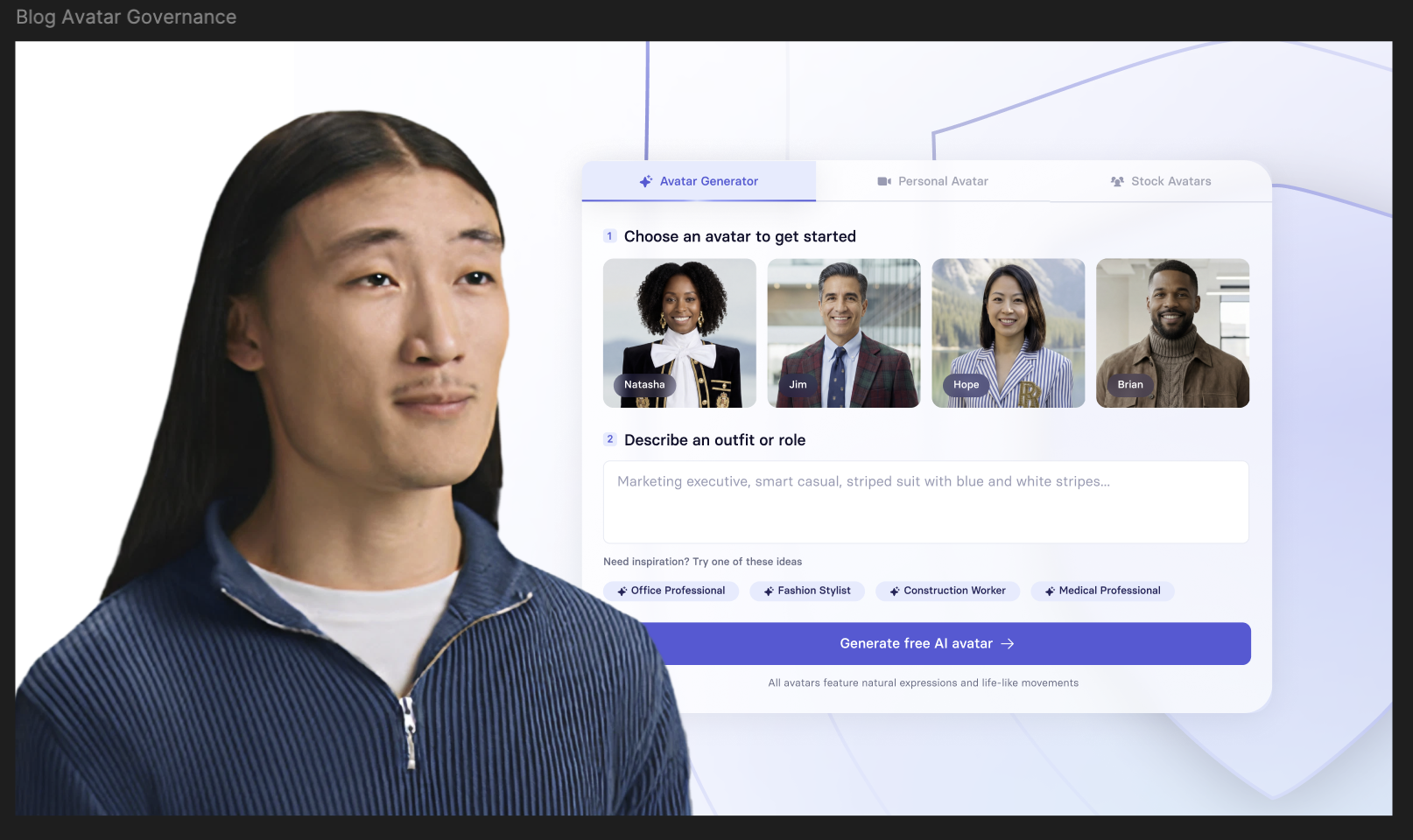

💡Tip: Want a quick first draft? Paste the scenario we shared (or your own) into Synthesia’s text-to-video tool.

How do you measure whether inclusive workplace training is working?

If you only measure completion, you’ll only optimize for completion. Instead, measure across three layers: whether people adopt the behavior, whether the workflow reflects the standard, and whether outcomes move over time. This “stack” approach is consistent with research that calls for clearer outcomes of interest, better proxy metrics, and more rigorous evaluation.

- Adoption signals

These tell you whether people are using the standard you trained. In the interview debrief example, adoption signals might include whether interview notes reference rubric criteria, whether evidence is cited, and whether “fit” language declines in favor of observable behaviors. These are the earliest signs the training is being applied.

- Process health

These signals tell you whether the organization is making decisions more consistently and fairly. For hiring, this might show up as more consistent scoring patterns across interviewers, clearer debrief outcomes, and fewer decisions driven by subjective “expected approach” preferences.

- Outcomes

Outcomes matter, but they move slowly and are influenced by many factors. Look for directional movement in indicators like retention by team/level, internal mobility, candidate experience signals, or patterns in employee relations concerns.

💡Tip: Pick 1–2 adoption signals and 1 process signal for each training scenario, then review outcomes quarterly to see whether the system is trending in the right direction.

Who to partner with for meaningful measurement

Measuring whether inclusive workplace training is working is a cross-functional effort. The strongest signals already exist across the teams that own the workflows and outcomes you’re trying to change. The goal is to align on a small, shared set of indicators, then review them on a steady cadence with the partners who can actually act on what you learn.

- Talent Acquisition and Recruiting Ops

They can tell you whether interviews are structured in practice, whether rubrics are used consistently, and whether debrief notes are moving from “fit” language to evidence tied to criteria. They’re also closest to pipeline patterns, shortlist decisions, and interviewer calibration signals.

- HR Business Partners (HRBPs)

HRBPs often have the clearest view into where standards drift, which teams need reinforcement, and what themes are emerging in manager conversations before they show up in formal channels.

- Employee Relations, Legal, and Compliance

They can help you track whether people know the right pathways, whether responses are consistent, whether issues are escalated appropriately, and whether documentation quality is improving. Here, measurement should focus on response quality and consistency, not just incident volume.

- People Analytics

They can help you triangulate signals across sources, avoid over-attributing outcomes to a single intervention, and spot patterns by team, role, or location that suggest targeted reinforcement.

💡Tip: Review your Voice of Employee (VoE) data (exit interviews, engagement surveys, and manager surveys). These sources often surface early signals that day-to-day standards aren’t being applied — well before you see it show up in performance or attrition trends.

The goal is to triangulate measurement: pair what people say (VoE) with what teams do (adoption and process signals) and what changes over time (outcomes). Use the table below to choose what to track for each training use case. Start small: pick one adoption signal, one process signal, and one outcome signal, then review them with the partner teams on a regular cadence.

Once you can build and measure training, you need governance to keep standards consistent. Otherwise, content goes stale and behavior drifts.

Who owns the standard (and keeps it current)?

In most organizations, different teams own parts of this work. “DEI training” often overlaps with compliance requirements, legal reporting obligations, employee relations processes, and manager enablement. To keep the program sustainable and impactful, governance matters: who sets the standard, who teaches it, who reinforces it, and who updates it when policies (or circumstances) change.

Assign clear owners

Assign owners across four responsibilities so the program stays accurate, usable, and adopted: policy accuracy, learning design, workflow adoption, and measurement.

- Policy accuracy: Legal / Compliance / Employee Relations

Maintain definitions and standards, confirm reporting pathways, validate escalation guidance, and keep “what happens next” steps current.

- Learning design: L&D (program owner)

Design the learning experience, set rollout and reinforcement cadence, and ensure each module includes practice and feedback.

- Workflow adoption: Talent Acquisition, HRBPs, People Leaders

Embed the standard where decisions happen (debriefs, feedback cycles, meetings), reinforce it through coaching and routines, and reduce drift over time.

- Measurement: People Analytics (with L&D and partners)

Define adoption, process, and outcome signals, pull and interpret trends across sources, and help teams review results without over-attributing impact to a single intervention.

Create a scenario library

Create a small library of approved scenarios that teams can reuse and localize while keeping the underlying standard consistent. For each scenario, store:

- the behavior standard (“what good looks like”),

- the script and role-specific talk track,

- the practice prompt and feedback guidance,

- the reinforcement artifact (checklist, rubric, manager prompt), and

- the signals you’ll track (adoption, process health, outcomes).

Use templates to scale and localize

Templates make governance practical. A shared template or a custom branded version keeps the structure of every approved scenario consistent, so teams can adapt the talk track by role and localize by region without drifting from the standard.

Set an update cadence

Training stays credible when it stays current. Set a simple process for updates:

- Update triggers: policy changes, recurring ER themes, changes in hiring or performance processes, patterns in Voice of Employee data, and shifts in role expectations.

- Review cadence: review higher-risk modules more frequently; review skills modules on a steady cycle; update as needed when triggers arise.

- Change log: document what changed and why, especially for global audiences and regulated environments.

Localize without changing the standard

Localization is most effective when it adapts delivery without changing expectations.

- Adapt language, examples, and cultural context so scenarios feel real.

- Keep the behavioral standard, reporting guidance, and evaluation criteria consistent.

- Route meaning-changing edits through the policy owner to prevent message drift.

Make reinforcement operational

Reinforcement is what keeps standards alive after launch. For each module, pair the video with:

- one artifact used in the workflow (checklist, rubric, notes template), and

- one reinforcement behavior owned by managers or partners (spot-checking notes, running calibration, reinforcing meeting norms).

Putting it into practice

- Pick one high-impact scenario

Choose a moment where standards drift (for example: interview debriefs that slip into “good fit,” meeting interruptions, or a manager’s first response to a report).

- Define the behavior you want instead

Write one observable outcome in plain language (e.g., “Redirect ‘fit’ back to the rubric and evidence before deciding.”).

- Draft the script using the scene flow

Moment → drift → model → practice → feedback → reinforcement.

- Generate a first draft video

Paste your scenario and script into Synthesia’s text-to-video tool to create a version you can review and iterate on.

- Add reinforcement and measurement

Pair the video with one workflow artifact (checklist/rubric) and track one adoption signal, one process signal, and one outcome signal with your partner teams.

Amy Vidor, PhD is a Learning & Development Evangelist at Synthesia, where she researches learning trends and helps organizations apply AI at scale. With 15 years of experience, she has advised companies, governments, and universities on skills.

Frequently asked questions

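What does DEI mean?

DEI stands for diversity, equity, and inclusion. You’ll also see DEIB, which adds belonging, and DEIA, which adds accessibility.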

What’s the difference between diversity, equity, inclusion, belonging, and accessibility?

Diversity is representation. Equity is removing barriers and improving fairness. Inclusion is enabling full participation without bias. Belonging is the felt experience of inclusion. Accessibility focuses on removing barriers for people of all abilities, including through accommodations and inclusive design.

What’s the difference between compliance training and DEI training?

Compliance training sets baseline expectations for policies, reporting, and prohibited conduct. DEI/DEIB/DEIA training is most effective when it builds practical workplace skills, like fair evaluation, inclusive collaboration, and effective intervention in real situations.

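Why use video for training on inclusion and fairness?

Video makes behavior visible. Learners can see what drift looks like in a real moment, such as an interview debrief, and then watch a model response they can repeat. Paired with practice and feedback, video makes those behaviors repeatable, and it can be captioned and localized for global teams.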

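What should inclusive workplace training videos include?

Focus each video on one moment that shapes outcomes. State the behavior change in one sentence, make the standard explicit, briefly show the common failure before modeling the better response, and put a practice prompt at the decision point. Then pair the video with feedback and reinforcement in the workflow, such as a debrief checklist or a manager spot-check.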

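How do you measure whether inclusive workplace training is working?

Measure beyond completion, across three layers: adoption signals (whether people use the standard, such as debrief notes that cite rubric criteria and evidence), process health (whether decisions become more consistent), and outcomes (directional movement in indicators like retention and internal mobility, reviewed over time with partner teams).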

How does Synthesia support accessibility and inclusion in video training?

Video training is only inclusive if people can actually access it. With Synthesia, teams can build accessibility into the workflow by adding closed captions (so learners can follow along with sound off, use captions as an accommodation, or review key phrasing precisely), and by localizing training for global teams through translation and multilingual delivery.