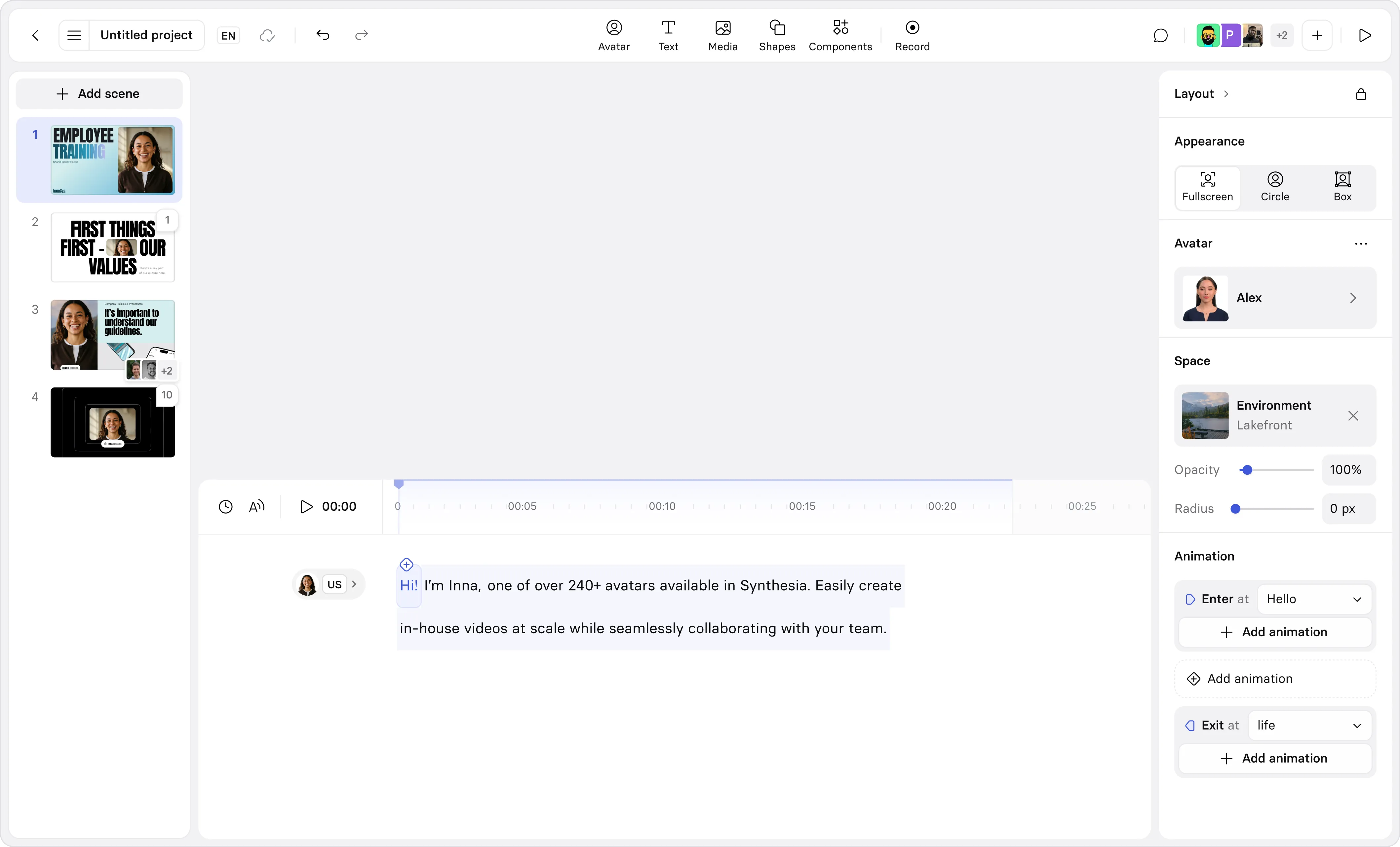

“Any sufficiently advanced technology is indistinguishable from magic,” said the late Arthur C. Clarke. And he was right: throughout history, the most transformative technologies, from the printing press to the camera to the internet, seemed magical and almost otherworldly at the time of their invention.

Today, we’re witnessing seemingly magic technology being developed at a rapid pace: AI is now able to generate images, music, video and even conversations that are incredibly lifelike. We’re not many leaps away from being able to make entire Hollywood films, or anything else we can imagine, on a laptop without the need for any cameras, actors or studios.

The creative possibilities of AI are endless, ranging from individuals' artistic expression to large companies making training and learning content for thousands of employees. Billions of people will be empowered to realize their creative vision without the cost and skills barriers of today.

But as with any other powerful technology, it will also be used by bad actors. AI will amplify the issues we already have with spam, disinformation, fraud and harassment. Reducing this harm is crucial and it’s important that we work together as an industry to combat the threats AI presents.

We believe that the main vectors to reduce harm will be public education, technical solutions and the right regulatory interventions that will allow everyone to benefit from the immense opportunity of AI-generated content while reducing harm caused.

Synthetic Media vs. Deepfakes

Synthetic media is shrouded in myths, making it difficult for regulators to properly address both the opportunities and the threats. So far, the public narrative around AI has focussed on deepfakes, a term generally used to describe the nefarious use of AI to impersonate someone else. That makes sense – it’s a very important topic to address, as this is a real threat that is already causing harm.

But it’s important that deepfakes, which are one particular use of a general technology, don’t become a red herring for the much more fundamental technological shift towards AI-generated content that is underway. By focussing regulation on the specific algorithms used, rather than the intent, we run the risk of raising many more questions than we answer.

For example, many of the cases labeled as deepfakes are not actually AI-generated at all. The fake video of Nancy Pelosi appearing drunk was created by simply slowing down footage with regular video editing. Other deepfakes have been created with traditional Hollywood visual effects technologies that don't use any AI at all, and non-consensual pornography created with simple photo editing tools has been around for decades.

Would we call these examples deepfakes? Are Photoshopped images deepfakes? Is it a deepfake when you use your smartphone to remove a bystander in a family photo? Or when you use a Snap filter on your selfie to alter your appearance?

How do we draw the line between what is and isn’t AI-generated?

Answering these questions becomes increasingly complex when you factor in the myriad useful applications of AI-generated content that are already invisibly present in our daily lives. The fixation on ‘deepfakes’ fails to recognise that these technologies are already all around us and that they will become a fundamental part of many products in the future.

Voice assistants, Photoshop, social media filters, meme apps, visual effects tools and FaceTime calls all use synthetic media technology. For context, Reface.ai, a consumer app, generates 50 million video memes a month; at Synthesia, a B2B product, we generate more than 3,000 videos for our enterprise customers every day. And we’re just scratching the surface.

It is also important to recognize that almost all misuse of deepfake technology today originates not from commercially available products but from open-source projects like DeepFaceLab, which are almost impossible to regulate. In fact, almost all of the largest platforms for AI content creation safeguard their products, either through content moderation or by making creation an on-rails experience.

Regulation

It is ultimately intent, not the technical details, that defines malicious use of any technology, from AI to cars to smartphones. Deepfakes have not created new forms of crime, but they will amplify existing ones. Impersonation, for example, is not a new threat enabled by deepfakes: people have been faking emails, physical letters and documents for centuries.

The wide spectrum of applications and implementations for AI technology makes it very difficult to blanket regulate specific algorithms. We recommend a regulatory approach that focuses on intent but enables harsher penalties if advanced AI manipulation has been used.

Focussing regulation on intent removes the complexity in defining a technology that is and will be ubiquitous. Instead, we should focus on updating our existing legal framework. In the case of AI technology, there are obvious cases of bad intent that are already criminalized today:

- Bullying or harassing

- Discriminating against minorities

- Defrauding individuals or businesses

- Meddling in our democracy

These are not new issues and most countries already have legal frameworks in place.

Unfortunately there are obvious gaps in today's regulation that can make it difficult to prosecute perpetrators. These are the first things we need to fix.

For example, sharing altered nudes of someone is not criminal in the UK today despite clearly being a bad-faith action. For bullying and harassment, some protections exist, but they are hard to enforce. In the US, victims of cyber harassment can pursue both civil and criminal remedies, but they need deep pockets to build a case and must endure a long court case that can be mentally draining.

So, What Should We Do?

- Existing laws need to be updated for the digital age. Regulation can help tackle obvious misuse, but only if we close the gaps and make the laws easy to enforce. We strongly believe that these should focus on intent, rather than attempting to define specific technology or scenarios.

- We believe it is worth considering whether using AI to fake harmful imagery should enhance penalties, in the same way that a hate motivation enhances penalties for existing crimes.

- We believe companies should take reasonable steps to prevent misuse of the technology, such as employing content moderation and identity verification/protection.

The right regulation will help curtail misuse and incentivise companies to safeguard powerful technology. But it will also ensure that powerful AI tools are accessible for the public to use and interact with. Not just for the economic value, but because consuming and creating AI-generated content is ultimately the best way to educate the public at scale.

Every time someone creates a video with Synthesia or makes a meme with Reface it’s one more person, and whomever they share it with, who intrinsically understands how these technologies work – and the more we increase the population-level literacy in AI, the better we are protected against bad faith actors.

Victor Riparbelli is co-founder of Synthesia, launched in 2017 with AI researchers. With a decade in tech entrepreneurship, he combines technical expertise and academic insight to advance AI video, focusing on ethical and responsible innovation.