Launching a new framework for Responsible Practices for Synthetic Media with the Partnership on AI

At Synthesia, our mission is to make video creation easy for everyone: to democratise content creation and realise the potential of video, with our eyes wide open to the important ethical questions surrounding generative AI.

That is why we are proud to be a launch partner of the Partnership on AI's (PAI) Responsible Practices for Synthetic Media, the first industry-wide framework for the ethical and responsible development, creation, and sharing of synthetic media.

After a drafting process of over a year, and the input of more than fifty global institutions, this week we joined the PAI to launch the framework in New York, alongside colleagues from Adobe, BBC, Bumble, CBC/Radio-Canada, D-ID, OpenAI, Respeecher, TikTok, and WITNESS.

Our collective work serves as a guide to devising policies, practices, and technical interventions that interrupt harmful uses of generative AI. It provides guidance to all stakeholders involved in building, creating and publishing AI-generated content, and it will be particularly useful to those building the foundational platforms of the generative AI era.

A framework in practice

The launch of the framework was a good opportunity to take stock of all the safety features we’ve implemented at Synthesia, to ensure we build the safest and most trusted AI video platform on the market. In the following paragraphs we go through the main themes of PAI’s framework and discuss our experience building ethics into every product and business decision.

Business model alignment

The first and maybe most important aspect is aligning business models with ethical goals. Different business models provide different incentives. Our enterprise SaaS model means that trust and sustainability are critical to our success as a platform. We aren’t incentivised in the same way as an engagement-based ad model, for example.

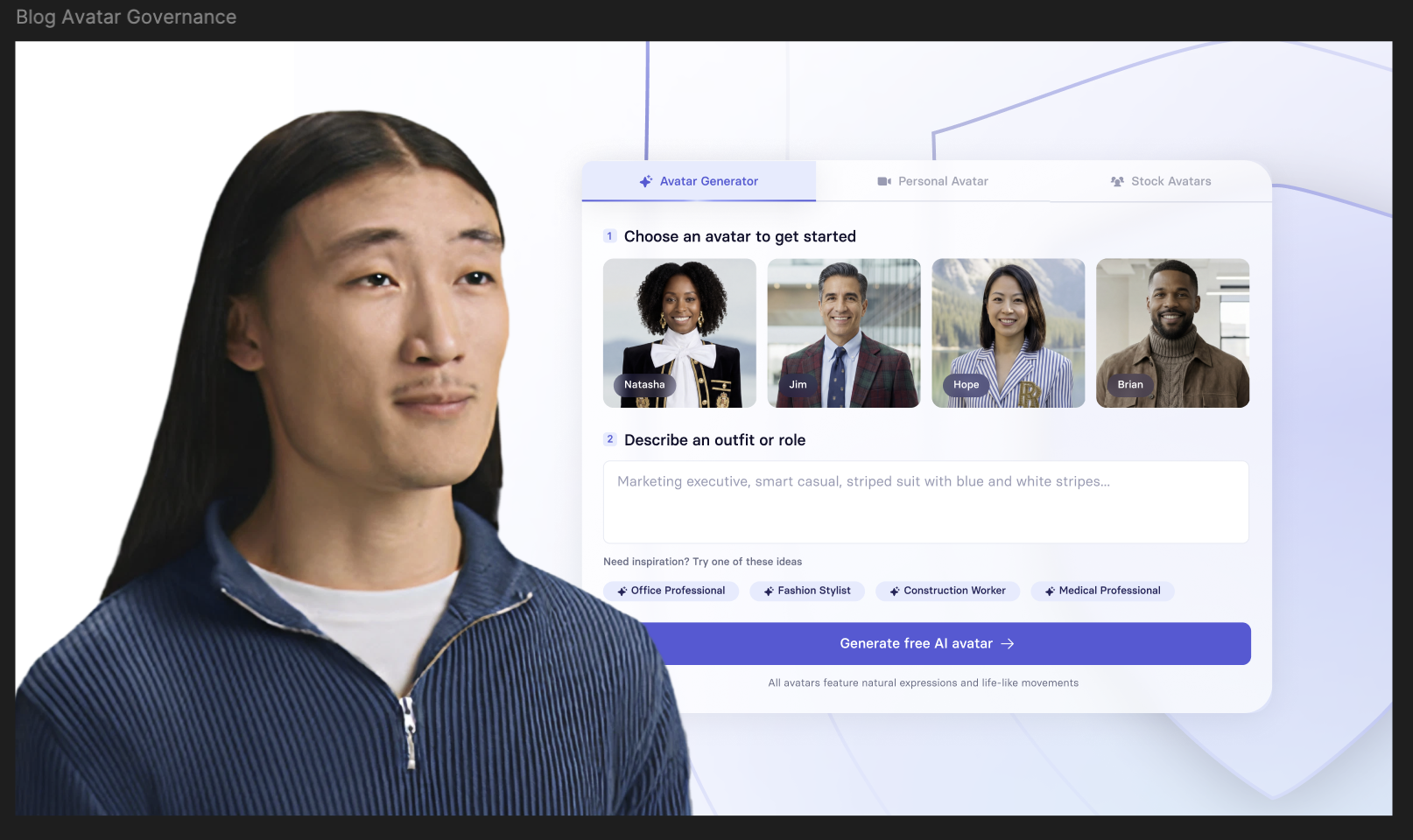

Consent

PAI’s framework provides specific recommendations for informed consent, both for creators and those depicted. Our approach at Synthesia has been to only create an AI avatar with the human’s explicit consent. This principle has become core to our culture, with employees referring to it daily when making decisions.

In our experience, the best way to implement such principles is by building gatekeeper processes around them. For example, we only start processing a custom avatar after a KYC-like check, to ensure that the person submitting the request is the person in the footage submitted.
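To make the idea of a gatekeeper process concrete, here is a minimal sketch of how such a gate might look in code. It is illustrative only and does not describe Synthesia's actual systems: the `AvatarRequest` fields, the status names, and the `start_processing` function are all hypothetical, and the identity check itself is assumed to happen elsewhere.

```python
from dataclasses import dataclass
from enum import Enum


class RequestStatus(Enum):
    PENDING_VERIFICATION = "pending_verification"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class AvatarRequest:
    # All fields are hypothetical, chosen only to illustrate the gate.
    requester_id: str            # identity of the person submitting the request
    footage_subject_id: str      # identity of the person shown in the footage
    consent_given: bool          # explicit consent from the person depicted
    identity_verified: bool = False  # result of an assumed KYC-like check


def review_request(request: AvatarRequest) -> RequestStatus:
    """Decide whether a custom avatar request may proceed."""
    if not request.identity_verified:
        # Nothing is processed until the identity check has completed.
        return RequestStatus.PENDING_VERIFICATION
    if not request.consent_given:
        return RequestStatus.REJECTED
    if request.requester_id != request.footage_subject_id:
        # The requester must be the person who appears in the footage.
        return RequestStatus.REJECTED
    return RequestStatus.APPROVED


def start_processing(request: AvatarRequest) -> str:
    """Refuse to start any work unless the gate has approved the request."""
    status = review_request(request)
    if status is not RequestStatus.APPROVED:
        raise PermissionError(f"request not approved: {status.value}")
    return f"processing avatar for {request.requester_id}"
```

The point of the design is that the processing step cannot be reached without passing the gate, so the consent principle is enforced by the workflow rather than left to individual judgement.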

Preventing harm

A key consideration of PAI’s framework is preventing the creation and distribution of content that might cause harm. We take a stricter approach here than most, both in terms of access and content moderation.

We offer an ‘on-rails’ experience, which enables users to create content within the constraints we provide - customers don’t get access to the underlying AI technology.

Each video produced with Synthesia undergoes our moderation process, which combines automated flagging with human review. We strictly enforce the list of prohibited use cases in our Terms of Service, and we don’t allow videos that contain any elements of harassment, deception, discrimination, or harm.

We’ve also decided to restrict the creation of news-like content on our platform unless we have verified the credentials of the customer. When it comes to political or religious content, we request that our clients use a custom avatar to express their opinions through their own likeness. Although no moderation system is perfect, we're proud of our efforts so far.
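As a rough illustration, the rules described above could be expressed as a simple decision function. This is a sketch under stated assumptions, not our production system: the label names, the `VideoSubmission` fields, and the three outcomes are hypothetical, and the labels are assumed to come from some automated classifier that is not shown here.

```python
from dataclasses import dataclass, field

# Hypothetical label sets, mirroring the categories named in the text.
PROHIBITED = frozenset({"harassment", "deception", "discrimination", "harm"})
CUSTOM_AVATAR_ONLY = frozenset({"political", "religious"})


@dataclass
class VideoSubmission:
    # Labels produced by an assumed automated flagging step.
    labels: set = field(default_factory=set)
    uses_custom_avatar: bool = False
    credentials_verified: bool = False


def moderate(video: VideoSubmission) -> str:
    """Return 'approve', 'reject', or 'needs_human_review'."""
    if video.labels & PROHIBITED:
        # Assumption: automated flags are escalated to a human reviewer
        # rather than acted on automatically.
        return "needs_human_review"
    if "news" in video.labels and not video.credentials_verified:
        # News-like content requires verified customer credentials.
        return "reject"
    if video.labels & CUSTOM_AVATAR_ONLY and not video.uses_custom_avatar:
        # Political or religious opinions must use the client's own likeness.
        return "reject"
    return "approve"
```

For example, a video labelled `political` that uses a stock avatar would be rejected in this sketch, while the same video made with the client's own custom avatar would be approved.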

Some consider our stance too draconian, but we believe it is the right approach to building a healthy ecosystem. We are proud to be one of the few creation tools that actively moderate content, a role historically left to social media platforms alone.

For our enterprise clients, we provide further brand safety features, such as premium avatars, available only on an enterprise plan. Putting the PAI framework into practice comes down to individual decisions like these, which together add up to a safe platform.

Transparency and disclosure

Direct and indirect disclosure is another key theme in PAI’s framework. In our experience, the efficacy of these solutions depends on the context. In certain cases, such as our free demos, we apply watermarking and clear disclosure that the video was generated using Synthesia. However, transparency needs to be addressed across not only creation tools, but also distribution.

Technical solutions and bad-faith actors are in an eternal cat-and-mouse game, with digital fingerprinting techniques trying to keep up with novel ways of removing the fingerprints.

Technical solutions are definitely part of the answer, yet our view is that the most powerful levers here are public education and industry-wide collaboration, so that creation tools and distribution platforms work together to ensure platform safety. This is the main reason why we have been working with organisations like the Partnership on AI: systemic issues require system-wide collaboration.

Never truly done… Our plans for the future

In the same way that industry guidelines will continue to develop, so will our approach at Synthesia.

We are expanding our approach to add further hurdles to misuse - for example, we will verify the credentials of clients who want to create news content using our technology - and we will continue investing in our Trust and Safety Ops team.

Our work with the PAI is indicative of our approach of collaborating with expert partners wherever we can. We’re excited about the potential of partnerships like these, where we can contribute to curbing the creation and spread of abusive content through better collaboration.

We recognise that collectively we are at the forefront of a rapid evolution in synthetic media and AI governance, and we are determined to rise to that challenge and play a pioneering role in championing its responsible use. Watch this space for more.

Victor Riparbelli is co-founder of Synthesia, launched in 2017 with AI researchers. With a decade in tech entrepreneurship, he combines technical expertise and academic insight to advance AI video, focusing on ethical and responsible innovation.