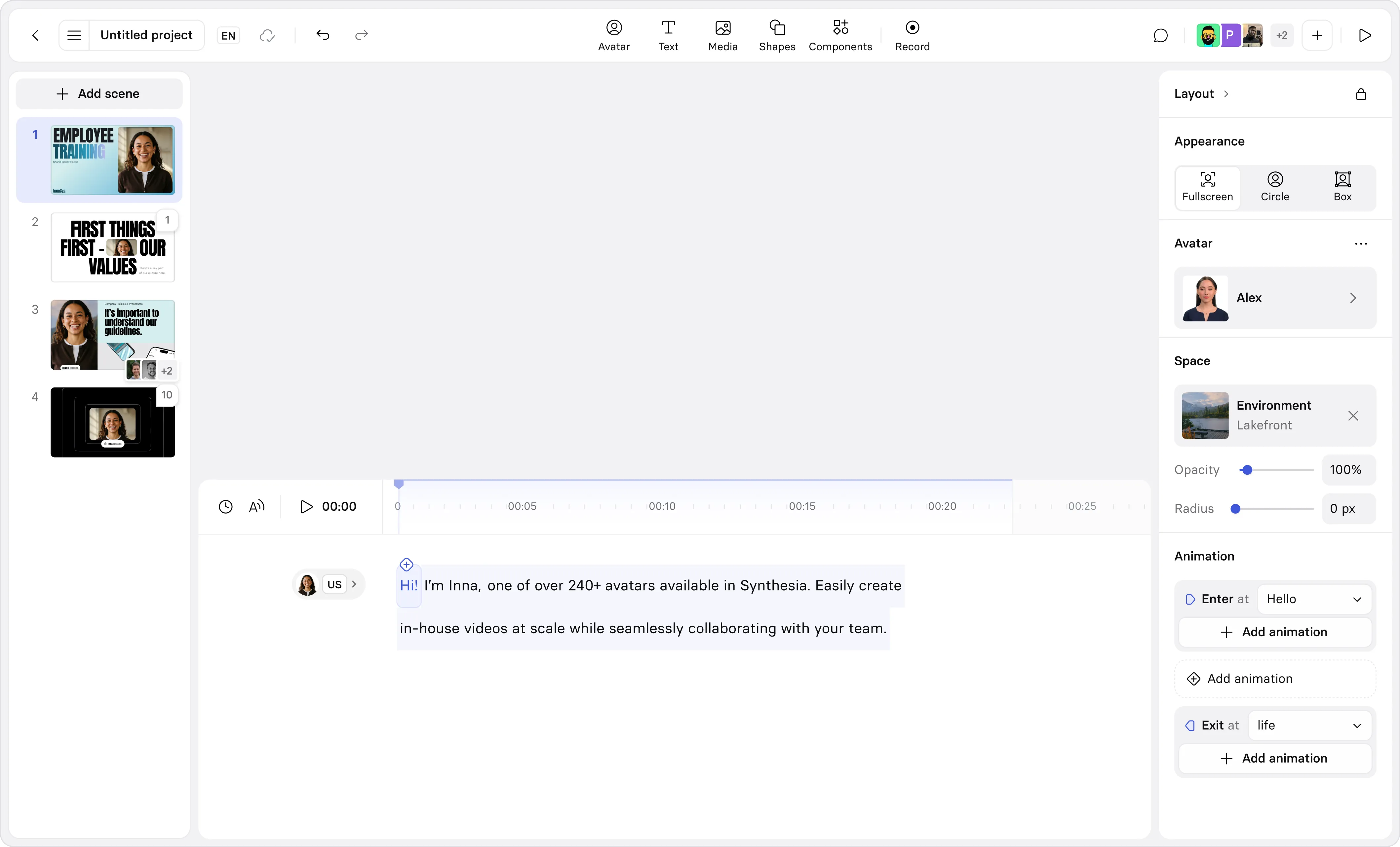


At the end of a manager development program I facilitated, our team would hand out surveys and cupcakes.

The surveys asked questions like, "What was most useful?" and "What would have made this program better?"

We knew if we didn't capture this feedback right then, we wouldn't get it all. If someone needed to catch a flight or duck out for a meeting, we could guarantee we wouldn't get their feedback (despite repeated follow-up pleas over Slack).

The survey was a snapshot of participants' sentiments. It told us if people enjoyed the learning experience. And they did, overwhelmingly. But we struggled to connect that development program with other data.

Over time, we tried to close this measurement gap. But the reality was our organization didn't have the data maturity to make this possible. As a scale-up, distributed around the globe, we had ever-changing systems (and teams). Data hygiene was a work-in-progress.

Truthfully, we never got the evidence we needed to prove the program's impact. That's what pushed us to figure out what good enough measurement looks like.

How to calculate training ROI

Before I share what good enough measurement looks like, I want to align on how to calculate training ROI.

The simplest formula to remember for calculating training ROI is:

ROI (%) = ((Total Benefits − Total Costs) ÷ Total Costs) × 100

Where Total Benefits are measured through indicators such as:

- Productivity gains (e.g., time-to-proficiency improvements)

- Reduced errors or rework

- Faster time-to-productivity

And Total Costs include:

- Direct spend (e.g., vendor fees)

- Internal labor

- Learner time

So, if you were running an onboarding program where Total Benefits = $200,000 and Total Costs = $105,000, your ROI would be 90.5%.

(I do a deeper dive into how to calculate the costs for this example in this post on building an L&D budget.)
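If it helps to see the arithmetic, here is a minimal Python sketch of the formula applied to the onboarding example. The function name `training_roi` is just an illustrative choice, and the guard against zero costs is an assumption added for safety rather than part of the formula itself.

```python
def training_roi(total_benefits: float, total_costs: float) -> float:
    """Return training ROI as a percentage of total costs."""
    if total_costs <= 0:
        # Assumption: a program with no recorded cost has no meaningful ROI.
        raise ValueError("total_costs must be greater than zero")
    return (total_benefits - total_costs) / total_costs * 100


# Onboarding example from the text: $200,000 in benefits, $105,000 in costs.
roi = training_roi(200_000, 105_000)
print(f"ROI: {roi:.1f}%")  # ROI: 90.5%
```

The net benefit here is $95,000, and dividing that by the $105,000 cost gives roughly 0.905, or 90.5%.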

What is a good training ROI

Even when a metric moves in the right direction, separating training's contribution from everything else happening in the business is genuinely difficult. A re-org, a market shift, or a new manager can impact the ROI.

I should warn you, most of the time the ROI is not going to look that great. Don't panic if your percentage is much, much lower.

And that's partially attributable to the vague nature of Total Benefits. If you read the sample indicators above and thought, "Wait, how do I translate reduced errors or time-to-productivity into a number?" — you're not alone.

It is a LOT of work to take a learning program's objective and translate it into business KPIs you can measure with confidence. There’s also little research validating what a good training ROI is. I’ve seen claims that 25% is a good ROI.

The reality is that ROI varies based on program complexity, cost inputs, and how benefits are measured. That's why right-sizing your measurement approach to the training matters.

If you're running a robust onboarding program every two weeks, you're likely investing substantial resources in the delivery. It is worth validating that the program is meeting the learning objectives you've identified, using a comparably robust method like this ROI formula. It helps you make the business case for the program to your stakeholders.

If you're piloting a program with one team and the focus is feedback, you likely don't need this type of calculation. What you need is something good enough.

What “good enough” measurement looks like

Good enough measurement is something that gives you confidence to make a decision. That means you have enough evidence to decide whether to keep (and perhaps scale), revise, or stop a training program.

Here are two approaches to good enough measurement.

- Snapshot measurement

If this is a low-cost, low-risk, low-visibility program where you need quick inputs to decide whether or not something is landing, follow this approach.

Measure reach (who participated and who didn't complete it), one signal that shows understanding, and one indicator that the desired behavioral change is starting to show up at work.

The goal with a snapshot measurement is to say the training landed, or it didn't, based on repeatable inputs.

Example: You pilot a compliance policy refresher with your Legal team. Nine of the 10 team members completed the training over a two-week period, and the average assessment score was 84%. The department lead confirms they're receiving fewer questions related to this policy.
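The compliance example above can be sketched as a small record of the three inputs. This is only one way to structure it: the `Snapshot` class, the individual scores, and the 80% thresholds are all hypothetical. Only the 9-of-10 completion count, the 84% average, and the department lead's observation come from the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Snapshot:
    enrolled: int
    completed: int
    scores: list[float]    # one assessment score per completer, in percent
    behavior_signal: bool  # e.g. the department lead reports fewer policy questions

    @property
    def completion_rate(self) -> float:
        # Reach: share of enrolled people who finished, in percent.
        return self.completed * 100 / self.enrolled

    @property
    def average_score(self) -> float:
        # Understanding: mean assessment score across completers.
        return mean(self.scores)

    def landed(self, min_completion: float = 80.0, min_score: float = 80.0) -> bool:
        # Hypothetical thresholds; agree on your own before collecting data.
        return (
            self.completion_rate >= min_completion
            and self.average_score >= min_score
            and self.behavior_signal
        )


# Scores are made up for illustration and chosen to average 84.
pilot = Snapshot(
    enrolled=10,
    completed=9,
    scores=[78, 80, 82, 84, 84, 84, 86, 88, 90],
    behavior_signal=True,
)
print(f"Completion: {pilot.completion_rate:.0f}%, average score: {pilot.average_score:.0f}%")
print("Landed" if pilot.landed() else "Did not land")
```

Fixing the thresholds up front is what makes the result repeatable: the next pilot is judged against the same bar.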

- Impact measurement

Use this when you can define one observable behavior the training program is meant to change, and observe that behavior through one workflow-related signal and one people-related signal.

The goal with impact measurement is to be able to report something like this: "We expected behavior X to change; we see evidence it did (or didn't); metric Y moved in the expected direction (or didn't). Here's what we'll adjust next."

Example: You pilot a feedback training program with 50 managers. You conduct listening sessions with HR Business Partners to ask about feedback-related escalations, and conduct a pulse survey of their direct reports. You find the training isn't driving the change you planned for, so you revise it before rolling out to the full organization.

How to pick the right signals

A good signal is observable evidence that the behavioral change you're trying to influence through training is showing up at work.

It is repeatable, stable, and actionable. You can collect it consistently, compare it over time, and do something with what it tells you.

Some observable, repeatable, and stable signals I've seen include:

How Avetta measured training ROI

Avetta, a global leader in supply chain risk management, needed scalable training to support 150 support agents during onboarding and with just-in-time knowledge for customer interactions.

They measured ROI of their video-based training by focusing on three metrics: ramp-to-proficiency, call handling time, and interaction quality. These three metrics vary in complexity, but all met the bar: repeatable, stable, and actionable. (Read the full case study below.)

Over time, if your training evolves into a high-cost, high-visibility, high-risk initiative with clear benchmarks you can track and measure, you can transition to using the ROI formula.

You'll know it's time when you can document the assumptions behind your ROI (how you estimated costs, and which plausible explanations of the changes you considered) and offer a range from conservative to optimistic because you've documented how the inputs vary.
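One way to produce that range is to run the same ROI formula over a few documented scenarios. The sketch below assumes three scenarios. The "expected" figures reuse the onboarding example from earlier, while the conservative and optimistic inputs are hypothetical placeholders you would replace with your own documented estimates.

```python
def training_roi(total_benefits: float, total_costs: float) -> float:
    """Return training ROI as a percentage of total costs."""
    if total_costs <= 0:
        raise ValueError("total_costs must be greater than zero")
    return (total_benefits - total_costs) / total_costs * 100


# Hypothetical inputs: only the "expected" row comes from the onboarding example.
scenarios = {
    "conservative": {"benefits": 150_000, "costs": 115_000},
    "expected": {"benefits": 200_000, "costs": 105_000},
    "optimistic": {"benefits": 240_000, "costs": 100_000},
}

roi_range = {
    name: training_roi(inputs["benefits"], inputs["costs"])
    for name, inputs in scenarios.items()
}

for name, roi in roi_range.items():
    print(f"{name:>12}: {roi:.1f}%")
```

Keeping the inputs for each scenario next to the result makes it easier to show leadership which assumption drives the gap between the low and high ends.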

How to present training ROI to leadership

Odds are, you need to share the results of your training ROI with someone in leadership. You don't need a fancy slide deck, and you don't need to reinvent the format every time. Create a template you can repurpose for every program.

A strong executive summary should be reviewable in a few minutes, cover what changed, what you invested, and any assumptions, and always end with a decision and a next step.

Consistency makes it easier for leadership to review quickly and know where to focus their questions or feedback.

Assumptions log

If you're using the training ROI formula I shared above, I recommend keeping an assumptions log to document the decisions you made along the way, and any judgment calls about data. This should be something you can append to a summary (if asked) so that someone else could follow your reasoning.

How to sustainably measure training ROI

I know how frustrating it can be to improve measurement when you're also pressured to continuously deliver. It might be easy to conduct a snapshot measurement for one program, but when you try to scale that across 100 different trainings, it can be overwhelming.

Sustainable measurement is about consistency and accountability. Wherever possible, distribute the work among your team. Measurement is everyone's job in L&D. Use AI to help you automate data collection and analysis, or to set up reminders.

The goal is evidence-based decisions. The cupcakes are optional.

Amy Vidor, PhD is a Learning & Development Evangelist at Synthesia, where she researches learning trends and helps organizations apply AI at scale. With 15 years of experience, she has advised companies, governments, and universities on skills.

Frequently asked questions

Do I need ROI for every training?

No. ROI is a high-effort measurement standard that only makes sense for certain programs. For most training, a lighter measurement approach is more sustainable and still gives leaders enough signal to decide what to do next.

How do I choose the right measurement approach?

Choose based on stakes and measurability. If the program is high-cost or high-visibility and you can track a business metric with a baseline, use an ROI-style approach. If outcomes are real but harder to monetize, measure behavior change and one business-adjacent metric. If you need a fast read, measure reach and an early signal you can collect consistently.

What is the Kirkpatrick model for training evaluation?

The Kirkpatrick model evaluates training across four levels: reaction, learning, behavior, and results. Most teams measure level 1 and stop there. The model's value is in pushing measurement toward levels 3 and 4, where the evidence is harder to collect but more meaningful to leadership. For a detailed breakdown, see our L&D budget guide.

What is the Phillips ROI methodology?

The Phillips method extends Kirkpatrick by adding a fifth level: return on investment. It converts business impact into monetary value, compares it to the total cost of the program, and expresses the result as a percentage. It also includes a step for isolating the program's contribution from other factors, which is what makes the result defensible to Finance. The Phillips ROI methodology is most appropriate for high-cost or strategically visible programs. For a worked example, see our L&D budget guide.

What is a good ROI for a training program?

It depends on the program type and what you included in your cost calculation. An onboarding program that accounts for learner time, SME input, and tooling will show a different ROI than a compliance refresher measured on completion alone.

According to the Phillips ROI Institute, organizations typically apply full ROI analysis to 5–10% of their programs: the high-cost, high-visibility ones where a quantified business case is expected. For those programs, the goal is a credible estimate based on consistent inputs and documented assumptions. That's what makes it reviewable.

What if I can't confidently say training caused the change?

That's common. Don't force certainty. State what else could have contributed, describe how you estimated training's contribution if you did, and use a range when inputs are uncertain so the summary stays credible.