
Have you sat through a training that felt like a waste of time? The kind where your attention starts to fade because the content feels generic or the facilitation is less than inspiring.

As an L&D practitioner, that's probably the last thing you want people to say about your training. You want to design engaging experiences that capture attention and, most importantly, apply to real work.

What makes training engaging?

Training is engaging when it earns attention through relevance, invites participation, and supports follow-through in real work.

If you're thinking that definition is pretty vague, you're right. You can design a compliance course around a realistic scenario and ask learners to make a decision, and it can still struggle to hold attention.

That's because interactivity is often conflated with engagement.

Interactivity is about how the learning experience is designed. It shows up in the structure. Are there moments where someone needs to respond, make a choice, or work through a scenario? Are they doing something as they go, or just watching?

Engagement is about what's happening in someone's head while they go through it. It's the degree to which they're actually thinking about and applying what they're learning. You can add clicks and choices to a course and still end up with something that feels passive if those moments don't require real thought.

9 tactics to make training more engaging

Engagement tactics vary by training medium. My strategy for facilitating a small group workshop looks different from designing an async training video for 1,000 account executives. That's because I'm designing with different constraints. With a small group workshop, I can read the room and pivot if something isn't landing. (Benjamin Zander refers to this as seeing if the "eyes are shining"; you can tell when someone's eyes are glazing over.) With an async video, I know I'm competing against message pings, texts, maybe even a loud coworker or demanding pet.

So, the way I want to share engagement tactics is by focusing on three things that matter across all mediums: attention, thinking, and application.

Training often starts like a novel, with exposition. As a book lover, I'm all about exposition, but as an L&D practitioner, I know too much will lose people. If I spend 15 minutes of an hour session explaining why we're here, I've lost the room.

If attention gets people in, thinking is what makes them commit. You need to move beyond activity, like softball questions, and toward learning that challenges people to think critically.

Unfortunately, you can hold attention and encourage thinking and still fall short of your objective. You see this after the session ends, when people go back to work and nothing really changes. To close that gap, training has to be built around application in real work.

Here are tactics for designing more engaging training, starting with capturing attention.

1. Open with purpose

Open with why the session matters before expanding into background. In a live session, that might mean naming the goal for the time together and the decision or skill people will leave with. In self-paced content, it might mean a clear outcome statement or opening scenario. Either way, get right into it.

2. Show what's at stake

After you've identified the purpose, show what's at stake. I call this the 'so what.' Why should someone spend their precious time in this training? What's in it for them?

The 'so what' should be measurable and tied to real impact, both for the business and for the learner. For instance: "This training will help you reduce customer cancellations by improving how you handle product dissatisfaction. That directly affects your performance review." Be a compelling marketer.

3. Weave in real examples

To keep attention focused, you need to design content with real examples. Hypotheticals don't cut it. That means doing discovery first: a pre-training survey or a few listening sessions with team leads to surface the decisions people are actually struggling with and the situations they encounter most. (AI can help you spot patterns in what you find.)

From there, build those patterns into the training. In a live session, ask people to share an example from their own experience before introducing new content. In async training, add reflection prompts that connect what learners are seeing to something real in their work. The goal is to help people see themselves in the training.

Once you have the foundations for engaging training, you can start designing for deeper thinking. You don't need to use all of these tactics in every training. Instead, think of this as a repertoire you can draw from based on the training's format, audience, and goal.

4. Curate scenarios

Scenario-based learning requires participants to make decisions in a simulated environment. The goal is verisimilitude: removing the risk, not the reality. You want a safe space for people to experiment and perhaps fail without those failures affecting the business.

That means the scenario has to be believable. Use the specific situations, decisions, and friction points you surfaced during discovery. Generic scenarios don't create real thinking, but nuance does. If a manager is navigating a performance conversation with a resistant employee, the employee needs to push back like a person would. If the conflict resolves too easily, then no one is learning.

5. Ask questions that require judgment

Depending on where you were educated, you may feel immense pressure to ask questions that are easily answered. You don't want people to be discouraged from raising their hand or responding to a prompt, so perhaps you start a session with softball questions (ones with a clear answer based on something you just said or something obvious).

Those have a place, especially for warm-ups or knowledge checks. But training should dig deeper by asking questions that have real tradeoffs. Consider a scenario built around a layoff decision: the business case is clear, but the human impact is real, the team dynamics are complicated, and the manager has incomplete information. A question like 'what would you do?' in that context forces people to weigh competing priorities.

When you're designing, think of the scenario as the container, and the tough questions or decisions inside as what make people think. Build for the pause, and embrace the silence, even if someone always seems ready with their hand up.

6. Let peers do the teaching

Some of the most effective training is designed around peers sharing knowledge. A facilitated conversation where you're guiding the room, but peers are doing the teaching, can be more powerful than any slide deck. Think lessons learned after a successful product launch, or structured sharing after a team returns from a conference.

Social learning scales across formats: breakout groups, paired discussions, and structured reflection in live sessions; discussion prompts and manager debriefs in async training.

When you design, ask yourself: where does peer experience belong here? Sometimes it's a moment. Sometimes it's the whole session.

Designing for thinking gets people engaged, but engagement alone doesn't change behavior. The final piece is making sure the training connects to how people work.

7. Do less, change more

Most training tries to accomplish too much, and as a result, changes nothing. It's hard to cut back: there's always more context to add, more ground to cover. But the discipline of narrowing scope is what makes training effective.

Identify one behavioral change you want to make with the training and design around it. Nothing else.

Say you work in manufacturing and run a monthly training series. This month, after speaking with team leads, you learn there's one issue that keeps coming up. It's simple, but its impact reverberates throughout operations. So you design a targeted five-minute training focused on that precise issue. That's it.

8. Personalize at scale

One of the hardest parts of learning design has always been personalization at scale. You may be designing for employees who share the same role but bring different industry experience, speak different languages, or have different cultural touch points. That means your examples need to adapt, and so do your assumptions about shared knowledge.

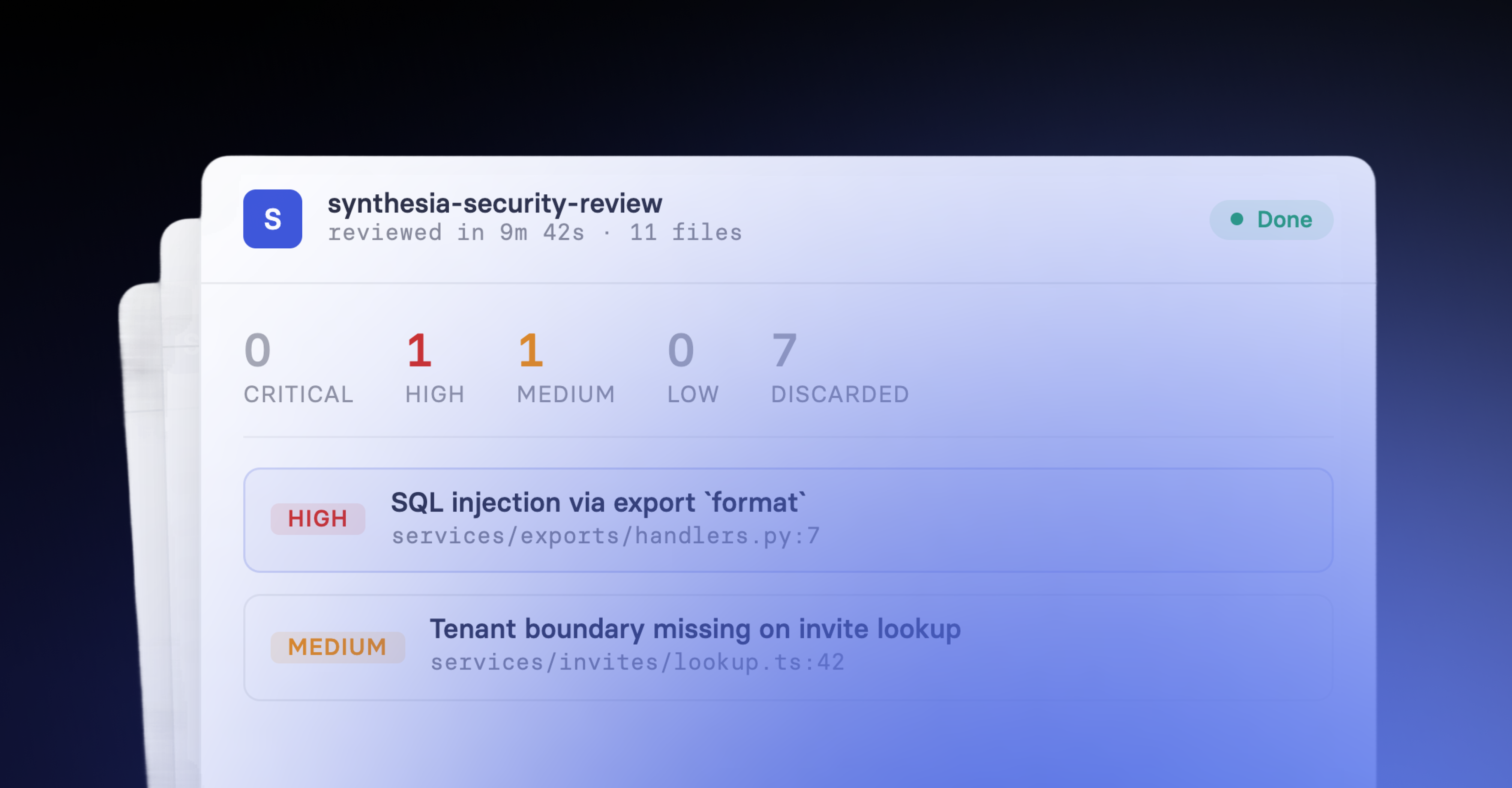

AI is making this more feasible. Localizing content no longer requires a third-party vendor, though a native speaker for quality review is still worth the investment. AI also makes it easier to identify patterns in how different audiences engage with content, so scenarios can be varied and adapted.

To illustrate what this can look like, consider Justdiggit, an environmental education organization working across Sub-Saharan Africa. They teach farmers and pastoralists how to capture rainwater and reduce erosion.

Initially, their approach relied on in-person trainers, which limited how far they could scale. To reach more people, they created training videos and localized them into Swahili using AI, making it possible for Tanzanian farmers to access the content directly on their smartphones.

As they expanded into Kenya, they adapted the language again to reflect regional dialects. The core training remained the same, but the delivery evolved to meet people where they work.

9. Deliver learning in the flow of work

Josh Bersin coined 'learning in the flow of work' in 2018 to describe employees getting the information they need, when they need it, without switching contexts. For that to work at scale, training has to be modular. That means short, focused assets that can be surfaced at the moment of need rather than consumed in one sitting.

40% of teams are already using AI search and knowledge assistants to deliver on this. With AI, the gap between training and application is closing.

Consider a new sales rep preparing for a difficult renewal conversation. Before the call, they search in natural language: 'how do I handle a customer who wants to cancel?' and get the right guidance immediately from their organization's vetted knowledge base. Whatever they need (a video, a course, a checklist), right when they need it.

When learning shows up where work happens, engagement stops being something you design around and starts being something that happens naturally. When learning is always accessible, people opt into it.

How to make training more engaging by use case

The tactics I shared work across delivery mediums and use cases. That being said, how you apply them varies based on the context.

And sometimes the engagement problem is the format. A quarterly sales enablement session might be well designed, but if it's scheduled in the middle of client meeting availability, people won't attend, and those who do are already half-distracted. So you redistribute the content as training videos or a short course they can go through between calls.

To illustrate, here are four common training scenarios, each mapped to a frequent engagement problem and how to address it.

How to measure if training is engaging

Now that you've done all this work on your training, how do you measure if it's having the intended impact? How do you know if it's really more engaging?

I recommend creating a baseline first. What were you doing before, and how were you measuring it? Once you have that picture, you can compare it against what you're seeing after the changes. Depending on the tactics you're trying, here are some things to observe or look for in the data.

Attention

Early drop-off signals that the purpose or context isn't clear:

- Do people stay with the session or drift early?

- Do questions taper off, or do participants drop out?

- In self-paced content, where do people skip or stop?
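If your platform lets you export where each learner stopped, a rough sketch like the one below can show where attention falls away. It is a minimal illustration, not a feature of any particular tool: the input format (one number per learner, giving the last segment they reached) and the function names `retention_by_segment` and `steepest_drop` are assumptions made for this example.

```python
from collections import Counter


def retention_by_segment(last_segment_reached, num_segments):
    """Return the share of learners still present at the start of each segment.

    last_segment_reached: one 0-based segment index per learner, marking the
    last segment that learner reached (hypothetical export format).
    """
    total = len(last_segment_reached)
    if total == 0:
        return []
    stopped_at = Counter(last_segment_reached)
    retention = []
    remaining = total
    for segment in range(num_segments):
        retention.append(remaining / total)
        # Learners whose last segment was this one are gone before the next.
        remaining -= stopped_at.get(segment, 0)
    return retention


def steepest_drop(retention):
    """Return the index of the segment after which the most learners left."""
    drops = [retention[i] - retention[i + 1] for i in range(len(retention) - 1)]
    if not drops:
        return None
    return max(range(len(drops)), key=drops.__getitem__)


# Ten learners, four segments: three stopped during the opening segment.
stops = [0, 0, 0, 1, 3, 3, 3, 3, 3, 3]
retention = retention_by_segment(stops, 4)
print(retention)                 # [1.0, 0.7, 0.6, 0.6]
print(steepest_drop(retention))  # 0
```

In this made-up data, the biggest loss comes right after the opening segment, which is the pattern to look for when the purpose or context isn't clear.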

Thinking

Fast, uniform responses usually signal low effort. Variation and hesitation signal real thinking:

- Are responses immediate, or do people pause and consider?

- Do answers vary, or does everyone converge on the same obvious choice?

- In discussions, are people explaining their reasoning or just giving answers?
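For polls or scenario questions, you can put rough numbers on "fast and uniform" versus "varied and considered." The sketch below assumes you can export each response as an answer plus the seconds it took; that format and the `response_summary` function are hypothetical, and the numbers are only a prompt for closer observation, not a verdict on anyone's thinking.

```python
from collections import Counter
from statistics import median


def response_summary(responses):
    """Summarize how uniform and how fast a set of responses was.

    responses: list of (answer, seconds_to_answer) tuples
    (hypothetical export format).
    """
    if not responses:
        raise ValueError("responses must not be empty")
    answers = [answer for answer, _ in responses]
    times = [seconds for _, seconds in responses]
    top_answer, top_count = Counter(answers).most_common(1)[0]
    return {
        "top_answer": top_answer,
        # 1.0 means everyone converged on the same choice.
        "top_answer_share": top_count / len(answers),
        "median_seconds": median(times),
    }


quick_and_uniform = [("A", 3), ("A", 4), ("A", 2), ("A", 5)]
slow_and_varied = [("A", 20), ("B", 35), ("C", 15), ("A", 40)]

print(response_summary(quick_and_uniform))
# {'top_answer': 'A', 'top_answer_share': 1.0, 'median_seconds': 3.5}
print(response_summary(slow_and_varied))
# {'top_answer': 'A', 'top_answer_share': 0.5, 'median_seconds': 27.5}
```

A high top-answer share combined with a short median time suggests the question had an obvious answer. Compare these figures against your baseline rather than against a fixed threshold.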

Application

If training is working, it shows up in how work gets done:

- Do people refer back to the material during real work?

- Do the same questions come up again after training?

- Do decisions become more consistent over time?

Across all formats, the question is the same: is the training changing how people think and act in their day-to-day work?

If you're unsure where to start, identify one place where the content you're delivering isn't meeting expectations. Redesign that moment using the tactics I've shared. Then see if your revisions change engagement based on your data or observations.

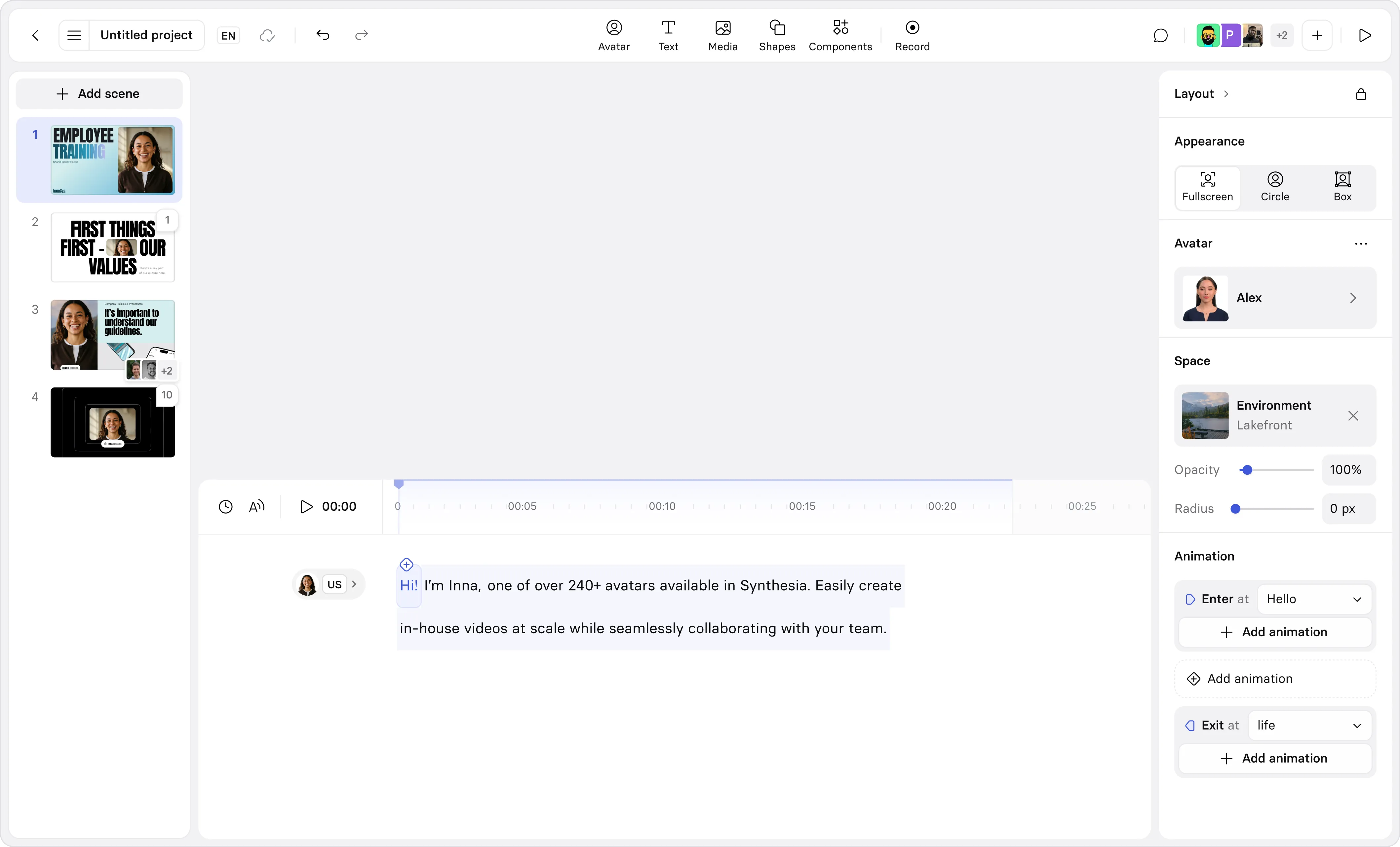

And if that redesign suggests video may be a better medium, try uploading your current content into our text to video tool or start with this template.

Amy Vidor, PhD, is a Learning & Development Evangelist at Synthesia, where she researches learning trends and helps organizations apply AI at scale. With 15 years of experience, she has advised companies, governments, and universities on skills.

Frequently asked questions

What makes training engaging?

Training is engaging when it earns attention, requires thinking, and supports application in real work. That means people understand why the training matters, are asked to make decisions or interpret situations, and can connect what they've learned to their day-to-day work.

Engagement comes from cognitive involvement. Moving through content or answering simple questions may create activity, but it doesn't guarantee learning.

Does interactivity improve training engagement?

Interactivity can improve engagement, but only when it increases the level of thinking required. A poll or branching scenario is effective when it asks people to make a real decision with real consequences.

When the correct answer is clear, interaction creates activity without deeper processing. The impact comes from the quality of the question, not whether an interaction exists.

How can I make compliance training more engaging?

Compliance training is often dull because companies outsource it to third-party vendors whose content meets regulatory requirements and little else. That's a problem, because compliance is where the stakes are highest for employee wellbeing, safety, and organizational security.

There is room to improve it even within regulatory constraints. Replace hypotheticals with scenarios drawn from real situations your workforce actually faces. The more specific the scenario, the more believable it is, and the more likely people are to engage with it. Chunking content into shorter segments and using storytelling to make consequences feel real will also help.

And if all else fails, turn it into a competition. Sometimes people need motivation to engage, and leaderboards or scenario-based scoring can provide that push.

How do you make virtual training more engaging?

Making virtual training more engaging requires more than polls and breakout rooms. In fact, forcing participation before psychological safety is established can work against you.

Start with your work culture. If your organization operates with cameras on as a norm, you can set that expectation in virtual training. If not, don't fight it. Focus instead on designing for engagement in ways that don't require it. A chat feature can surface opinions from people who wouldn't speak up in a group. Sharing materials in advance gives those who benefit from preparation time to come in ready.

The neuroscience is worth keeping in mind here. Stories synchronize brains, and engagement tightens that synchronization. That means the quality of your facilitation and the relevance of your scenarios matter more in a virtual environment, where you're competing with every other tab on someone's screen.

Consider fun breaks. Encouraging people to stand up and move isn't a gimmick: movement supports cognitive processing. If someone is listening while stretching or knitting, they're still engaged.

Where you do use breakout rooms, consider putting existing teams together rather than using random groups. Recruit people in advance to lead and report back.

And remember, a little humor and lightness go a long way in a virtual room.

Are training videos more engaging than slides or documents?

No format is inherently more engaging than another. A well-structured one-page SOP that explains what a process is, why it matters, and how to do it can be more engaging than a ten-minute video covering the same content. The assumption that video automatically wins isn't backed by evidence.

That said, video does specific things well. It helps establish context, shows how work gets done in practice, and is easy to revisit in short segments. Scenario-based videos in particular tend to be replayed before a task, which supports application and reinforces decisions over time.

The question worth asking is: what does this content need to do, and which format serves that best? That's a design decision.

How do you keep training engaging at scale?

Designing for engagement at scale requires a more focused approach to scenarios. Training often becomes generic when it tries to design for everyone and ends up designing for no one. Instead, consider repeatable formats that can be easily modified and localized for different teams, whether that's one region or ten.

The teams that sustain engagement treat training as a living system. That means assigning clear ownership, setting a review cadence, and rebuilding scenarios when they no longer reflect real decisions.

It also means being honest about format. A program that worked as a live session in one region may need to be restructured as modular async content to scale without losing what made it effective.

What is the 70/20/10 rule in training?

The 70/20/10 rule suggests that 70% of learning happens through on-the-job experience, 20% through social relationships, and 10% through formal training and coursework. It's widely cited in L&D circles, but it isn't research-backed.

The original source is self-reported data from executives collected by the Center for Creative Leadership in the 1980s. The research was designed to show that leadership can be learned over a career, which is a reasonable conclusion. The specific percentages, however, were never validated as a prescriptive framework.

Two assumptions embedded in the model are particularly problematic: that unstructured experiential learning automatically results in capability development, and that formal training alone leads to learning transfer without reinforcement or practice. Neither holds up.

What the research does support is that learning requires intention, design, and follow-through regardless of the delivery format.

How do you know if training is actually engaging?

The most reliable signal is what happens after the training ends. Are people applying what they learned in their work? Are the same questions coming up again, or have they stopped? Are decisions becoming more consistent over time?

During the training itself, look for signs of genuine cognitive engagement. In a live session, that might be the quality of responses, whether people pause before answering, or whether discussion goes somewhere unexpected. In async content, it's where people stop, rewatch, or skip.

Completion rates tell you people showed up. They don't tell you whether anyone was thinking.