L&D is often asked to justify its existence. Leaders want proof in tighter budget cycles, and training effectiveness gets reduced to vanity metrics like completion rates and satisfaction scores.

The good news is you do not need to rely on anecdotes to show the impact of L&D. A strong body of research connects investment in development to engagement, retention, and performance. More importantly, it shows how learning builds organizational capability, which is what helps companies adapt when priorities change.

Whether you’re building an L&D function, defending an existing program, or aligning leaders on what to fund next, this guide is for you. It lays out a practical business case grounded in learning science and proven best practices, including personalized learning and learning in the flow of work.

📚 Learning and development (L&D) is the organizational function that builds employees’ knowledge, skills, and capabilities so the business can perform and adapt. It covers how learning is designed, delivered, supported on the job, and evaluated over time.

What does L&D need to deliver in 2026?

Enterprise learning is under pressure to keep up with an accelerating pace of change while budgets keep tightening. That combination makes training easy to dismiss unless it shows up as measurable performance.

What does “impact” mean in enterprise L&D now?

Impact means the business can execute change with less friction. People ramp faster. Work is more consistent across teams and regions. Avoidable errors drop, especially after new tools, new processes, or new policies roll out.

This definition fits the reality most organizations are planning for. The World Economic Forum’s Future of Jobs Report 2025 describes continued disruption through 2030, with employers expecting significant change to jobs and skills. In that environment, L&D is less about delivering courses and more about keeping capability current.

📚 Friction is any unnecessary obstacle that makes a task harder than it needs to be: extra steps, unclear handoffs, waiting, rework, or “workarounds” that drain time and slow the workflow.

Why isn’t “more training” the answer?

Because activity is not the same as performance. A 2025 meta-analysis finds a positive relationship between organizational training and organizational performance, but the strength of that relationship varies depending on what training targets and how performance is measured. Training can be well-produced and still miss the outcomes leaders care about if it is not tied to the right work and reinforced over time.

That’s why completion and satisfaction are weak as “proof.” They describe consumption. They do not confirm adoption, proficiency, or consistency.

What has to be true for learning to stick at scale?

Transfer has to be intentional. A 2026 systematic scoping review highlights a persistent challenge: evaluation tools for training transfer are widely used but remain fragmented and inconsistent. When transfer is hard to measure, it is harder to manage, and behavior change becomes guesswork.

Personalized learning works when it reduces effort and increases relevance. That means people get the right level of guidance for the task in front of them, based on role, context, and experience, without being pushed back into generic content. Personalization is less about building a unique course for every employee and more about matching support to the job.

Learning in the flow of work strengthens transfer because it shows up when decisions are made. People rarely fail because they forgot everything. They fail on the one step they can’t recall under pressure. Short guidance, examples, and refreshers delivered where work happens reduce cognitive load and make the right behavior easier to repeat until it becomes the standard.

📚 Personalization should narrow what someone sees (not expand it).

What should L&D leaders prioritize when budgets are tight?

Prioritize outcomes that reduce enterprise waste. Readiness for change is one. Adoption is another. Speed to update is the third. These three protect the business from the hidden costs of slow ramp, inconsistent execution, and outdated guidance.

This priority set also maps to how leading learning teams talk about value. LinkedIn’s 2025 Workplace Learning Report frames career development as a business strategy, not a perk, and pushes learning leaders toward measurement that connects development to retention, mobility, and business performance.

How does AI change the standard for what’s possible?

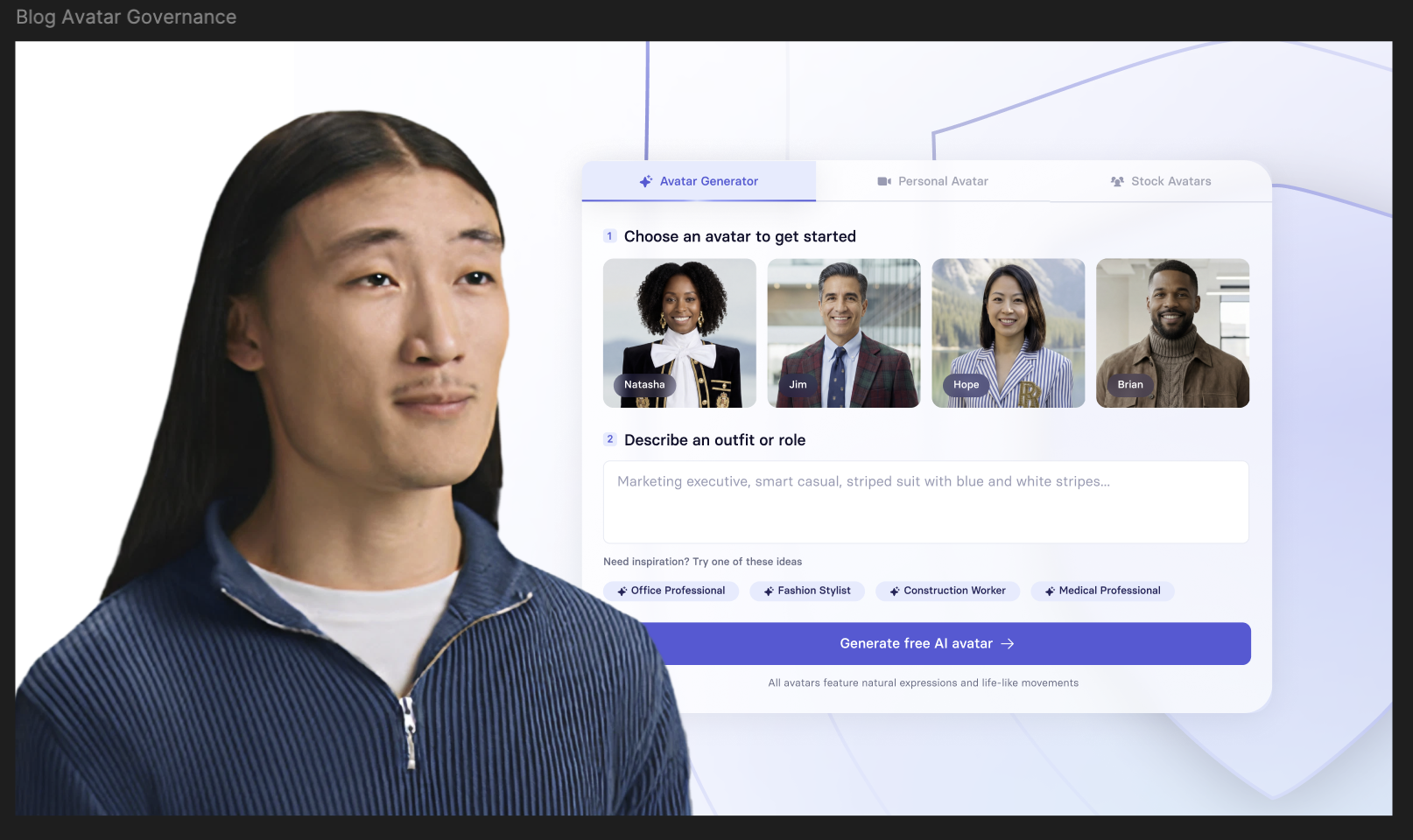

AI reduces the cost of producing, updating, and localizing learning. That changes what stakeholders expect. In Synthesia’s AI in Learning & Development Report 2026, teams report immediate value in time saved, and they also point toward growing value in localization and business impact.

Speed alone does not solve the hard part. The same research surfaces blockers like security and accuracy concerns, integration challenges, and unclear approval paths. Mature teams will use AI to keep learning current while tightening governance, not loosening it.

What outcomes should L&D prioritize in 2026?

These outcomes give leaders a clear way to judge impact and give L&D a practical way to design for scale. For each outcome below, you’ll see what to build, what to measure, where to start, and how AI video helps keep guidance consistent and current.

How do you prove impact, keep speed, and scale what works?

If you want L&D to earn trust, measurement has to support a business decision. Start by choosing one outcome the business already cares about. Define what “better” means in operational terms, and set a baseline so you can see movement over time.

Keep the system simple:

- Choose one primary outcome tied to the initiative.

- Define “better” in real work terms.

- Capture a baseline before launch.

- Schedule two check-ins: one early signal review and one later performance review.

- Lead with business metrics and use learning signals as supporting context.

- Use completion and satisfaction data to diagnose friction or clarity gaps.

- Document changes between versions so results stay interpretable.
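
The baseline and check-in steps above come down to simple arithmetic. The sketch below is one possible way to express it, not a prescribed method: the `OutcomeMetric` structure, the `relative_change` helper, and the numbers are all hypothetical and for illustration only.

```python
from dataclasses import dataclass


@dataclass
class OutcomeMetric:
    """One business metric tracked for a single initiative (hypothetical structure)."""
    name: str
    baseline: float        # value captured before launch
    lower_is_better: bool  # True for metrics such as error rate or ramp time


def relative_change(metric: OutcomeMetric, observed: float) -> float:
    """Return improvement as a fraction of the baseline; positive means better."""
    if metric.baseline == 0:
        raise ValueError("baseline must be non-zero to compute relative change")
    delta = (observed - metric.baseline) / metric.baseline
    return -delta if metric.lower_is_better else delta


# Illustrative numbers only, not real data.
ramp_time = OutcomeMetric("days to proficiency", baseline=40.0, lower_is_better=True)
early_signal = relative_change(ramp_time, observed=36.0)  # early signal review
later_review = relative_change(ramp_time, observed=30.0)  # later performance review

print(f"Early signal: {early_signal:+.0%}")
print(f"Later review: {later_review:+.0%}")
```

Reporting change relative to a baseline keeps the conversation on the business metric. Completion and satisfaction data can then sit alongside it as diagnostic context.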

Vanity metrics such as attendance, completion rates, or satisfaction/NPS can look strong while behavior stays the same. They reflect participation and sentiment. They do not show performance improvement.

Once you can show movement, the next challenge is keeping speed while maintaining trust. Faster production only helps when guidance is accurate, approved, and easy to access. That requires operating discipline:

- One accountable owner per asset

- Clear approval roles before publishing

- A single current version with visible version control

- Removal or redirection of outdated content

- Standardized localization from one source of truth

- A feedback loop where questions and exceptions trigger updates
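
For teams that track assets in a spreadsheet or a script, the checklist above could be expressed as a pre-publish check. This is a minimal sketch under stated assumptions: the `LearningAsset` fields and the `publishing_gaps` function are hypothetical names, not part of any particular platform.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class LearningAsset:
    """Hypothetical record for one piece of published guidance."""
    title: str
    owner: Optional[str] = None                         # one accountable owner
    approvers: List[str] = field(default_factory=list)  # approval roles
    version: Optional[str] = None                       # visible current version
    source_asset: Optional[str] = None                  # source of truth for localized copies


def publishing_gaps(asset: LearningAsset, is_localized: bool = False) -> List[str]:
    """List the operating-discipline items this asset is still missing."""
    gaps = []
    if not asset.owner:
        gaps.append("no accountable owner")
    if not asset.approvers:
        gaps.append("no approval roles assigned")
    if not asset.version:
        gaps.append("no visible version")
    if is_localized and not asset.source_asset:
        gaps.append("localized copy not linked to a source of truth")
    return gaps


# Illustrative asset only.
draft = LearningAsset(title="Expense policy update", owner="finance-enablement")
print(publishing_gaps(draft))
```

An empty list means the asset meets the basic checks. Anything else names what to fix before publishing.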

At scale, most breakdowns are operational. Impact slows when ownership is unclear, standards vary across teams, reinforcement fades after launch, or metrics focus on activity instead of outcomes. Strengthen the operating system, and learning becomes something you can run reliably and improve over time.

Key Takeaways

- L&D pays off when it improves how work gets done. Anchor your strategy to outcomes leaders already recognize, then design your learning system to deliver those outcomes reliably across teams, regions, and change cycles.

- Start with one priority outcome tied to a business initiative. Define what “good” looks like in operational terms, establish a baseline, and show movement over time. Then scale what works with clear ownership, reinforcement after launch, and updates that keep guidance accurate as reality changes.

- Use workflows that support ownership, approvals, and versioning so faster production strengthens trust. A practical next step is to draft one short video for a high-impact initiative, publish it where work happens, and measure what changes on the job.

To see what that looks like, watch how you can create a video in minutes. Then try building your own with Synthesia’s text-to-video tool.

Amy Vidor, PhD is a Learning & Development Evangelist at Synthesia, where she researches learning trends and helps organizations apply AI at scale. With 15 years of experience, she has advised companies, governments, and universities on skills.

Frequently asked questions

What’s the difference between training, enablement, and capability building?

Training builds knowledge and skill. Enablement removes friction so people can apply that skill in real work. Capability building is the system that keeps performance improving over time, even as tools, policies, and priorities change. When you invest only in training, you tend to measure completion. When you invest in capability, you see consistency in how work gets done.

Why do training programs fail to change behavior at scale?

Because learning often stops at understanding. People finish a course, then return to a job shaped by deadlines, habits, and local workarounds. If the training is not tied to a real workflow, if managers cannot coach it, or if the guidance is hard to find at the moment of need, behavior does not shift. The fix is usually fewer one-time events and more reinforcement that shows up where work happens, paired with content that stays current.

How do you reduce bottlenecked readiness when only a few experts can train everyone?

Treat experts as the source of truth, not the delivery channel. Capture what they know once, then turn it into reusable training that teams can access on demand. Standardize the explanation, the examples, and the expected outcomes so regions and functions do not diverge. This is where video earns its place. It preserves the expert’s clarity, scales across time zones, and is easier to update than repeating live sessions.

How do you build skills at scale without overloaded upskilling?

By designing for the workday you have, not the one you wish you had. Keep learning tied to real tasks, and keep the scope narrow enough that someone can use it the same day. Reduce “extra” training time by replacing long sessions with short modules that answer one question and remove one source of error. If people need the content again later, make it easy to return to the exact step they need.

What should enterprise L&D prioritize in 2026?

Prioritize outcomes that leadership can feel. Readiness for change matters because the organization will keep shifting. Adoption matters because new tools and processes do not pay off until behavior changes. Speed to update matters because stale guidance creates exceptions, rework, and risk. When you choose priorities through that lens, you can measure progress in time to proficiency, quality, cycle time, and policy adherence, not just course completions.

How can AI help without increasing risk?

AI helps most when it improves scale while keeping control. That means governed templates, approved language, and clear review ownership for anything that touches policy, legal, or technical accuracy. It also means tight access control and versioning so teams know what is current. Use AI to reduce repetitive work such as updates, localization, and role variations. Keep accountability with the humans who own the content.