
There’s plenty of advice on creating employee training (including on this blog), but most of it skips the hard part: building a strategy that makes training stick.

This guide fixes that. We’ll focus on foundations: set up the infrastructure (even if you’ve already started), create feedback loops that keep training tied to real work, optimize for the business outcomes that matter, and scale deliberately as demand grows. Often the answer isn’t more content, tools, or headcount. It’s learning to deploy what you already have so training becomes a system that drives measurable impact.

1. Start with a skills architecture

If training isn’t tied to a shared definition of skills, it’s hard to prove progress. You can ship great content and still struggle to answer the questions leaders care about: What capability are we building? Who needs it? What does “proficient” look like? How will we know performance improved?

A skills architecture gives you that foundation. It’s a practical system that defines the skills that matter (core, functional, and role-specific), sets 2–3 proficiency levels with observable behaviors, maps required skills to roles and levels, and specifies what counts as evidence (rubrics, QA checks, work samples, observations). It also needs governance: a named owner, a change process, and a review cadence.

Skills mapping should read like this:

Skill → How you train it → How you practice → How you verify → What you measure.
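If you keep the skills map in a spreadsheet or a script, one row of that chain could be sketched roughly as below. This is a minimal illustration, not a prescribed format: the `SkillMapping` class, its field names, and the refund-handling example are all hypothetical. The only point is that every skill carries all five links, and that a blank link is easy to spot.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SkillMapping:
    """One row of a skills map: skill -> train -> practice -> verify -> measure."""

    skill: str
    training: str   # how you train it
    practice: str   # how people practice it
    evidence: str   # how proficiency is verified
    metric: str     # what the business measures

    def missing_links(self) -> list[str]:
        """Return the names of any links in the chain that were left blank."""
        return [name for name, value in vars(self).items() if not value.strip()]


# Hypothetical example row for a support role (illustrative values only).
refund_handling = SkillMapping(
    skill="Handle refund requests",
    training="10-minute policy walkthrough",
    practice="Three scenario tickets",
    evidence="QA rubric on five live tickets",
    metric="",  # left blank on purpose to show the gap check
)

print(refund_handling.missing_links())  # ['metric']
```

A check like `missing_links()` is one simple way to catch skills that have training attached but no agreed measure before they go into a program.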

👉 Start here: Pick one business-critical role and define 6–10 skills with 2–3 proficiency levels and one evidence method per skill.

2. Design intentional partnerships

Training stays relevant when you build reliable inputs from the teams closest to hiring, performance, and change. These partnerships aren’t about adding more approvers. They create signal loops so you can spot skill gaps early, adjust quickly, and keep learning tied to business needs.

A partnership model includes a small set of core partners (HRBP or People Partner, a functional leader, and an enablement lead — plus Talent Acquisition when hiring is a constraint). Run a simple signal loop: a 30-minute monthly review focused on what’s changing in work and where performance is slipping. Use a lightweight intake so requests are comparable (business driver, audience, skill gap, success metric, constraints). Then do a quarterly reset to re-rank priorities based on business impact and capacity.

Use partners to surface specific signals:

- Talent Acquisition: Which skill gaps show up in hiring? What should we hire for vs. develop?
- HRBPs / People Partners: Where do performance conversations get stuck? What skills block progression?
- Functional leaders: What changed in the work? Where are errors, delays, or rework increasing?
- Enablement leaders (Sales, CS, IT, Ops): What do high performers do differently? What do new hires struggle with in the first 30–90 days?

👉 Start here: Set a 30-minute monthly signal review with one HRBP and one functional leader, using a one-page intake that captures business driver, skill gap, and success metric.

3. Set outcomes that matter

Training earns attention when it’s anchored to outcomes the business already recognizes and reviews. That means moving past “learning objectives” and defining performance outcomes instead: what people should do differently at work, and what should improve as a result.

Start with the business driver. Then set 2–3 outcomes you can track over time, using the same language leaders use to run the business (quality, speed, customer outcomes, risk, cost). Make the proof explicit: define what counts as evidence, capture the baseline before launch, and set a review rhythm so the program gets iterated as work changes.

👉 Start here: Write one business-driver sentence, then choose two performance outcomes and one evidence method for each before building any content.

4. Design for skill transfer

Training only matters when it shows up in real work. Skill transfer is the bridge between “people learned something” and “performance changed.” The work environment is part of the design: peer support, manager coaching, and organizational reinforcement determine whether new behaviors stick after the session ends.

Design for transfer by building job practice into the workflow (scenarios and tasks that mirror real tickets and decisions), pairing it with clear feedback (rubrics, examples, coaching prompts), reinforcing it after launch (spaced follow-ups, reminders, refreshers), and validating it with a lightweight proficiency check tied to actual work.

👉 Start here: Take one high-impact skill and add one practice scenario plus a simple rubric or manager checklist that proves proficiency.

5. Deliver learning in the flow of work

Most training fails at the handoff: the moment someone needs to apply it. People don’t forget because they’re careless. They forget because work is busy, systems are complex, and support isn’t available at the point of need.

Learning in the flow of work closes that gap by embedding guidance where decisions happen. When support sits inside the workflow, it reduces guesswork, makes “the standard” easier to follow, and turns training into day-to-day performance support.

Design it around four pieces: identify the moments of need (exceptions, handoffs, first-time tasks), build small in-flow assets (checklists, decision aids, short clips, templates), place them inside the tools and workflows people already use, and iterate based on real breakdown points and usage signals.

👉 Start here: Pick one error-prone workflow and publish a single in-flow asset (checklist, decision aid, or 60-second clip) inside the tool where the work happens.

6. Make managers your multiplier

Managers are the fastest path from “completed training” to “changed behavior.” They translate the standard into day-to-day expectations, create practice moments in real work, and reinforce what “good” looks like when things get messy. When managers coach to the same standard, training stops being an event and starts becoming performance.

Give managers a lightweight kit: define the 1–2 behaviors that signal success for the skill, provide three coaching prompts they can use in 1:1s or huddles, use a simple observation method (checklist or rubric) tied to real work, and identify the practice moments where managers can watch, coach, and confirm proficiency.

👉 Start here: Pick one high-impact skill and give managers a one-page kit (standard, 3 prompts, checklist) they can use in the next team meeting.

7. Treat content like a product

L&D can borrow a lot from product management. Product teams don’t ship once and move on. They assign ownership, manage versions, and release updates so customers trust what they’re using. Training should work the same way.

When content is managed like a product, it stays accurate through change, scales across teams, and improves over time. That’s an operating model challenge as much as a content challenge.

A product-style content model looks like this: one named owner per module, clear versioning (last updated + short change log), modular design so updates don’t trigger rebuilds, a predictable maintenance cadence plus an “urgent patch” path for policy changes, shared templates and standards for scripts/scenes/assessments/job aids, and a backlog driven by signals (QA findings, adoption issues, drop-off, stakeholder input). If you scale globally, bake in a localization workflow from the start: glossary, translation, and regional review.

👉 Start here: Pick one training “product,” assign a single owner, and add two basics: version + last-updated date, and a brief change log.

8. Prove impact with performance data

If you want training to function as a business lever, measure it like one. Completions and satisfaction scores tell you whether the experience landed. Performance data tells you whether capability improved.

Anchor each program to a clear business driver. Define the 2–3 outcomes that should move, using the same language leaders use to run the business (quality, cycle time, error rate, time to proficiency). Decide what on-the-job behavior will signal progress, capture the baseline before launch, and set a defined review point to decide whether to adjust, stop, or scale.
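As a rough sketch of what "capture the baseline, then review" can look like in practice, the snippet below compares two metrics at baseline and at the review point. The metric names and all numbers are made up for illustration, and `percent_change` is a hypothetical helper, not part of any tool mentioned here.

```python
def percent_change(baseline: float, current: float) -> float:
    """Relative change from baseline, in percent (negative means a decrease)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return round((current - baseline) / baseline * 100, 1)


# Hypothetical figures: captured before launch and at the defined review point.
baseline = {"error_rate": 0.08, "days_to_proficiency": 45}
review = {"error_rate": 0.06, "days_to_proficiency": 36}

changes = {metric: percent_change(baseline[metric], review[metric]) for metric in baseline}
print(changes)
```

A before-and-after comparison like this shows movement, not cause. Pair it with the on-the-job behavior signal before deciding whether to adjust, stop, or scale.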

Training earns credibility when its results show up in the same dashboards as the work.

👉 Start here: Pick one program and define two performance metrics plus one on-the-job behavior signal you can measure from baseline through rollout.

9. Use AI to scale personalization

Personalization is where L&D can move from “one program for everyone” to targeted support that actually changes performance. The goal isn’t novelty. It’s precision at scale: the right practice, in the right sequence, for the right role and proficiency level. That shift is already happening. Teams expect AI to drive value most through improved learner experience and personalization, with growing planned use in areas like personalized pathways, assessments, and skills mapping.

Keep personalization grounded in work. Tailor by role, proficiency level, and context. Use simple adaptive logic (“if this gap shows up, then this comes next”), and prioritize practice that matches real workflows: scenarios, drills, and checks that mirror the job. To keep it safe and consistent, lock personalization to approved sources and terminology, keep human review in the loop, and manage versions like any other content. Then improve the recommendations using real signals — assessment results, QA patterns, manager checklists, and usage.
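The "if this gap shows up, then this comes next" logic can start as a plain lookup table rather than anything sophisticated. The sketch below assumes assessment scores between 0 and 1 and a pass mark of 0.8. The skill names, activities, and threshold are hypothetical placeholders you would replace with your own approved content.

```python
from __future__ import annotations

# Hypothetical rules: skill gap -> next approved practice activity.
NEXT_STEP_BY_GAP = {
    "escalation_judgment": "Scenario drill: when to escalate",
    "policy_accuracy": "Refresher clip plus five-question check",
}
DEFAULT_STEP = "Continue standard path"
PASS_MARK = 0.8  # assumed threshold; set this from your own rubric


def next_steps(scores: dict[str, float]) -> list[str]:
    """Return a practice activity for each skill below PASS_MARK, weakest first."""
    gaps = sorted((score, skill) for skill, score in scores.items() if score < PASS_MARK)
    # Gaps with no approved activity are routed to a person instead of guessed at.
    steps = [NEXT_STEP_BY_GAP.get(skill, f"Manager review: {skill}") for _, skill in gaps]
    return steps or [DEFAULT_STEP]


print(next_steps({"escalation_judgment": 0.6, "policy_accuracy": 0.9}))
```

Routing unknown gaps to a manager review is one way to keep human review in the loop. The rules table itself is content, so it deserves the same owner and versioning as any other module.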

👉 Start here: Pick one role and split one learning path into two proficiency levels (foundational and proficient), then use AI to generate role-specific practice questions and scenarios for each level.

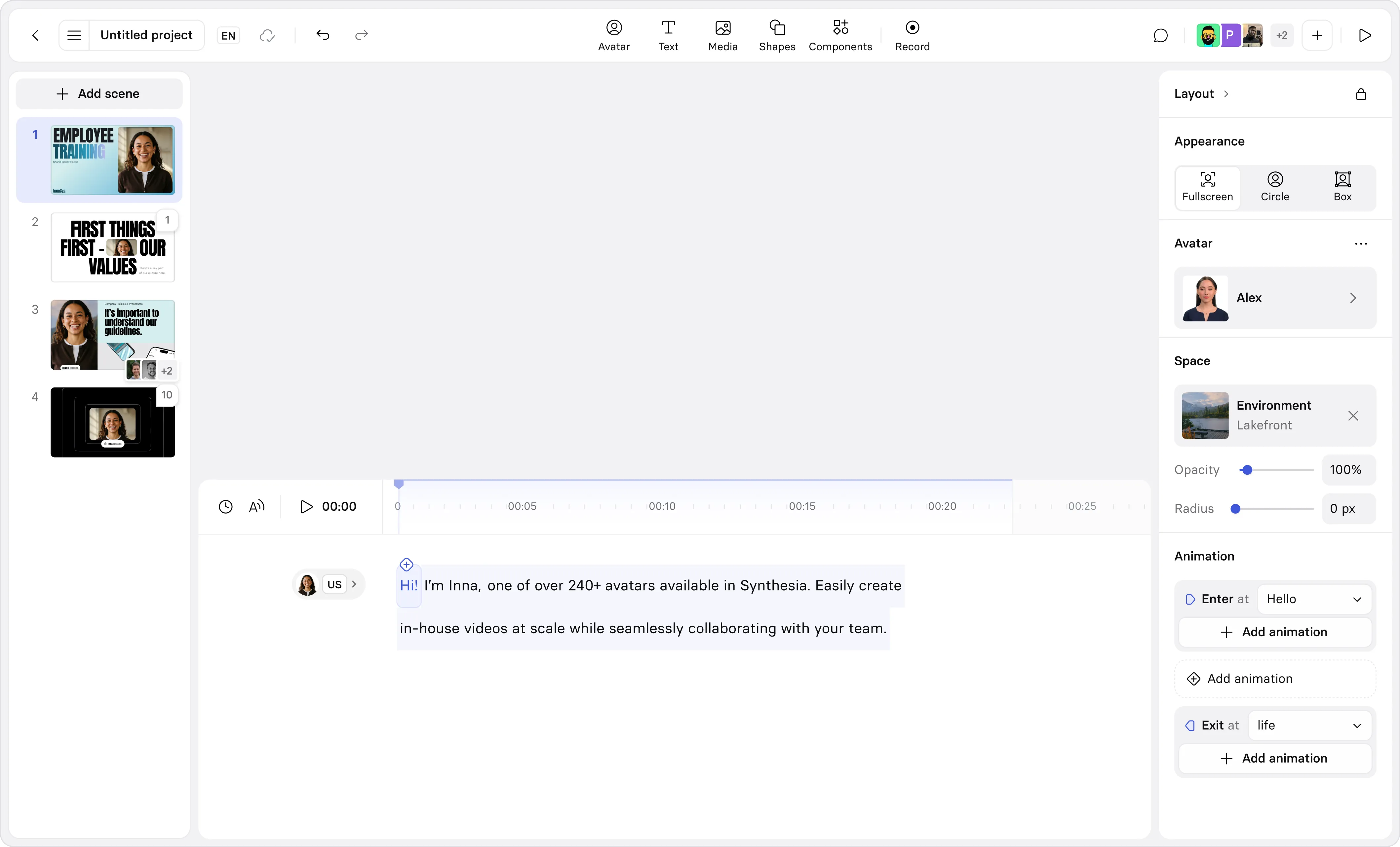

10. Scale globally with AI video

AI video training isn’t a replacement for in-person training, live problem solving, or the kind of relationship-building that happens when teams learn together. Those moments still matter, especially for leadership, strategy, and complex change. What has changed is scale. AI video makes it practical to deliver consistent training across regions, keep it current as work evolves, and reach people in the flow of work, in the language and context they need. That direction aligns with how leading organizations are rethinking development: less “leave work to go learn,” more learning embedded into how work gets done.

To make it work in practice, you need a clear global baseline for “what good looks like,” a template system so modules stay consistent, a localization workflow that includes glossary + translation + regional review, and an update model with versioning and a fast path for policy or process changes. Put a named owner behind it, with a clear approval path, so accuracy doesn’t drift over time.

👉 Start here: Use Synthesia’s text-to-video tool to turn one global onboarding script into a short AI video module.

Key Takeaways

The strongest employee training programs drive business impact by tying learning to skills, on-the-job performance, and progression — and then improving that system over time.

Build the infrastructure first. Stay close to change through tight partnerships. Design for transfer so new skills show up in real work. Measure impact with performance data. Scale deliberately: use AI to personalize practice and AI video to deliver consistent training across roles, regions, and languages.

If you want momentum fast, start narrow. Pick one role, one workflow, and one outcome you can measure. Ship a first version, learn from the signals, and expand from there.

Amy Vidor, PhD is a Learning & Development Evangelist at Synthesia, where she researches learning trends and helps organizations apply AI at scale. With 15 years of experience, she has advised companies, governments, and universities on skills.

Frequently asked questions

What are L&D best practices for employee training?

L&D best practices are repeatable methods for building workforce capability at scale. The strongest programs start with skills and performance requirements, align learning to measurable outcomes, deliver practice-based experiences, keep content current through governance, and measure impact beyond completion rates. In 2026, many teams are also using AI to improve personalization and speed up iteration, so learning stays relevant as work changes.

How do I design experiential, scenario-based training that changes behavior?

Design around real decisions people make on the job. Use scenarios that mirror your workflows, include realistic constraints, and require learners to choose an action, see consequences, and try again. Add quick feedback loops (manager debriefs, peer review, or coached practice) so learners connect training to performance expectations.

What's the best way to keep training content up to date as processes evolve?

Treat training as a living asset with clear ownership and a refresh cadence. Use modular content (short videos and reusable building blocks), maintain templates for consistency, and set triggers for updates (process changes, policy changes, product releases). Review engagement and drop-off points using analytics, then prioritize updates where they will change outcomes fastest.

Which metrics should I track to measure training impact beyond completion rates?

Start with 2–3 business-linked measures that should change after training: time-to-proficiency, error rates, quality scores, customer outcomes, cycle time, or compliance exceptions. Pair those with behavior signals (assessment performance, scenario decisions, manager observation) and learner confidence. Use a structured evaluation approach during design and after launch so measurement supports iteration and impact.

How can AI video help us scale and localize training for global teams?

AI video helps teams ship consistent training faster, update content without reshoots, and localize for global audiences with far less overhead. That matters in 2026 because AI use in L&D is already common, and teams are focusing on personalization and scalable delivery. Use AI video to standardize core messages, create role-based variations, and refresh modules as processes change.