New security, privacy, and product policy updates for Synthesia 3.0

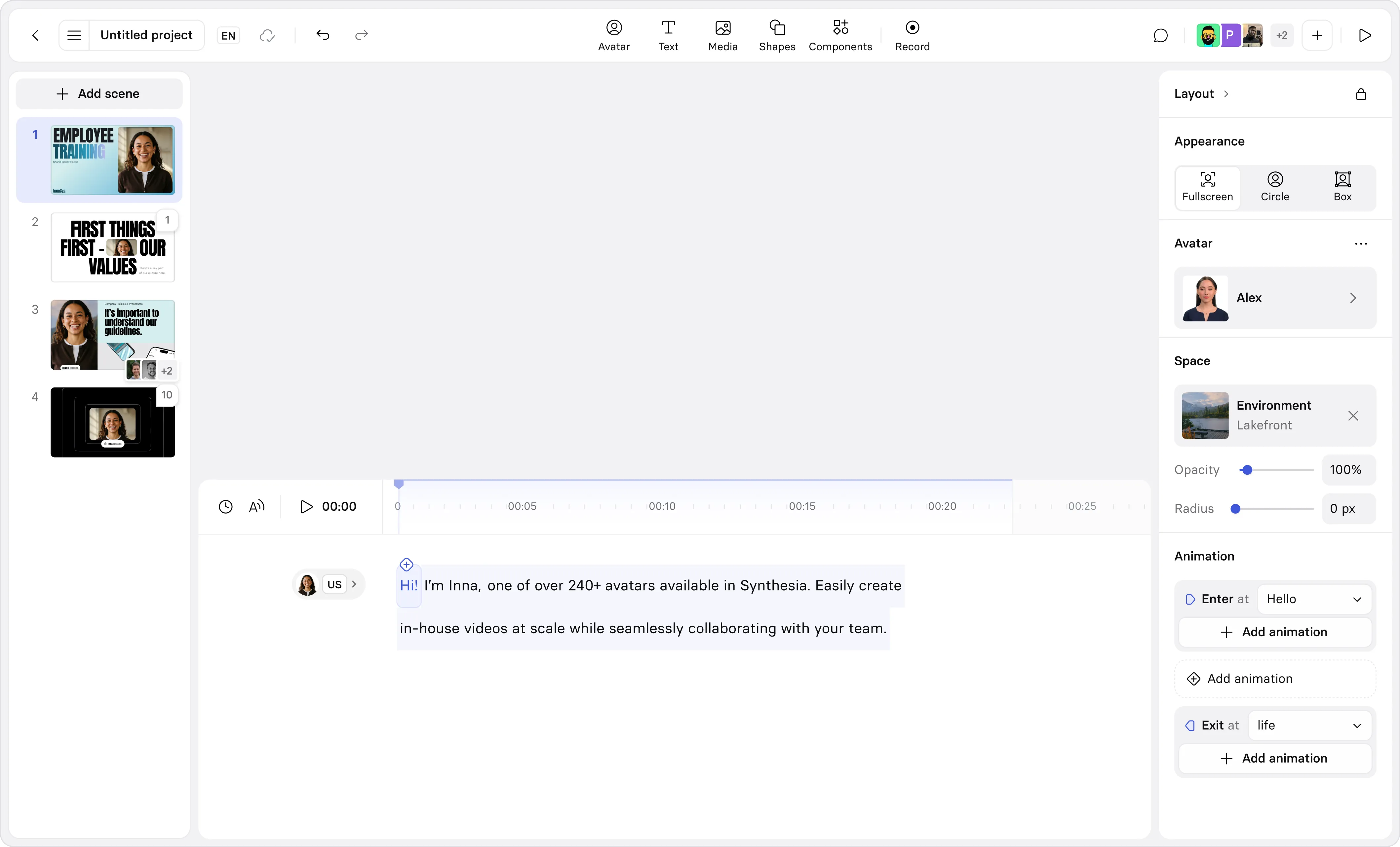


Synthesia builds and sells enterprise AI software, and that starting point has always shaped how we think about security, privacy, and trust.

Consumer AI products are often designed for open-ended creativity at internet scale, with guardrails that have to work across a near-infinite range of contexts. By contrast, Synthesia helps people work better and organizations communicate at scale inside governed environments: employee training, sales enablement, customer support, internal comms. That means our approach to trust and safety is built around practical misuse scenarios that show up in real workflows, and around the controls enterprises expect in their software products.

We’re also an application-layer AI company rather than a research lab. Model developers have to reason about fundamental, frontier risks that sit at the base layer of capability. We have to address a different set of issues: how people will try to bend a production system in the messy reality of business operations, and how to design enforcement, auditing, and user experience so that the platform remains safe without becoming unusable. That product reality is why we moderate content at the point of creation, before it’s generated or distributed.

Today, we’re sharing a set of policy, product, and operational updates that reflect how AI video, and the norms around it, are maturing as we develop Synthesia 3.0, a new version of our platform designed for more personalized and interactive video experiences.

A new milestone in enterprise AI assurance

Synthesia is now certified for ISO 27701, alongside ISO 42001 and ISO 27001.

In combination, these standards are a strong signal of enterprise-grade maturity across the full stack of trust: ISO 27001 validates an information security management system; ISO 27701 extends that foundation into privacy and the management of personally identifiable information; and ISO 42001 covers AI management systems, meaning how AI is governed, monitored, and improved over time.

Together, they create a coherent assurance story for organizations adopting AI video at scale: security and privacy aren’t treated as add-ons, and AI-specific governance is formalized rather than improvised. We also continue to monitor the development of emerging standards and governance frameworks that support safe and responsible AI development and deployment, and we aim to lead by example (as we did by becoming the first generative AI company to achieve ISO 42001 certification), setting the bar for enterprise-ready AI governance. At the same time, we recognize that although certification formalizes our management systems, responsible AI still requires continuous product iteration, red teaming, and enforcement refinement.

What we’ve changed since the start of the year

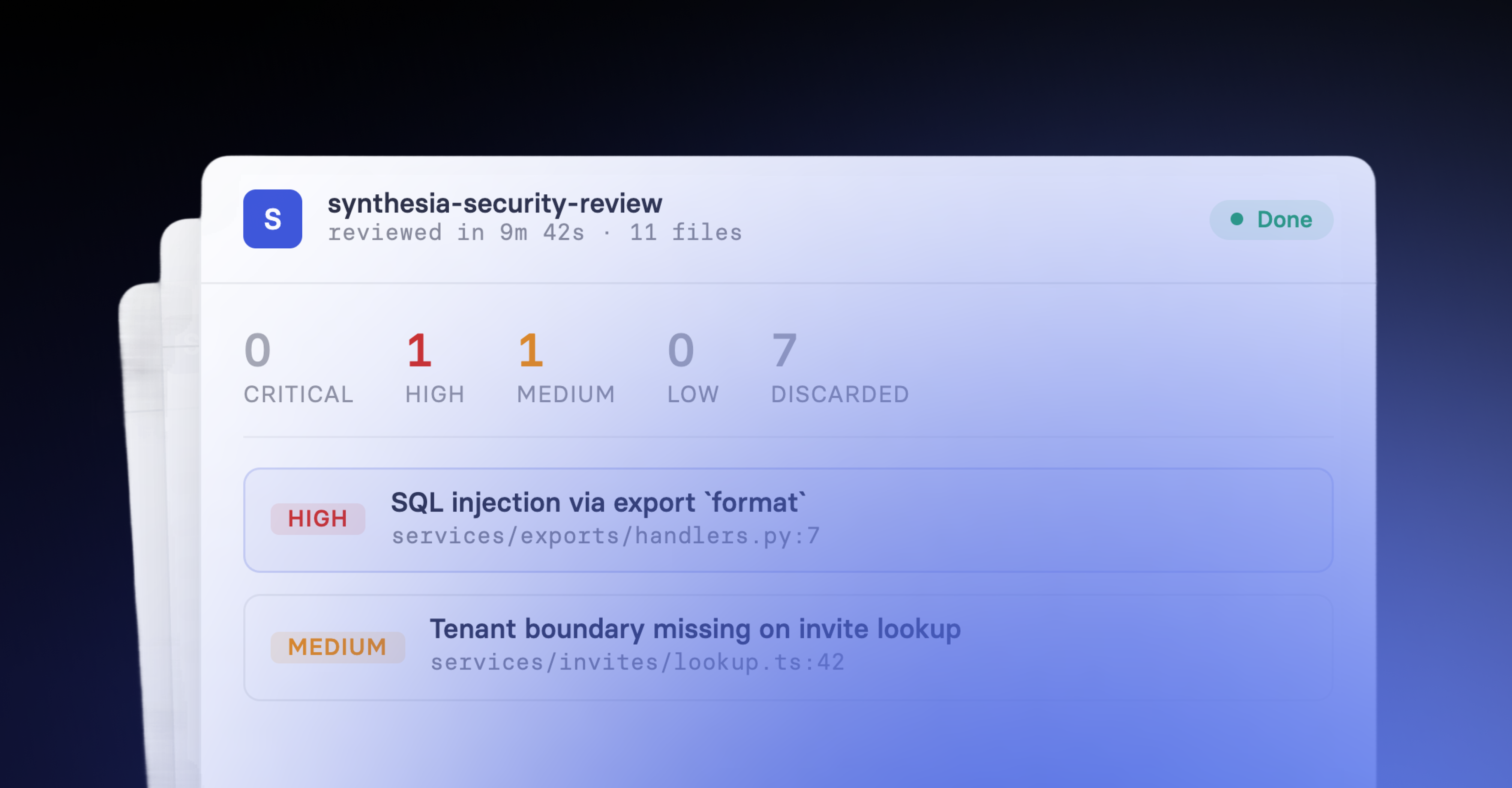

We’ve spent the last year refining the performance of our moderation systems and using appeals outcomes and red teaming insights to improve enforcement quality while minimizing disruption to legitimate enterprise use. These changes are enabled by better technology becoming available and by our investing more resources in the accuracy of our systems and the effectiveness of our teams.

One place this shows up immediately is content moderation, our first line of defense. When we first implemented automated safeguards a few years ago, we intentionally tuned them to be strict. AI video was still an emerging capability, the threat landscape was volatile, and we chose caution over convenience. Over time, that approach has created friction for some benign use cases: false positives where the intent and context were legitimate, but the system still over-enforced. Our direction now is to preserve early detection while reducing unnecessary disruption, and we’re working with third-party vendors to further improve the accuracy of our moderation systems and reduce false positives. This is also reflected in our broader trust and safety roadmap, which explicitly prioritizes reducing false positives at the point of creation.

We’ve also evolved how we think about Avatars, because the category itself is evolving. Synthesia now supports two types of Avatars:

- Stock Avatars based on paid actors or generated by Synthesia with AI. Stock Avatars follow a dedicated set of policies that are typically more restrictive in order to protect the likeness of the actors we work with.

- Custom Avatars based on the likeness of specific users who are real people or based on an AI-generated likeness that the user designs. Custom Avatars are governed by a more relaxed set of rules as they are based on the likeness created by a specific user.

We have completed dedicated red teaming work to limit the creation of harmful content while still giving businesses the flexibility to design Avatars that fit their needs. More broadly, our position is simple: while creative choice expands, our moderation standards remain consistent across Avatar types, and we continue to prohibit the creation of Avatars depicting celebrities, public figures, or deceased individuals.

Customizable Avatars are also a meaningful shift in how safety is implemented in practice throughout our platform. Unlike Avatars created from a real individual with that person’s explicit consent, AI-generated likenesses rely on an image model, an approach that expands creative flexibility but also changes where certain safeguards live. Because this creation flow relies on third-party AI models, we’re leaning more than we historically have on those providers’ own content moderation and abuse-prevention systems as an upstream layer of protection. In concrete terms, that means we’re increasingly dependent on model-level checks that detect and block attempts to generate non-consensual likenesses, alongside our own controls and red teaming work. We’re approaching this as a deliberate, layered design choice: stronger defenses earlier in the pipeline, tighter integration of vendor safety signals, and ongoing testing to ensure customizable Avatars remain a tool for legitimate enterprise use, not a pathway for non-consensual deepfakes.

Finally, we’ve made a change that’s less visible from the outside, but matters a lot to the day-to-day product experience: what happens when content fails moderation. Until recently, if a video was caught by our systems, the user could lose access to the entire video and its previous versions. That enforcement was too blunt, especially in cases where a user made an unintentional mistake or misunderstood how a policy applied to their context. Rejected videos now remain editable and can be resubmitted rather than being treated as deleted. When a video fails moderation, users can update the content and resubmit directly instead of starting over, and they retain access to prior versions that didn’t violate policy. This is the same principle we apply across our overall approach: enforcement should stop harm, but it should also help legitimate customers recover quickly and keep work moving.
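For readers who think in code, the recovery behavior described above can be sketched roughly as follows. This is an illustrative model only, not Synthesia's implementation: the `Video`, `Version`, and `Status` names, the `submit` method, and the `toy_check` rule are all hypothetical and exist purely to show the idea that a rejected version is kept, the user can resubmit an edited one, and earlier compliant versions stay accessible.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Version:
    script: str
    status: Status


@dataclass
class Video:
    # Every submitted version is kept; a rejection never deletes history.
    versions: list[Version] = field(default_factory=list)

    def submit(self, script: str, passes_moderation) -> Version:
        """Record a new version and mark it approved or rejected."""
        ok = passes_moderation(script)
        version = Version(script, Status.APPROVED if ok else Status.REJECTED)
        self.versions.append(version)
        return version

    def accessible_versions(self) -> list[Version]:
        """Versions that did not violate policy remain available."""
        return [v for v in self.versions if v.status is Status.APPROVED]


def toy_check(script: str) -> bool:
    # Hypothetical placeholder rule, for illustration only.
    return "forbidden" not in script.lower()


video = Video()
video.submit("Welcome to onboarding.", toy_check)
rejected = video.submit("A forbidden claim.", toy_check)
# The user edits the script and resubmits instead of starting over.
fixed = video.submit("A corrected claim.", toy_check)
```

In this sketch the rejected version stays in the history alongside the others, so the user can revise it and submit again, while `accessible_versions()` continues to return the versions that passed.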

Building the security layer that makes AI adoption faster

AI video is becoming part of the infrastructure of modern work. The organizations adopting it aren’t looking for novelty; they’re looking for reliability, governance, and speed without surprises. That’s why we invest in proactive safeguards at creation time, layered enforcement that combines automated systems with expert human oversight, and external validation that stands up to enterprise scrutiny.

We’ll keep strengthening the platform in ways that make responsible use the default and misuse harder over time, while continuing to treat enterprise-grade security, privacy, and trust as product features that accelerate enterprise adoption globally, responsibly, and at scale.

Alexandru Voica is Head of Corporate Affairs and Policy at Synthesia. He has experience across tech, social media, gaming, and retail, and an engineering background with a degree in Virtual Reality from Sant’Anna School.