🎬 What are the best alternatives to Colossyan?

- Synthesia: Best for interactive training, enablement, and internal corporate communication

- Creatify: Best for UGC-style social ads and performance marketing videos

- HeyGen: Good quality, expressive avatars with fast rendering

- Camtasia: Built for screen-first editing workflows

- Elai: Offers super-fast video rendering

- D-ID: Focuses on converting photos into talking avatars

How I tested these Colossyan alternatives

I tested these AI avatar platforms using the same script in two languages to ensure a consistent, side-by-side comparison.

Each platform was evaluated against Colossyan using identical inputs and similar workflows. On average, I spent about an hour testing each tool, covering avatar realism, lip-sync accuracy, localization quality, workflow experience, and overall stability.

How do these Colossyan alternatives compare?

1. Synthesia

URL: https://www.synthesia.io/

What is Synthesia?

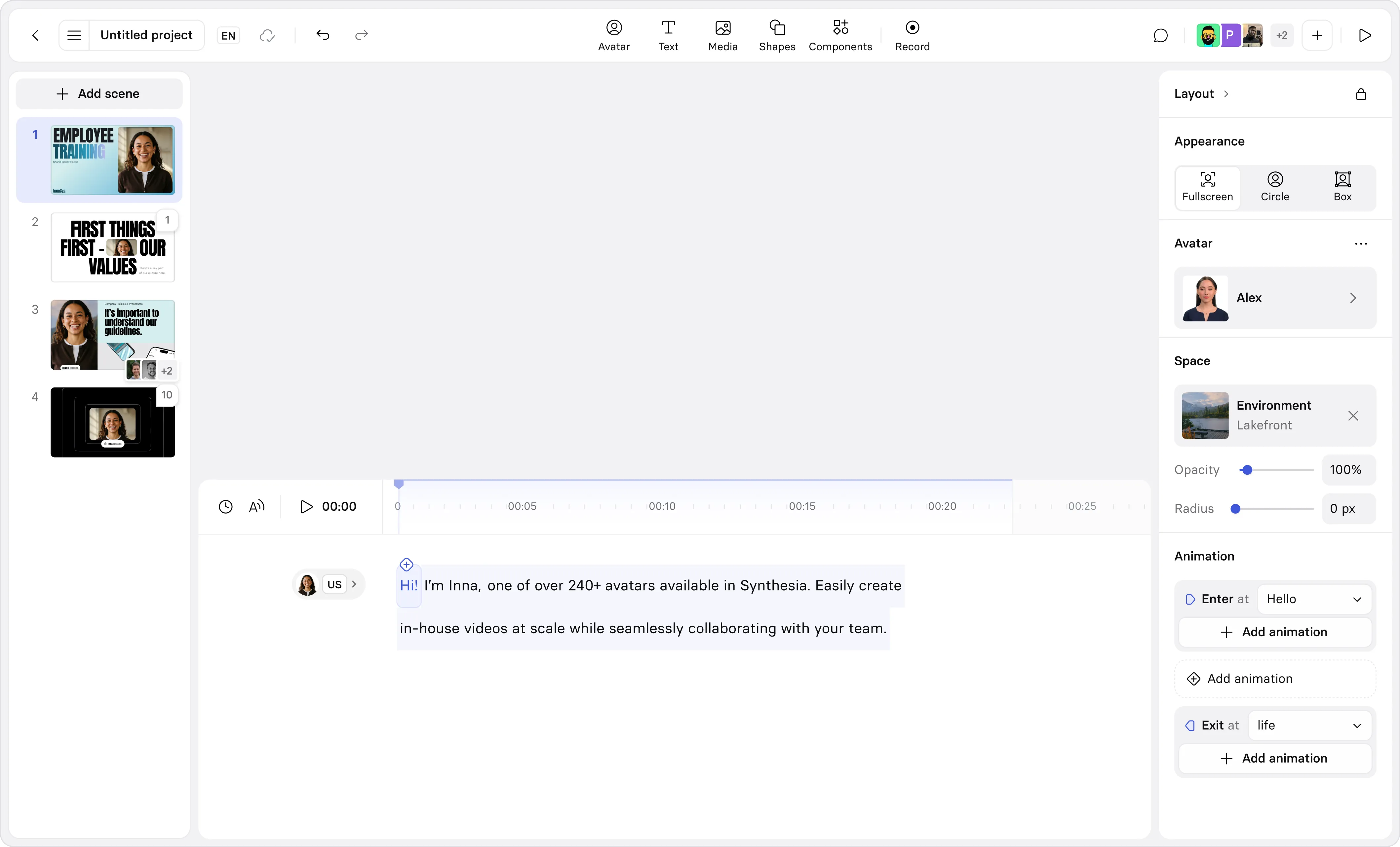

Synthesia is a script-driven AI avatar platform built specifically for structured, enterprise-grade video creation, with corporate training as one of its core use cases.

How realistic are Synthesia’s avatars?

In my hands-on testing, Synthesia’s avatars achieved very high realism. Facial micro-expressions, natural eye contact, subtle head movement, and coordinated hand gestures created a cohesive presenter presence. Lip-sync remained accurate and stable even in longer training scripts.

Compared with Colossyan’s more preset-driven animation patterns, Synthesia’s avatars feel more fluid and dynamic, which makes extended training modules feel more natural and less repetitive.

How expressive and natural are the avatars?

Synthesia’s avatars deliver controlled, professional expressiveness. Gestures align naturally with speech pacing, and delivery feels like a confident trainer presenting material clearly and consistently.

Colossyan’s avatars work well for structured instruction, but motion feels more templated. In longer learning sessions, Synthesia’s broader gesture range and smoother transitions enhance engagement.

How good are the voices and lip-sync?

Voice quality in Synthesia was strong in my testing. Delivery felt natural, pacing was adjustable, and paragraph-level regeneration allowed precise refinements. Lip-sync was highly accurate, reinforcing realism during instructional delivery.

Colossyan’s voices are clear and instructional, but Synthesia’s broader voice ecosystem provides slightly richer cadence and tonal flexibility.

How strong is localization and multilingual support?

Synthesia excels in localization. It supports 160+ generation languages and 139 translation languages, with in-editor translation that preserves pacing and lip-sync.

Colossyan supports 80–100+ languages with translation and dubbing, but Synthesia’s multilingual workflow feels more seamless in practice, especially for scaling enterprise training across regions.

What use cases does Synthesia excel at?

Based on my experience, Synthesia performs exceptionally well in:

- Corporate training and onboarding

- Compliance and HR modules

- Sales enablement programs

- Internal communications

- Multilingual learning rollouts

- Interactive video-based training

- LMS-integrated course delivery

Training is one of Synthesia’s primary strengths.

What use cases does Synthesia struggle with?

Synthesia is less suited for:

- UGC-style social ads

- Highly stylized creative storytelling

- Rapid image-to-avatar micro-content

- Deep manual timeline editing workflows

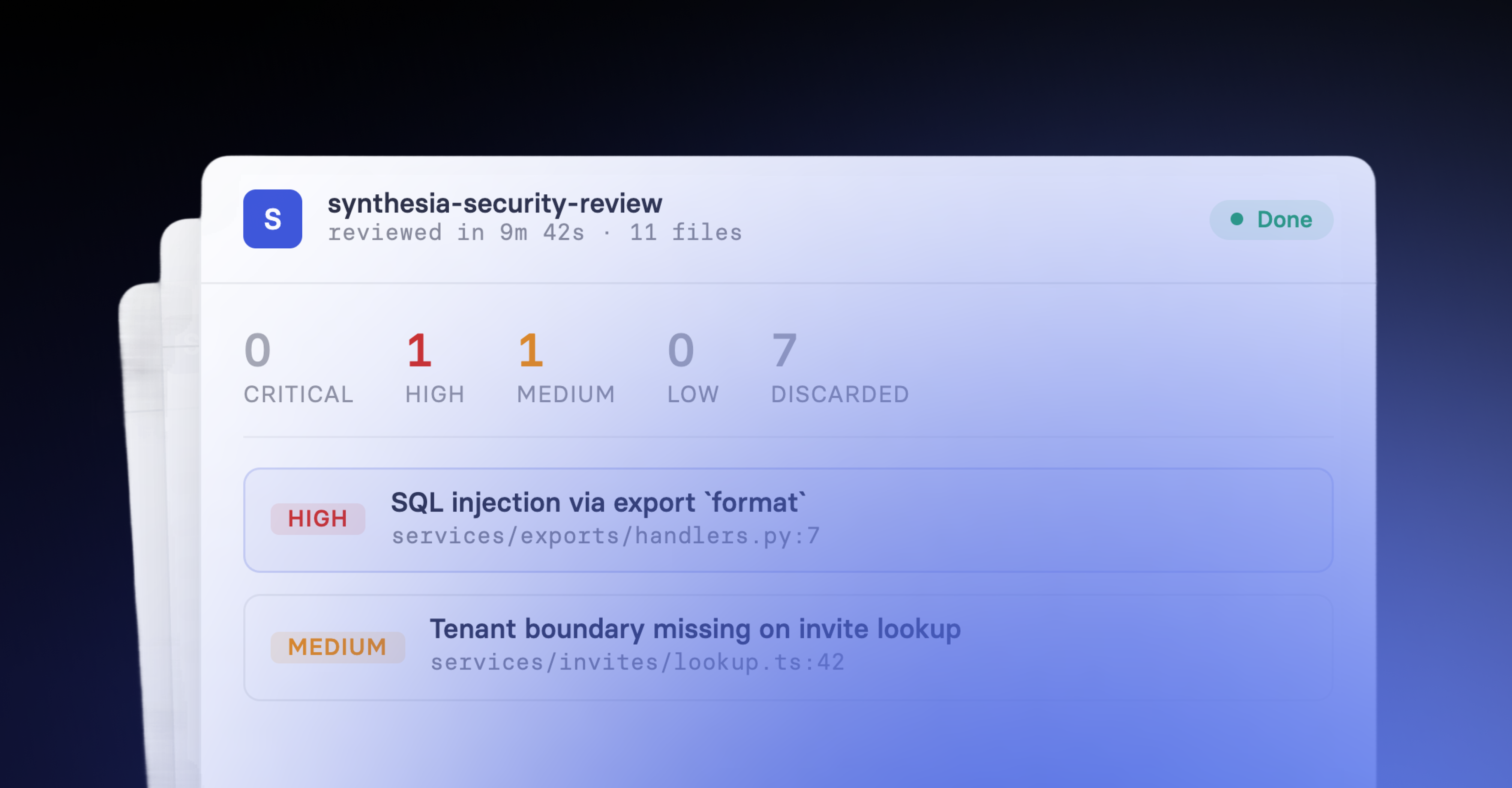

What are Synthesia’s strengths?

- Highly realistic avatars

- Strong interactive video features

- Robust LMS and SCORM export support

- Seamless multilingual translation

- Stable lip-sync and pacing control

- Enterprise permissions and publishing controls

What are Synthesia’s weaknesses?

- Does not work on Safari

- Rendering can be slower than some competitors

- Limited granular timeline editing

How does Synthesia compare to Colossyan?

Both platforms target training teams, but Synthesia feels more complete as a training solution.

Synthesia combines realistic presenters, interactive video features, and strong LMS/SCORM export capabilities in one integrated workflow. Its avatars feel more natural, and its multilingual rollout process is smoother.

Colossyan provides structured slide-based workflows and branching logic, but Synthesia matches or exceeds it on interactivity and LMS exports while delivering more fluid avatar realism.

If your priority is scalable, enterprise-ready training with polished presenters and strong LMS integration, Synthesia feels like the more mature option.

What is the verdict on Synthesia as a Colossyan alternative?

Synthesia is the stronger choice in many enterprise training scenarios.

It combines realistic avatars, robust interactivity, multilingual scaling, and SCORM/LMS readiness into a single workflow. For onboarding, compliance, enablement, and global corporate training, Synthesia delivers a more polished and comprehensive solution.

2. Creatify

URL: https://creatify.ai/

What is Creatify?

Creatify is a performance marketing–focused AI platform built specifically for UGC-style advertising videos.

The workflow is campaign-driven. Instead of building slide-based training modules, I input product details or a script, selected avatar styles, and generated multiple ad variations automatically. It’s optimized for speed, iteration, and conversion rather than formal instruction.

How realistic are Creatify’s avatars?

In my testing, Creatify’s avatars were impressively realistic for UGC-style content. Full-body motion, contextual gestures, and natural pacing gave them an influencer-style presence.

Compared to Colossyan’s more structured, presentation-style avatars, Creatify’s feel more dynamic and socially native. They are designed to capture attention rather than deliver formal instruction.

How expressive and natural are the avatars?

Expressiveness is a clear strength. I observed:

- Full-body movement

- Natural hand gestures

- Emotional presets (on supported avatars)

- Energetic delivery

Colossyan’s avatars feel more restrained and instructional. Creatify’s avatars are more animated and engagement-focused.

How good are the voices and lip-sync?

Lip-sync was stable in both English and Spanish tests. Creatify integrates multiple voice engines (including ElevenLabs), and voice quality varies depending on engine selection.

Compared to Colossyan’s neutral, instructional tone, Creatify’s higher-end voice options felt more energetic and marketing-oriented.

How strong is localization and multilingual support?

Creatify supports multilingual video output but does not include automatic translation. I had to prepare translated scripts manually.

Colossyan includes integrated in-editor translation, making it stronger for structured multilingual training workflows. Creatify can handle multilingual campaigns, but the process is less automated.

What use cases does Creatify excel at?

From my testing, Creatify performs best in:

- UGC-style social ads

- Performance marketing campaigns

- Product promotions

- Batch ad generation

- A/B testing creatives

What use cases does Creatify struggle with?

Creatify is less suited for:

- LMS-integrated training

- Structured compliance modules

- Interactive quizzes

- Long-form instructional content

Colossyan remains stronger for formal training environments.

What are Creatify’s strengths?

- Highly expressive UGC-style avatars

- Full-body motion

- Campaign-focused automation

- Batch creative generation

- Oriented toward marketing analytics

What are Creatify’s weaknesses?

- No automatic translation

- Limited structured training tools

- Not LMS-focused

- Credit-based pricing model

How does Creatify compare to Colossyan?

The difference in use case is clear: marketing vs. training.

Colossyan is built for structured learning, slide-based workflows, SCORM export, and interactive modules.

Creatify is built for ad performance and scalable UGC-style content.

If you need corporate training and compliance video, Colossyan is stronger. If you need high-converting marketing creatives, Creatify is the better alternative.

What is the verdict on Creatify as a Colossyan alternative?

Creatify is a strong alternative for teams focused on marketing and paid media, not structured learning.

It trades Colossyan’s LMS-ready features for expressive, conversion-oriented avatars and campaign automation, making it ideal for social and performance-driven content.

3. HeyGen

URL: https://www.heygen.com/

What is HeyGen?

HeyGen is a script-to-video AI avatar platform built for expressive digital presenters and scalable video creation.

HeyGen’s workflow revolves around inputting your script, choosing an avatar, selecting voices and layout, and generating output with options for translation — all in one cohesive editor. It feels optimized for creating engaging, professional videos rather than slide-defined training modules.

How realistic are HeyGen’s avatars?

In my testing, HeyGen’s avatars delivered strong realism. The Avatar 4 engine provided natural micro-movements, subtle head shifts, fluid posture, and expressive facial cues that enhance believability. Lip-sync accuracy remained strong, even across longer scripts.

Compared to Colossyan’s more formal and preset animations, HeyGen’s output feels visually richer and more lifelike, offering a stronger on-screen presence that works well beyond strictly formal training content.

How expressive and natural are the avatars?

Expressiveness is among HeyGen’s standout features. During testing I observed:

- Micro shoulder and torso movement

- Context-aware gestures

- Natural blinking and head shifts

This contributes to a dynamic and natural feel that goes beyond formal presentation or training videos. In contrast, Colossyan’s avatars tend to have more controlled, predictable gestures suitable for instructional modules but less compelling for narrative or marketing content.

How good are the voices and lip-sync?

HeyGen’s voice ecosystem is extensive, featuring multiple engines like ElevenLabs and Panda. It also offers advanced controls like Direct Voice (describe tone/style), Mirror Voice (match your own delivery), and Auto-enhance for pacing and emotion.

In English and Spanish tests, the voices sounded natural and expressive, and lip-sync was highly accurate. Compared to Colossyan’s more neutral, instructional voice delivery, HeyGen’s offerings feel richer and more versatile.

How strong is localization and multilingual support?

HeyGen supports over 70 languages and 175 dialects, with robust dubbing workflows. In my Spanish localization test, speech pacing was preserved and lip-sync remained accurate.

Colossyan supports ~80–100+ languages with in-editor translation, which feels smoother than many competitors. However, HeyGen’s dialect support and expressive voice tools give it an edge in nuanced delivery across languages.

What use cases does HeyGen excel at?

From my experience, HeyGen performs best for:

- Marketing and brand storytelling

- Social media content

- Multilingual video campaigns

- Expressive professional presentations

What use cases does HeyGen struggle with?

HeyGen is less suited for:

- LMS-integrated SCORM workflows

- Structured slide-based training modules

- Interactive quizzes integrated into video

- Formal compliance content

It focuses on expressive presenter content rather than tightly structured training systems.

What are HeyGen’s strengths?

- Highly expressive and natural avatars

- Fast rendering speeds

- Extensive voice customization

- Broad dialect and language support

- Business integrations (Zapier, HubSpot, etc.)

- Video Agent automation

What are HeyGen’s weaknesses?

- Translation workflow not fully one-click

- Premium features behind higher tiers

- Some lip texture artifacts on close inspection

How does HeyGen compare to Colossyan?

HeyGen and Colossyan serve different production priorities.

Colossyan focuses on structured, slide-based training and LMS-ready workflows with interactive elements like branching quizzes — ideal for HR and formal learning. HeyGen focuses on creating expressive, presenter-led videos that feel more alive and engaging, especially for marketing, internal comms, and narrative content.

In my testing, HeyGen’s avatars had stronger physical realism and richer voices, while Colossyan’s slide structure and LMS features offered advantages for compliance and education modules.

What is the verdict on HeyGen as a Colossyan alternative?

HeyGen is a compelling alternative to Colossyan for users who want expressive, high-quality AI presenter videos rather than slide-focused training modules.

It delivers more natural motion, broader language and dialect support, and richer voice options — making it ideal for communications, storytelling, and dynamic video use cases where avatar presence matters as much as structured content.

4. Camtasia

URL: https://www.techsmith.com/camtasia/

What is Camtasia?

Camtasia is a full-featured screen recording and post-production editor (desktop application, not cloud-based).

During testing, I used Camtasia primarily as a companion editing tool — importing AI avatars and clips, polishing timing, trimming segments, and adding captions or effects. There’s no native script-to-video avatar engine, but its editing environment is mature and professional.

How realistic are Camtasia’s avatars?

Camtasia itself doesn’t generate avatars, so realism depends entirely on the footage you bring into its timeline. In my testing, when I imported synthetic presenter clips from other tools (e.g., Synthesia or HeyGen), Camtasia preserved visual quality without degradation.

There is no built-in realism to evaluate: the output is only as realistic as the source material.

How expressive and natural are the avatars?

Again, Camtasia doesn’t produce avatars or their motion natively. Instead, it offers timeline editing to:

- Adjust clip timing

- Add strategic cuts

- Introduce zooms or camera effects

- Sync multiple audio tracks

This editing control can make avatar performances feel more dynamic and natural in the final cut, even when the original footage lacks expressiveness.

Expressiveness in Camtasia is therefore a function of editorial craft, not AI motion synthesis.

How good are the voices and lip-sync?

Camtasia does not synthesize voices or align lip-sync natively. However, it integrates well with voice tracks you import from AI generators.

In my tests, AI-generated voices from other platforms maintained perfect sync when placed on Camtasia’s timeline. Camtasia’s audio tools (noise reduction, level balancing, compression) helped refine the output and improve clarity.

Lip-sync precision itself is a property of the source clip — Camtasia simply preserves it.

How strong is localization and multilingual support?

Camtasia doesn’t provide automatic translation or dubbing workflows. To localize content, you must:

- Generate translated voice tracks externally

- Import them into Camtasia

- Replace or layer them on the timeline

- Add or edit subtitles manually

This is a fully manual process, whereas Colossyan includes integrated multilingual output.

Camtasia’s strength is in flexible subtitle editing once you have translated assets.

What use cases does Camtasia excel at?

Based on my workflows, Camtasia performs best in:

- Screen recording with post-production refinement

- Editing hybrid videos (screen capture + synthetic avatars)

- Caption creation and cleanup

- Multitrack audio enhancement

- Final production mastering

What use cases does Camtasia struggle with?

Camtasia is less suited for:

- Native AI avatar generation

- Immediate script-to-video workflows

- Structured slide-based training creation

- Automatic translation or dubbing

- Quick repurposing without manual editing

It functions as a complementary editing tool rather than a standalone avatar solution.

What are Camtasia’s strengths?

- Professional timeline editing with precise controls

- Advanced audio tools (noise reduction, compression)

- Intuitive trimming and transitions

- Strong caption editing interface

- Reliable export workflows

- Excellent for hybrid content refinement

What are Camtasia’s weaknesses?

- No native AI avatar generator

- No built-in voice synthesis or lip-sync engine

- No integrated translation or dubbing workflows

- Requires manual editing for localization

- Desktop install required (not cloud collaborative)

How does Camtasia compare to Colossyan?

Camtasia and Colossyan serve different stages of the video process.

Colossyan is a self-contained AI avatar video platform with integrated avatar generation, multilingual dubbing, and structured slide workflows — ideal for training and compliance modules.

Camtasia, in contrast, is a timeline editing environment that takes AI-generated assets and turns them into polished final productions. It doesn’t generate avatars but excels at refining and assembling them into seamless videos.

If you need automated creation and structured export — especially SCORM/LMS output — Colossyan is stronger. If you need fine-grained editing control and production polish, Camtasia’s editing environment is more powerful.

What is the verdict on Camtasia as a Colossyan alternative?

Camtasia is a strong alternative for users who want to elevate AI avatar footage with professional editing rather than create it from scratch in a slide-based system.

It doesn’t replace the easy automation that Colossyan provides, but it significantly enhances the creative and editorial quality of finished videos — making it ideal for teams looking to polish, refine, and produce broadcast-ready content from AI avatar sources.

5. Elai

URL: https://elai.io/

What is Elai?

Elai emphasizes automation-first video creation from documents, URLs, and PPT files. Its workflow takes structured content and converts it quickly into a video with minimal user effort — aiming for speed and efficiency rather than manual editing or deep customization.

The interface is straightforward: paste your content, let Elai auto-segment scenes, pick an avatar, choose a voice, and generate. It gets you to a finished video fast.

How realistic are Elai’s avatars?

In my testing, Elai’s avatars deliver functional realism. Lip-sync is accurate, facial motion is clean, and head and upper-body movements feel natural. However, avatars lack the richness and micro-expressive nuance seen in more advanced platforms.

Compared to Colossyan, Elai’s avatars feel slightly less tailored to formal training contexts: they are smoother than purely preset animations but not highly expressive.

Elai’s fast rendering contributes to output speed, but avatar detail feels more utilitarian than finely polished.

How expressive and natural are the avatars?

Expressiveness in Elai’s avatars is moderate. Movements are coherent and stable, but emotional nuance is limited. Gesture frequency is minimal, and facial variation tends to stay within a narrow range.

Compared with Colossyan’s structured formal motion, Elai feels more straightforward — a good fit for factual or instructional delivery, but not as engaging for narrative or emotionally rich content.

How good are the voices and lip-sync?

Lip-sync in my tests was technically accurate and stable for the full length of the script. Speech timing matched mouth movement well, and longer phrases did not exhibit drift.

Voice quality felt neutral and informational in tone — adequate for training or document conversion, but lacking depth or emotive variation.

Compared to Colossyan’s instructional voice tone, Elai’s delivery was similarly neutral, so both tools handle structured content well but are not ideal for expressive performance.

How strong is localization and multilingual support?

Elai supports 100+ languages and includes automatic translation within the platform. In my tests, the translation feature worked, though the UX felt less discoverable than some competitors and occasional credit errors appeared on the free plan.

Localization is effective, but the translation workflow requires more clicks and configuration compared with Colossyan’s smooth in-editor translation.

What use cases does Elai excel at?

Based on my testing, Elai performs best in:

- URL-to-video workflows

- PPTX-to-video conversion

- Document-to-video automation

- HR and learning content repurposing

- Rapid content generation at scale

What use cases does Elai struggle with?

Elai is less suited for:

- Highly expressive avatar-led storytelling

- Creative social content

- Nuanced emotional delivery

- Manual scene customization and cinematic control

It focuses on automation and speed rather than detailed production refinement.

What are Elai’s strengths?

- Very fast rendering

- Strong automation from documents, URLs, and PPTX

- Interactive video modules and clickable elements

- Selfie Avatar capture

- SCORM-ready export

What are Elai’s weaknesses?

- Avatar realism lacks expressive depth

- Translation UX feels buried

- Hair compositing issues in some clips

- Voice delivery is neutral and flat

- Reliability quirks at lower plans

How does Elai compare to Colossyan?

Elai and Colossyan offer different kinds of structural automation.

Colossyan is a slide-based corporate training platform with integrated LMS export, structured tutorials, and direct in-editor translation. Its workflow suits formal modules and compliance materials.

Elai is an automation-first video generator that rapidly turns documents and structured content into videos. It feels faster and simpler but sacrifices expressive avatar nuance and detailed customization.

If your priority is rapid automation of document content into video, Elai is efficient. If your priority is training-centric, structured slide modules with LMS integration, Colossyan offers more polished outputs.

What is the verdict on Elai as a Colossyan alternative?

Elai is a viable alternative to Colossyan if you need fast, automated video creation from documents rather than formal training modules.

It doesn’t provide the same level of structured training features or deep expressiveness, but it delivers quick turnaround and good automation — ideal for teams that prioritize speed and volume over formal structuring.

6. D-ID

What is D-ID?

D-ID is a lightweight AI avatar generator built around turning still images into talking-head videos.

The workflow is simple: upload or select a face, input a script, choose a voice, and generate. It’s minimal compared to Colossyan’s scene-based editor and interactive features.

How realistic are D-ID’s avatars?

D-ID’s realism is heavily dependent on the input image. Lip-sync was generally accurate, but upper-body movement is limited since avatars are primarily head-and-shoulders animations.

Compared to Colossyan’s structured avatar scenes with more defined posture and gesture patterns, D-ID feels more static and less fully composed.

How expressive and natural are the avatars?

Expressiveness is limited. Head motion and blinking are present, but gesture depth is minimal. Emotional nuance is constrained by the single-image animation approach.

Colossyan’s avatars, while not highly expressive, feel more structured and stable in training scenarios.

How good are the voices and lip-sync?

Lip-sync in my tests was accurate, and voice quality was serviceable. However, voice delivery felt relatively neutral, and performance depth was limited.

Compared to Colossyan’s structured instructional voice tone, D-ID’s output feels more basic and less optimized for formal learning contexts.

How strong is localization and multilingual support?

D-ID supports multiple languages via TTS, but there is no integrated slide-based translation workflow.

Colossyan’s in-editor translation and structured multilingual pipeline are stronger for enterprise training use cases.

What use cases does D-ID excel at?

From my testing, D-ID performs best in:

- Quick talking-head video generation

- Turning photos into speaking avatars

- Lightweight explainer clips

- Simple social or website content

What use cases does D-ID struggle with?

D-ID is less suited for:

- LMS-integrated training

- Structured slide-based content

- Interactive quizzes

- Multi-scene learning modules

- Multi-avatar dialogue scenes

Colossyan is significantly stronger in structured learning scenarios.

What are D-ID’s strengths?

- Fast generation

- Simple workflow

- Image-to-avatar functionality

- Lightweight interface

- Good for quick presenter clips

What are D-ID’s weaknesses?

- Limited expressiveness

- Minimal scene control

- No structured training workflow

- No interactive elements

- Not LMS-focused

How does D-ID compare to Colossyan?

D-ID and Colossyan serve very different needs.

Colossyan is a structured training platform with slide workflows, translation, and interactive export.

D-ID is a lightweight talking-head generator optimized for speed and simplicity.

If you need formal training content with LMS export and structured modules, Colossyan is stronger. If you need fast, simple talking-head videos from a still image, D-ID is the more straightforward alternative.

What is the verdict on D-ID as a Colossyan alternative?

D-ID is a viable alternative only if your priority is speed and simplicity, not structured learning.

It trades Colossyan’s training capabilities for quick avatar generation, making it suitable for lightweight explainer content but not enterprise education workflows.

Kyle Odefey is a London-based filmmaker and Video Editor at Synthesia. His content has reached millions across TikTok, LinkedIn, and YouTube, even inspiring an SNL sketch, and has been featured by CNBC, BBC, Forbes, and MIT Technology Review.

Frequently asked questions

How does Synthesia compare to other AI video tools for interactive training and LMS/SCORM export?

Synthesia stands out for interactive training through its comprehensive suite of features designed specifically for corporate learning environments. The platform offers built-in interactivity elements like quizzes and branching scenarios, combined with native SCORM export capabilities that ensure seamless integration with your existing LMS. Unlike many AI video platforms that treat training as an afterthought, Synthesia provides detailed analytics dashboards to track learner engagement and progress, making it easier to measure training effectiveness and iterate on content.

The multilingual video player sets Synthesia apart by automatically serving content in the viewer's preferred language without requiring separate video versions. This capability, combined with support for 160+ languages and 139 translation languages, enables global organizations to deliver consistent training experiences across all regions while maintaining a single source of truth for their content. These features transform video-based training from a static experience into an engaging, measurable learning journey that scales with your organization.

Can I try Synthesia for free before switching from my current tool?

Synthesia offers a free AI video generator that allows you to create and experience the platform's capabilities without any financial commitment or credit card requirement. This free option lets you test core features including avatar quality, voice synthesis, and the editing interface, giving you hands-on experience with how the platform works for your specific training needs. You can create up to 3 minutes of video content monthly, which provides enough capacity to evaluate whether the platform meets your requirements for avatar realism, ease of use, and output quality.

The free trial experience includes access to 9 AI avatars and 2 stock avatars across 140+ languages, allowing you to test localization capabilities and see how content performs in different languages. This risk-free approach ensures you can thoroughly evaluate how Synthesia compares to your current solution before making any migration decisions. By testing with your actual training content and workflows, you can make an informed choice about whether the platform's features align with your organization's video creation and training delivery needs.