What are the best alternatives to HeyGen?

- Synthesia: Best for interactive training, enablement, and internal corporate communication

- Creatify: Best for UGC-style social ads and performance marketing videos

- AI Studios: Allows you to manually control avatar gestures

- Veed: Strong timeline editing and content repurposing for social teams

- Elai: Offers super-fast video rendering

- Colossyan: Built mainly for the training use case

HeyGen is a popular AI avatar generator that offers expressive avatars that can give natural performances with strong lip sync. It's most commonly used for short marketing or branded videos and can be used to generate polished avatar videos quickly and easily.

However, longer video projects can expose reliability issues, including stuck renders and timeline glitches. Other common user complaints include unclear pricing and unhelpful support.

As a result, it might be worth exploring alternatives.

Here are the best HeyGen alternatives. For each one, I've tried to flag its specific selling point and the use case it is best suited to.

How I tested these HeyGen alternatives

I tested each of these AI avatar platforms using exactly the same script in both English and Spanish in order to ensure a fair and consistent comparison.

I evaluated each platform both on its own and specifically against HeyGen. On average I spent about 1 hour testing each tool.

I focused on the criteria that matter most to people working with AI avatars, which in my opinion are avatar realism, lip sync accuracy, localization quality, overall stability, and how nice the platform is to use.

HeyGen alternatives compared

1. Synthesia

URL: https://www.synthesia.io/

Quick summary

- Avatar realism: High realism

- Lip-sync accuracy: High accuracy

- Voice quality: High

- Best use case: Training, enablement, and enterprise comms

- Biggest limitation: Slower rendering

My experience

Pros

As you can see in my test video above, Synthesia's AI avatars have a very high level of realism. The combination of natural facial expressions, subtle head and hand movements, and even interesting micro-expressions results in a convincing avatar performance.

I think the lip sync looks very accurate, and it stayed stable across multiple video generations, even when I tested longer sentences.

The Synthesia avatar library has more than 240 stock avatars to choose from, many of which you can customize (e.g. change their clothes or the background).

In terms of custom avatars, the platform offers the ability to generate synthetic avatars based on a prompt, or you can make an avatar that looks like you or someone else (with their permission via a recorded consent video) using an image, a webcam recording, or by booking a studio session.

Synthesia's AI voices sound super natural, with pacing and intonation that actually sound like how people speak in real life. I only tested English and Spanish dialogue, but the platform offers a much wider range of over 160 languages along with the ability to clone your voice.

There is an option to do a one-click translation of any video made within Synthesia. For translation of existing videos (made outside of Synthesia) the platform also offers AI dubbing.

Synthesia offers two main routes for creating a video. You can upload a document, paste a URL, type a script, or input a prompt to create a video via the Assistant feature, which allows you to get a quick start and then iterate on your video via a chatbot.

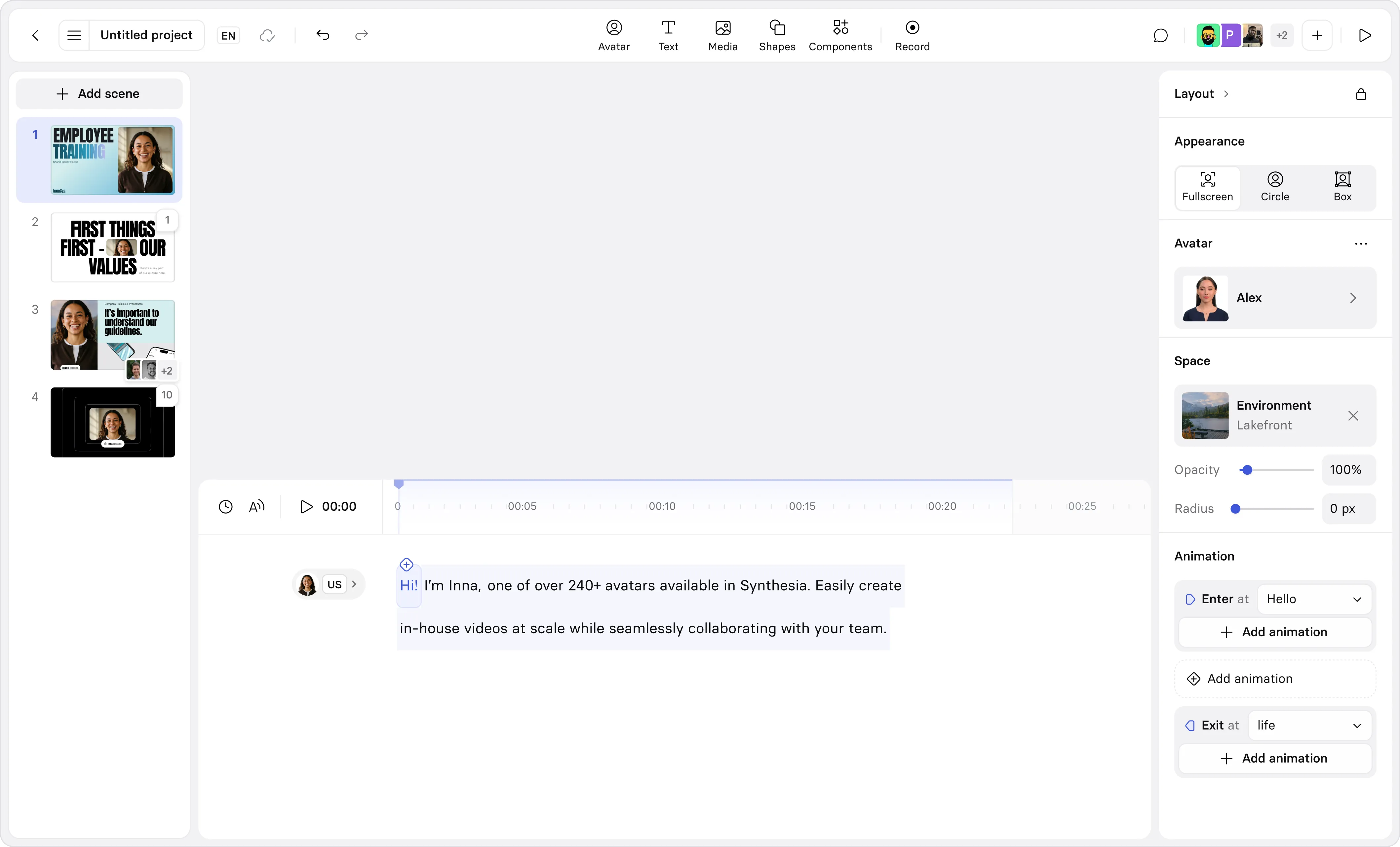

The other option is to use Synthesia's video editor, which feels a lot like PowerPoint since it uses a similar slide-based layout. While the editor is script-based, there's also a timeline view. Regardless of which route you choose, you'll probably end up using one of the many video templates which help give your video structure and a nice design that you can easily adjust to match any brand kit.

It's worth also mentioning Synthesia's AI Playground, which gives you free access to AI video generation models like Veo 3 and Sora 2, as well as image generation models like FLUX.2. You can then download these assets or use them as B-roll in your avatar-driven videos.

One really cool feature is the ability to 'direct' your avatar to take actions in scenes that you prompt, which really helps to widen the range of videos that you can make with AI avatars. It moves the whole format beyond 'talking head' videos and towards something more dynamic.

Synthesia supports interactivity with options to add knowledge checks, clickable buttons, and branching scenarios to your video.

Cons

I think the most obvious disadvantage of Synthesia is that the avatar expressiveness feels quite controlled. This makes sense for Synthesia's target use cases, which tend to be very enterprise-focused (e.g. training, enablement, and corporate communications).

However, if you want to generate avatar-driven videos for less formal use cases, such as UGC-style or product-driven avatar videos for ads on social media, then Synthesia isn't a great fit.

Another downside with Synthesia is that it only supports Chrome and Microsoft Edge - so there is no Safari support. I use Safari as my default browser on my Mac, so this was a bit of a pain.

Lastly, I'd flag Synthesia's video rendering times as being slightly longer than most of the other options on this list.

My verdict

If you are using AI avatars for training, enablement, or internal comms in an enterprise context and value consistency, control, and multilingual delivery, then Synthesia is probably the best option.

However, it's probably not the best option if you are looking for less structured social media-style AI avatar video content for performance marketing workflows.

How does Synthesia compare to HeyGen?

I'd say that Synthesia's avatar realism is slightly better than HeyGen's based on the test videos - especially the hand movement, but you can look at them yourself and decide. I think the Synthesia voices have more natural intonation too.

Synthesia is typically favored for enterprise and training use cases (it's used by over 90% of the Fortune 100), since it offers more enterprise-ready features such as stronger collaboration and review tools, more analytics and assessment controls, and generally stronger compliance and governance features.

HeyGen is a good fit for generating high-quality avatar videos for general marketing purposes.

One big area of overlap is that both platforms offer comprehensive AI video translation tools.

2. Creatify

URL: https://creatify.ai/

Quick summary

- Avatar realism: High realism

- Lip-sync accuracy: High accuracy

- Voice quality: High

- Best use case: UGC-style performance ads

- Biggest limitation: No automatic translation

My experience

Pros

I really liked the UGC-style avatars that I was able to generate with Creatify. In my test video above you can see that the level of avatar realism is super high with really solid lip sync.

I also found Creatify's avatars to have more emotion than most of the other main avatar platforms, with changes in intonation and facial expressions that work really well for UGC-style performance marketing ads.

The platform's avatars offer full-body expressiveness, and a selection of avatars in the library come with more than 20 emotional presets. In my opinion all these features add up to create avatar videos that are borderline indistinguishable from the UGC video content that you would find on any social media network.

I tested generating my video in Spanish and the avatar performance was still very high quality, so my impression was that Creatify would be great for generating video ads in other languages too. The rendering of the Spanish version of my video was also super quick.

Creatify offers a streamlined experience for generating your own custom avatars similar to how you would in Synthesia - you can upload a photo and some audio and generate a really strong avatar in your likeness.

The platform offers a lot of features specifically built for the performance ad use case, including tools for batch ad creation, built-in A/B testing tools, AI music generation for your ads, and pretty comprehensive ad analytics (to track metrics like ROAS, CTR, and others). It even lets you publish your Creatify-generated ads directly to the social networks of your choice (e.g. Meta, TikTok, etc).

Cons

Creatify is a fantastic platform, but if you're not using avatars for the specific use case of UGC-style performance marketing ads, then it doesn't make much sense to use it. The platform contains a lot of features and complexity that you won't need. The avatars are also very much geared to that use case, with a vertical UGC ad style that really isn't suitable for structured corporate communication or longer-form video.

My verdict

I'd choose Creatify if I wanted to make and test high volumes of UGC-style AI avatar video ads across multiple ad platforms. I wouldn't use Creatify for corporate L&D, structured training content, or long-form multilingual video production.

How does Creatify compare to HeyGen?

I'd say that Creatify's avatar realism is slightly better than HeyGen's, although it has a specific style that suits certain use cases, whereas HeyGen's is more general purpose.

Creatify is the obvious choice if you want to use AI avatars for UGC-style performance marketing ads on social media. The whole Creatify platform is geared to that use case in a way that HeyGen isn't.

The avatars have a UGC-style realism, and the platform offers a variety of tools that HeyGen can't match for creating, testing, launching, and scaling ads across multiple social networks.

3. AI Studios

URL: https://www.aistudios.com/

Quick summary

- Avatar realism: Medium realism

- Lip-sync accuracy: Low accuracy

- Voice quality: Low

- Best use case: Social, product, and explainer videos

- Biggest limitation: Avatar realism is weaker

My experience

Pros

AI Studios offers a wide variety of AI tools in one platform, including AI avatars, AI dubbing, access to a range of AI video models (like Sora, Veo, and Kling), AI image generation, and deepfake detection. If you work a lot with AI video and need a number of these tools, then AI Studios offers pretty good value.

If you're interested in AI dubbing you should check out my roundup of the best AI video translators where I take a look at AI Studios and other AI dubbing tools.

AI Studios has a huge library of over 2000 stock avatars, as well as options for studio, photo, product and 3D avatars. The platform also supports multi-avatar scenes, and has an interesting manual gesture scripting feature which gives you a lot more control over avatar behavior than the other platforms on this list.

As with Synthesia and HeyGen, there's a variety of input flows that you can use to create a video from existing content, including script-to-avatar, URL-to-video, and doc-to-video flows. The AI Studios flows don't produce results as polished as those in Synthesia, but they still produce a robust output quickly and easily.

Cons

My biggest issue with AI Studios is that the avatar realism just isn't up to the level of the top-tier avatar generators. If you look closely at my test video above, you'll spot a slight artificiality in the eyes. There are also quite a few moments in the test video where the lip sync is delayed, which ruins the immersion for me.

The free AI voices used by AI Studios are very low quality, although if you upgrade to the more expensive tiers you will then get access to higher-quality ElevenLabs voices.

The AI Studios platform is also more complex than the others on this list, but I put this mostly down to the wide variety of functionality that it offers.

My verdict

I think AI Studios is a decent option for generating AI avatars. While the realism doesn't quite match the top-tier avatar generators, I think the realism is good enough for a wide variety of use cases, with the caveat that you should definitely upgrade to a paid plan with better voices.

How does AI Studios compare to HeyGen?

I think HeyGen's avatars offer a higher level of realism with more emotional fluidity, superior lip sync, and better-quality voices than AI Studios.

AI Studios offers a much bigger stock avatar library, a unique manual gesture scripting feature, and a broader variety of integrated AI video tools to play around with.

I'd probably stick with HeyGen over AI Studios for most avatar video use cases, unless I specifically needed one of the many other AI video tools that come bundled together in the AI Studios platform.

4. Veed

URL: https://www.veed.io/

Quick summary

- Avatar realism: Medium realism

- Lip-sync accuracy: Medium accuracy

- Voice quality: Average

- Best use case: Video projects that need both editing and avatars

- Biggest limitation: Strange avatar movements

My experience

Pros

Veed is a great timeline-based editor that also offers AI avatars. Veed has very fast video rendering and a large number of useful video editing tools.

It also has a huge AI Playground that gives you access to a bunch of different AI video models including Kling, Sora, Veo, and Seedance, as well as a number of AI image generation models.

Cons

I think the Veed avatar in my test video above has very limited expressiveness, with an emotional depth that is notably lower than the top-tier AI avatar generators. I also spotted that the avatar eye contact occasionally drifts.

Veed also has quite a small avatar library with only 60 stock options, and the custom avatar quality isn't great either.

If you're only interested in generating avatar videos and don't need all of the other functionality that Veed provides, then the UI of the Veed platform might feel a bit overwhelming.

Lastly, there's no free option for Veed's AI dubbing tool, whereas Synthesia, HeyGen, and AI Studios all offer a freemium version of theirs.

My verdict

I think Veed is great for social media marketing, UGC creators, and marketers needing content repurposing tools, who also need some basic AI avatar functionality. It's definitely not the best option if you want highly realistic AI avatars.

How does Veed compare to HeyGen?

HeyGen, like Synthesia and Creatify, is an avatar-focused platform with expressive, lifelike presenters. Veed is primarily an AI-powered, timeline-based video editor with an AI avatar feature added on.

That results in a pretty clear set of differentiators. If your priority is avatar quality, then stick with one of the avatar-first platforms. If you value B-roll control, video composition, editing, and repurposing, and avatar realism is a secondary concern, then Veed is a great choice.

5. Elai

URL: https://elai.io/

Quick summary

- Avatar realism: Medium/low realism

- Lip-sync accuracy: Medium accuracy

- Voice quality: Average

- Best use case: Content repurposing

- Biggest limitation: Robotic AI voices

My experience

Pros

The first thing I noticed while testing out Elai was that the video rendering speed is extremely fast. I generated the above video in only 1 minute and 34 seconds, so it's definitely one of the fastest AI video tools I've ever used.

The Elai platform offers a range of document-to-video automation flows that give you plenty of options for converting existing content into avatar-driven videos.

I tried the workflows for URL-to-video and PowerPoint-to-video and they did a pretty good job of preserving the key points of my original content, although the videos generated would definitely need a bunch of editing.

Like Synthesia and Colossyan, Elai also offers a number of interactive learning features including clickable links, branching logic, Q&A sessions, and SCORM export support, so I think it hits at least the minimum requirements for the L&D and training use case.

Cons

Looking at my test video, I think it's clear that Elai's avatar realism and expressiveness aren't that great. I can see video compositing issues around the avatar's hair which make the avatar look somewhat 'cut out' from the background.

The quality of the AI voices is also underwhelming. I found the delivery in my English test video to be emotionally flat, and it was even more robotic in the Spanish version.

Elai doesn't offer much in terms of B-roll options, since the platform doesn't have any integrated AI video generation models like Sora or Veo.

During my testing I experienced a bug when trying to generate my Spanish test video. The video generation kept failing despite the fact that I definitely had enough credits left. I'm not sure why this happened, but it seems like there are some video generation reliability issues.

My verdict

Elai has useful document-to-video workflows and some handy features for training and L&D, but it is let down by a low level of avatar realism and poor-quality voices.

How does Elai compare to HeyGen?

Elai has much weaker avatar realism than HeyGen. I don't think there is an obvious use case for which I would choose to use Elai over the other top-tier AI avatar generation platforms (Synthesia, Creatify, or HeyGen) in this list.

6. Colossyan

URL: https://www.colossyan.com/

Quick summary

- Avatar realism: Low realism

- Lip-sync accuracy: Medium accuracy

- Voice quality: High

- Best use case: Corporate training

- Biggest limitation: Weak avatar expressiveness

My experience

Pros

Colossyan, like Synthesia, targets enterprise avatar use cases, with a particular focus on L&D and training.

There's lots of support for interactivity - you can add quizzes and branching scenarios to your avatar videos, there are more advanced features like completion analytics and LMS integrations, and you can also export your videos to your LMS via SCORM.

The Colossyan platform uses a slide-based editor that is similar to PowerPoint with a clean and easy-to-use interface. It lets you do limited exports in 4K quality on their free plan, which is very generous.

The AI voices in Colossyan in general are of a high standard.

Cons

While Colossyan's avatar quality has improved a lot recently, it's still not good enough in my opinion. Looking at my test video above I think that the avatar's body movement is rigid and the gestures are often very unnatural and don't align with the tone of my script.

During my testing I had some issues with rendering. Both the English and Spanish versions of my video got stuck at 79% during the rendering process, so there seems to be an issue with reliability there.

I also noticed some minor audio artifacts and a slightly more robotic tone in the Spanish version when compared to the English, so it feels like you might need to prepare for a reduction in quality if you plan on using Colossyan for multilingual videos.

Lastly, I found the supporting media assets and B-roll options to be a lot more limited in Colossyan. There are no integrated AI video generation models for generating B-roll, and the music library is pretty limited too.

My verdict

Colossyan is very geared towards training and L&D teams. However, I feel like the avatar realism is poor enough that it would be a distraction for me if I were a learner trying to complete a Colossyan-made learning module, so I don't think I can recommend it for that use case.

How does Colossyan compare to HeyGen?

I think that it's clear from the test videos that HeyGen's avatars are more realistic than Colossyan's.

However, the Colossyan platform is better aligned with training and L&D use cases than HeyGen. Both platforms offer features like SCORM exports and interactivity, but Colossyan also offers features like completion analytics and a number of LMS integrations, which probably makes it a better fit for structured learning.

I think that if I were looking for avatar-driven AI video for training purposes, I'd probably choose Colossyan over HeyGen, whereas I'd prefer HeyGen over Colossyan for broader marketing and creator use cases.

Kyle Odefey is a London-based filmmaker and Video Editor at Synthesia. His content has reached millions across TikTok, LinkedIn, and YouTube, even inspiring an SNL sketch, and has been featured by CNBC, BBC, Forbes, and MIT Technology Review.

Frequently asked questions

What is the best HeyGen alternative for training videos and L&D teams?

Synthesia is the strongest option for L&D use cases. It's used by over 90% of the Fortune 100 and offers robust interactivity and translation features, as well as SCORM exports, LMS integrations, completion analytics, and branching quizzes. Colossyan is also worth considering, but is let down by its weak avatar realism.

What is the best HeyGen alternative for UGC-style ads?

Creatify is the clear choice for UGC-style ads, since the platform is built entirely around this use case. Creatify's avatars are very realistic and match the style of real UGC content. Creatify also offers a lot of performance marketing functionality including batch ad creation, A/B testing, ad analytics, and direct publishing to social media ad platforms.

What is the best free HeyGen alternative?

All the platforms on this list offer free plans, although they come with limitations. Synthesia offers 10 minutes of free video generation and AI dubbing per month, as well as free AI video generation of up to 25 assets in their AI Playground.

What is the best HeyGen alternative for AI video translation?

Both Synthesia and AI Studios offer free AI dubbing tools which you can use to translate an existing video into a different language while preserving the original speaker's voice and lip sync. Check out this roundup of the best AI video translators to see how they compare.