5 L&D Trends Shaping 2026 (And How to Act on Them)

Support skill development in the flow of work with AI video.

Every L&D event I've attended this year has been shrouded in AI. It's inescapable. Even conversations that "aren't about AI" end up with someone talking about AI.

I get it.

Executives report anxiety permeating their organizations about AI, whether it stems from concern that AI will take jobs or that AI mandates will lead to efficiency traps.

L&D managers and instructional designers express similar concerns, focusing on the quality of AI outputs and the perception of AI displacing L&D's relevance.

These shared concerns are understandable. They prompt questions like, if employees are just going to LLMs to "learn", what is the role of L&D in the workplace in 2026?

That's something I have a few thoughts on. Ultimately, it all comes back to people.

Every trend I'm seeing can be distilled into how we can better equip our people.

Not people as some platitude in a company's mission statement. But people as the ones making the judgment calls on how we work, learn, and grow.

Trend 1: Anchoring technology acquisition in human connection

Do we really need more technology?

That’s probably a question you think about whenever you see something shiny and consider adding it to your L&D tech stack.

We’re all inundated with new technology that we have to or should use. (I know, I know, this is coming from someone working at a tech company.)

Increasingly, however, I’m seeing L&D practitioners reorienting their approach to technology acquisition by asking:

How am I going to use this technology to build connections at work?

That's the reframe Christine Armstrong proposed at Learning Tech (and that I'm starting to see across decision-making). Here’s why.

Every year, the Edelman Trust Institute conducts a survey and publishes its annual Trust Barometer. This year, it found an increased retreat into insularity, which is widening the gaps in trust, even in the workplace. As people retreat into the comfort of familiarity, asking them to adopt new technology is an uphill battle.

This data points to something important for L&D leaders: new technology is being met with skepticism, especially when it is an AI tool. You need employees to trust that your tooling investment is going to improve the quality of their work, but more importantly, that it is going to help them build better relationships at work.

How to act on this trend

What do I mean by build better relationships? Let’s take a use case you’re probably familiar with: onboarding. Let’s say you’re responsible for running a global onboarding program. You’ve received feedback in onboarding surveys that new hires struggle to connect with their teams across functions and regions. So how do you help them connect at scale?

Maybe you turn to technology for support, whether that’s to design and implement an onboarding buddy program or for a more lightweight randomized coffee chat.
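To make the lightweight option concrete, here is a minimal sketch of a randomized coffee chat pairing. It assumes you can export a list of new hires and volunteer colleagues with a region for each. The `Person` record and the `pair_for_coffee` function are hypothetical names for illustration, not part of any particular tool.

```python
import random
from collections import namedtuple

# Hypothetical record: just a name and a region for each person.
Person = namedtuple("Person", ["name", "region"])


def pair_for_coffee(new_hires, colleagues, seed=None):
    """Pair each new hire with a randomly chosen colleague.

    A colleague from a different region is preferred; if none is left,
    any remaining colleague is used. Each colleague is matched at most once.
    """
    rng = random.Random(seed)
    available = list(colleagues)
    rng.shuffle(available)  # randomize who gets picked first

    pairs = []
    for hire in new_hires:
        # Prefer someone outside the new hire's region.
        match = next((c for c in available if c.region != hire.region), None)
        if match is None and available:
            match = available[0]  # fall back to anyone still unmatched
        if match is not None:
            available.remove(match)
            pairs.append((hire, match))
    return pairs


new_hires = [Person("Ana", "EMEA"), Person("Ben", "APAC")]
colleagues = [Person("Chi", "APAC"), Person("Dee", "EMEA"), Person("Eli", "AMER")]

for hire, match in pair_for_coffee(new_hires, colleagues):
    print(f"{hire.name} ({hire.region}) meets {match.name} ({match.region})")
```

The pairing logic is the easy part. The point of the sketch is that the design goal (connection across regions) is written into the rule, which is what you would describe to stakeholders.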

When you evaluate technology through this lens of building connection, you are shifting the conversation from something employees have to use to something that works for them. That’s what earns trust.

Tip: When you're looking for buy-in from stakeholders on a new tool, don't come in with the shiny new tool. Come in with the problem you're solving. For example, "We’re helping new hires build deeper connections to their colleagues so they can solve problems more easily, stay more engaged, and drive business impact."

Trend 2: Prioritizing precision over breadth when designing with AI

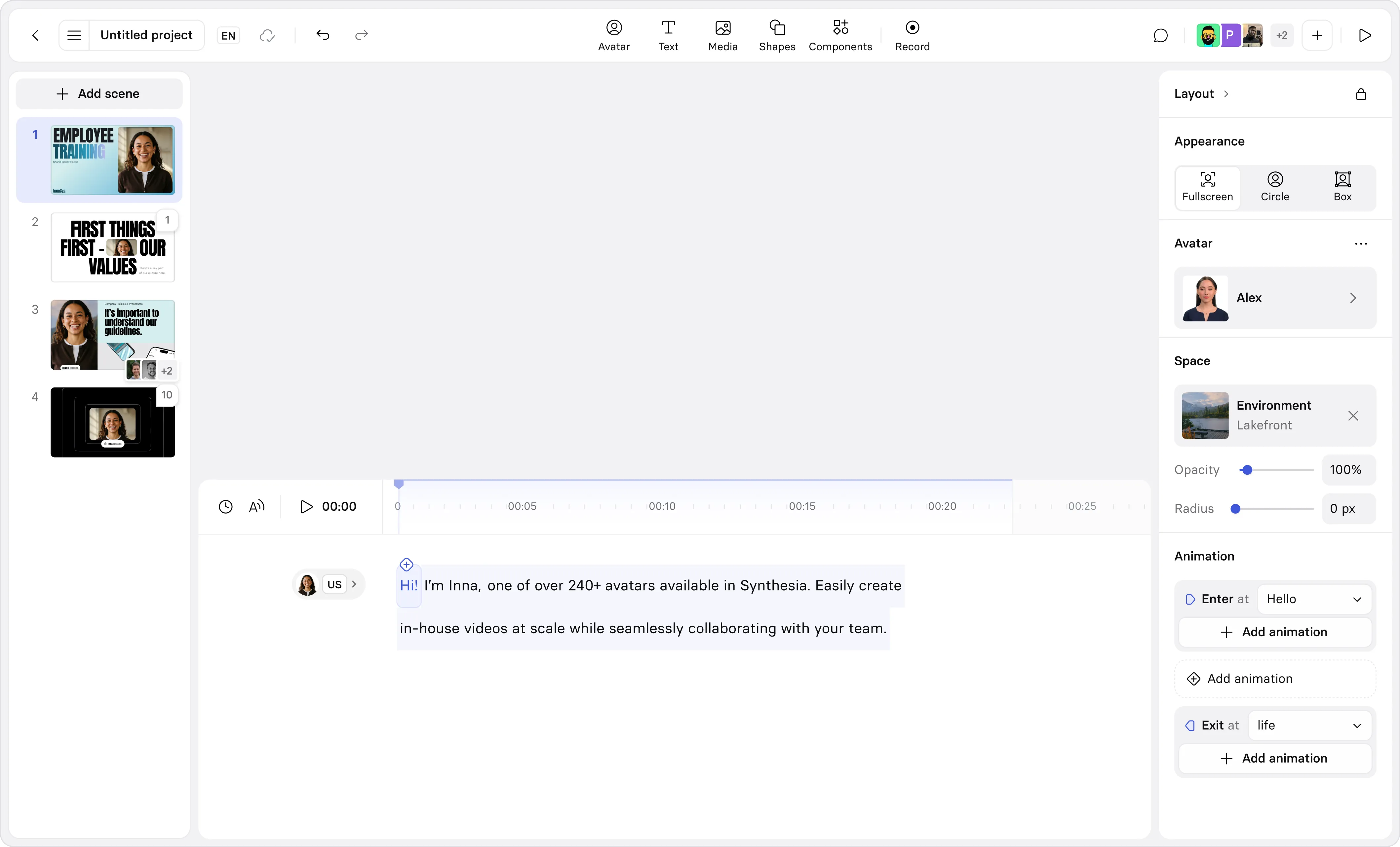

In our AI in Learning & Development Report (2026), 84% of over 400 L&D practitioners said they’re using AI to speed up content production. It is easier than ever to create content at scale, especially with tools like Synthesia’s AI video generator.

Unfortunately, the ability to create content so easily is leading to AI slop, and something I call the readiness debt. We’re seeing a deluge of content factories, churning out new training with little time for evaluation and measurement of learning objectives.

That’s why teams are pausing to reevaluate how they design with AI, prioritizing precision over breadth. In one example I heard at a conference, a medical enterprise shared how they reimagined their manager development program.

After reviewing post-program feedback, they realized managers were still struggling with difficult conversations, like delivering critical feedback or defusing conflict. So instead of delivering more content, they prioritized building a program focused on practice and feedback loops (with AI). They had one problem, one learning intervention, and precise measurement goals. That’s what I mean by precision over breadth.

My colleague, Elly Henriksen, an instructional designer, put this best: the difference between good and great is human. You have to include quality guardrails in your design loops, which means identifying where humans retain agency in the workflows.

This will likely slow you down. But that’s intended. Targeted learning interventions that have clear measurement goals will be more impactful than generic content delivered at scale.

How to act on this trend

Retaining your agency in an AI-assisted workflow isn’t about adding more unnecessary work. Instead, it is about serving as a gatekeeper of judgment.

Robert Blumofe, an MIT-trained computer scientist, explained why this is so essential in a way that resonates with me. He writes that we aren’t adding a human-in-the-loop to create friction, because that’s just “solving an AI weakness with a human weakness.”

For L&D that means we have to design workflows where humans align on things like the learning objectives, the audience, and how to measure success. It also means giving feedback to your AI tools when needed to maintain the high bar.

💡Tip: I break down how to set and uphold standards in depth in this post on AI in instructional design.

Trend 3: Owning your organization’s knowledge architecture

Most L&D teams have access to an organizational gold mine: institutional knowledge. Unfortunately, that knowledge is often siloed in an LMS or another tool where it can't be surfaced or contribute to the AI knowledge layer your organization is trying to build.

Earlier, I mentioned how I heard L&D leaders fretting that LLMs would make them obsolete. But L&D has an advantage over LLMs, and that's knowing how to translate expertise into structured knowledge.

As organizations struggle to identify who owns what when it comes to AI tools, L&D has an opportunity to take ownership over this crucial knowledge architecture. Here’s why.

When organizations acquire an AI tool, they often integrate it into their tech stack, allowing it to search across tools like messaging platforms, intranets, project management tools, and more. Nonetheless, these tools struggle to surface verified, relevant, and contextual knowledge. You know, things that an SME would rubber stamp in a content review.

How to act on this trend

There are four common types of knowledge organizations care about:

- Tacit knowledge: knowledge gained from personal experience, like how decisions actually get made, what a customer really cares about, or what good looks like in your organization

- Internal proprietary knowledge: the knowledge that every corporate slide deck warns you not to share

- Verified open source knowledge: publicly available information your organization has vetted and endorsed

- Publisher-licensed knowledge: the knowledge you're already paying to access, like content libraries

While there are different methods to document this knowledge, they all share one common workflow: identify a source, verify its accuracy, and then determine whether it is appropriate for specific audiences (with the proper context). Essentially, you are conducting a needs analysis.

For tacit knowledge, that might look like building a library of lunch and learns or post-mortem sessions with recorded transcripts. Or partnering with HR Business Partners to transform exit interviews into knowledge collection opportunities. These can also be automated through conversational AI agents that interview and capture expertise before it walks out the door.

With internal proprietary knowledge, your job is curation: auditing what already exists, identifying what's current, and retiring anything outdated before it gets ingested into an AI tool and becomes a liability.

With verified open source and publisher-licensed knowledge, L&D acts as the editorial layer, ensuring what's in your knowledge base is credible, contextually relevant, and accessible to your AI tools.
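The shared workflow (identify a source, verify it, confirm the audience, retire what is outdated) can be sketched as a simple triage step that runs before anything reaches an AI tool. This is an illustration under stated assumptions, not a prescribed schema: the `KnowledgeItem` fields, the `triage` function, and the one-year review window are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class KnowledgeItem:
    title: str
    kind: str                       # "tacit", "internal", "open", or "licensed"
    verified_by: Optional[str]      # SME who confirmed accuracy, or None
    last_reviewed: Optional[date]   # None if it has never been reviewed
    audiences: tuple = ()           # who this content is appropriate for


def triage(items, today, max_age_days=365):
    """Split items into those ready for ingestion and those needing review.

    An item is ready only if it is verified, was reviewed within
    max_age_days (an assumed threshold), and has at least one audience.
    """
    ready, needs_review = [], []
    for item in items:
        is_verified = item.verified_by is not None
        is_current = (
            item.last_reviewed is not None
            and (today - item.last_reviewed).days <= max_age_days
        )
        has_audience = len(item.audiences) > 0
        if is_verified and is_current and has_audience:
            ready.append(item)
        else:
            needs_review.append(item)
    return ready, needs_review


items = [
    KnowledgeItem("Escalation playbook", "internal", "J. Rivera",
                  date(2025, 9, 1), ("support",)),
    KnowledgeItem("Old pricing deck", "internal", "A. Chen",
                  date(2023, 1, 1), ("sales",)),
    KnowledgeItem("Exit interview notes", "tacit", None, None, ()),
]

ready, needs_review = triage(items, today=date(2026, 1, 15))
print([i.title for i in ready])
print([i.title for i in needs_review])
```

Anything in the second list goes back to a human reviewer, either to verify and tag it or to retire it.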

Trend 4: Grounding your skills strategy in behavior change

There's a lot of buzz about how organizations need upskilling or reskilling strategies to stay competitive, especially with AI. I've read at least half a dozen articles proclaiming that if L&D just focused on skills, all of their problems would be solved.

Clearly, the authors of those articles have never had to build a skills taxonomy. If they had, they would know how hard it can be to get cross-functional buy-in.

And that's before you've even gotten to the truly hard part: developing an objective way to document and train skills by focusing on key situations and behaviors, and anchoring learning experiences in demonstrating those behaviors through practice and feedback.

L&D practitioners who are successfully grounding their skills strategy in behavior change are focusing on two things:

- The behavior they're trying to model

- How that behavior impacts the business's bottom line

Both of those things matter for the same reason: they make the conversation immediately relevant to whoever you're talking to because you're speaking their language. And they keep the work grounded in something measurable, which is the only way a skills strategy survives long enough to matter.

How to act on this trend

Identify roles where leaders already make judgment calls about readiness or progression (e.g., promoting someone from an IC to a manager or lead). Then clarify the behaviors that matter most for someone making that transition. From there, design one learning experience built around practicing those behaviors with real-time feedback, and decide upfront what observable evidence constitutes improvement.

Let's say you're focusing on someone being promoted from an IC to a people manager. Where do new people managers struggle in your organization? Is it delivering feedback? Delegating tasks? Handling criticism or pushback? Pick one that surfaces across engagement survey data or other sources, and design the learning experience around it.

Then, and most importantly, decouple it from your performance management processes. Don't build a skills taxonomy that someone will be measured against, but rather something they can use to deliberately develop in line with organizational needs.

When employees trust that a skills framework exists to help them grow, adoption follows.

Trend 5: Imagining an L&D tech stack without an LMS

Is this finally the end of the LMS?

As I’ve previously shared, I’m no soothsayer (I was, however, an English major). I can’t predict the demise of the LMS with any certainty, as much as I would like to, and I know others have surely tried.

In our survey of 400+ L&D practitioners late last year, only 47% of respondents said they think the LMS will be the backbone of their tech stack in three years. I understand the sentiment, but I'm skeptical of the timeline.

That being said, I’m seeing genuine progress towards a truly headless platform with products like XCL. That's exciting. Because the truth is, most LMS architectures are fundamentally misaligned with how people engage with training.

If someone is a frontline worker on a tarmac at an airport, and they need to verify a safety measure based on a training that was just released, what are they going to do? Go digging through an LMS app that requires two-factor authentication and then click through a course?

Of course not. They're going to search for the answer wherever it's fastest, even if the information they receive is outdated or inaccurate.

We would all benefit from richer data and more robust analytics, which most LMSs and SCORM packages can't provide. A truly headless solution would allow us to deliver learning experiences wherever they make the most sense (whether that be a video, eLearning course, or in-person workshop) and track detailed inputs correlated to our learning outcomes. That's something I can get behind.

How to act on this trend

I wouldn’t recommend ripping out your LMS tomorrow (especially if it houses critical compliance tracking). Instead, I’d audit high-impact training delivered asynchronously. Something where the stakes are high, but the feedback isn’t what you want. Maybe only 25% of your employees are watching a video about an important process, but you’re not sure why you’re losing them because SCORM doesn’t track that level of detail.

Then, try to pilot an alternative. Consider delivering the video in the flow of work and using xAPI to track how people engage with it. Is the behavior the same? If so, you’ll need to dig into the analytics to redesign the training. If not, then the delivery is the issue. Either way, you’ll have the insights you need to develop more impactful training.
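For a sense of the detail xAPI can capture, here is a rough sketch of what video tracking data might look like and how you could compute drop-off from it. The statement shape (actor, verb, object, result) follows the xAPI specification. The verb and extension IRIs are taken from the xAPI Video Profile as best I understand it, so check them against the profile your tools use. The helper names, email addresses, and video URL are hypothetical, and the sketch only builds and analyzes statements; it does not send them to a Learning Record Store.

```python
# IRIs assumed from the xAPI Video Profile; verify against your tooling.
VERBS = {
    "played": "https://w3id.org/xapi/video/verbs/played",
    "paused": "https://w3id.org/xapi/video/verbs/paused",
    "completed": "http://adlnet.gov/expapi/verbs/completed",
}
VIDEO_TYPE = "https://w3id.org/xapi/video/activity-type/video"
TIME_EXT = "https://w3id.org/xapi/video/extensions/time"


def video_statement(email, video_id, verb, seconds):
    """Build one xAPI-style statement for a video event."""
    return {
        "actor": {"objectType": "Agent", "mbox": f"mailto:{email}"},
        "verb": {"id": VERBS[verb], "display": {"en-US": verb}},
        "object": {
            "objectType": "Activity",
            "id": video_id,
            "definition": {"type": VIDEO_TYPE},
        },
        # Playback position (in seconds) when the event happened.
        "result": {"extensions": {TIME_EXT: seconds}},
    }


def share_reaching(statements, seconds):
    """Fraction of learners whose furthest playback point is >= seconds."""
    furthest = {}
    for s in statements:
        learner = s["actor"]["mbox"]
        t = s["result"]["extensions"][TIME_EXT]
        furthest[learner] = max(furthest.get(learner, 0), t)
    if not furthest:
        return 0.0
    reached = sum(1 for t in furthest.values() if t >= seconds)
    return reached / len(furthest)


video = "https://example.com/videos/safety-check"  # hypothetical activity ID
statements = [
    video_statement("a@example.com", video, "paused", 40),
    video_statement("b@example.com", video, "paused", 45),
    video_statement("c@example.com", video, "paused", 60),
    video_statement("d@example.com", video, "completed", 300),
]

print(f"Reached the 1-minute mark: {share_reaching(statements, 60):.0%}")
print(f"Reached the end (300s):    {share_reaching(statements, 300):.0%}")
```

With data like this, "only 25% finish" becomes "most people leave in the first minute," which tells you where to look when you redesign.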

And perhaps eventually, you can make the case internally for where to cut the friction out of your tech stack.

Where to start

The most strategic thing any L&D leader can do right now is to focus their attention. Pick one trend that resonates with you (or something else entirely). Then identify the friction it addresses in your organization, and start there.

And if you want to compare notes on what is or isn’t working, find me on LinkedIn. I’d love to chat.

Amy Vidor, PhD is a Learning & Development Evangelist at Synthesia, where she researches learning trends and helps organizations apply AI at scale. With 15 years of experience, she has advised companies, governments, and universities on skills.

Frequently asked questions

What are the most important learning and development trends for 2026?

The most important L&D trends for 2026 reflect a shift in expectations rather than a surge of new tools. As AI becomes a normal part of L&D workflows, organizations are increasingly focused on skills, performance, and measurable impact.

Mature L&D teams are designing learning systems that build capability in context, support performance in the flow of work, and hold up under scale. AI is an enabler in this shift, but differentiation now comes from learning design quality, governance, and how closely learning is tied to business outcomes.

How is AI changing learning and development in 2026?

AI is reducing the cost and time required to create and adapt learning content, while enabling more personalized learning experiences. But the biggest change is not speed.

In 2026, AI is pushing L&D teams to rethink how learning supports skill development and performance. The teams seeing the most impact are using AI to support diagnosis and guided practice rather than content production alone.

What does L&D maturity mean?

L&D maturity describes how effectively a learning function translates activity into real capability and performance.

Most models describe a similar spectrum: at one end, L&D is reactive, compliance-led and disconnected from business strategy. At the other, it's strategic and data-driven, designing learning systems around skills and outcomes that the business already tracks.

Maturity shows up in how L&D prioritizes work, how closely it partners with the business, and whether it can demonstrate impact beyond completion rates.

What should L&D teams measure beyond completion rates?

Completion rates and satisfaction scores no longer tell the full story.

In 2026, L&D teams are expanding measurement to include behavior change and business metrics: whether learning is helping people do their jobs more effectively and adapt as roles evolve. The goal is to measure what matters for performance.

How can L&D partner with other functions to drive business impact?

Driving business impact requires close partnership with functions like IT, security, legal, HR, and business leadership. These partnerships ensure that learning initiatives are secure, ethical, and aligned with real organizational needs.

In 2026, one of the clearest signs of L&D maturity is the ability to work cross-functionally to design learning systems that balance performance, responsibility, and human judgment.
